These are my notes from week 5 of the Cloudnet K8s Deploy study.

Lab environment

Worker Client-Side LoadBalancing

Deploying K8s with Kubespray

# Check inventory.ini

root@admin-lb:~/kubespray# cat /root/kubespray/inventory/mycluster/inventory.ini

[kube_control_plane]

k8s-node1 ansible_host=192.168.10.11 ip=192.168.10.11 etcd_member_name=etcd1

k8s-node2 ansible_host=192.168.10.12 ip=192.168.10.12 etcd_member_name=etcd2

k8s-node3 ansible_host=192.168.10.13 ip=192.168.10.13 etcd_member_name=etcd3

[etcd:children]

kube_control_plane

[kube_node]

k8s-node4 ansible_host=192.168.10.14 ip=192.168.10.14

#k8s-node5 ansible_host=192.168.10.15 ip=192.168.10.15

root@admin-lb:~/kubespray# ansible-inventory -i /root/kubespray/inventory/mycluster/inventory.ini --graph

@all:

|--@ungrouped:

|--@etcd:

| |--@kube_control_plane:

| | |--k8s-node1

| | |--k8s-node2

| | |--k8s-node3

|--@kube_node:

| |--k8s-node4

# k8s_cluster.yml # for every node in the cluster (not etcd when it's separate)

root@admin-lb:~/kubespray#

sed -i 's|kube_owner: kube|kube_owner: root|g' inventory/mycluster/group_vars/k8s_cluster/k8s-cluster.yml

sed -i 's|kube_network_plugin: calico|kube_network_plugin: flannel|g' inventory/mycluster/group_vars/k8s_cluster/k8s-cluster.yml

sed -i 's|kube_proxy_mode: ipvs|kube_proxy_mode: iptables|g' inventory/mycluster/group_vars/k8s_cluster/k8s-cluster.yml

sed -i 's|enable_nodelocaldns: true|enable_nodelocaldns: false|g' inventory/mycluster/group_vars/k8s_cluster/k8s-cluster.yml

grep -iE 'kube_owner|kube_network_plugin:|kube_proxy_mode|enable_nodelocaldns:' inventory/mycluster/group_vars/k8s_cluster/k8s-cluster.yml

kube_owner: root

kube_network_plugin: flannel

kube_proxy_mode: iptables

enable_nodelocaldns: false
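The `sed` commands above rewrite `key: value` pairs in `k8s-cluster.yml` in place. As a minimal sketch of the same idea in Python, the hypothetical helper `apply_overrides` below replaces only unindented, uncommented `key:` lines, so unlike a plain `sed` substitution it leaves commented-out defaults alone. The sample text is illustrative, not the real file.

```python
import re


def apply_overrides(text: str, overrides: dict) -> str:
    """Replace unindented `key: value` lines, similar to the sed edits above.

    Commented-out lines such as '# kube_owner: kube' are left untouched,
    because the pattern must match at the very start of a line.
    """
    for key, value in overrides.items():
        pattern = re.compile(rf"^{re.escape(key)}:.*$", re.MULTILINE)
        text = pattern.sub(lambda _m, k=key, v=value: f"{k}: {v}", text)
    return text


# Illustrative sample, not the real k8s-cluster.yml
sample = """kube_owner: kube
kube_network_plugin: calico
kube_proxy_mode: ipvs
# enable_nodelocaldns: true
enable_nodelocaldns: true
"""

result = apply_overrides(sample, {
    "kube_owner": "root",
    "kube_network_plugin": "flannel",
    "kube_proxy_mode": "iptables",
    "enable_nodelocaldns": "false",
})
print(result)
```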

## Do not install the CoreDNS autoscaler

root@admin-lb:~/kubespray# echo "enable_dns_autoscaler: false" >> inventory/mycluster/group_vars/k8s_cluster/k8s-cluster.yml

# Modify flannel settings

root@admin-lb:~/kubespray#

echo "flannel_interface: enp0s9" >> inventory/mycluster/group_vars/k8s_cluster/k8s-net-flannel.yml

grep "^[^#]" inventory/mycluster/group_vars/k8s_cluster/k8s-net-flannel.yml

flannel_interface: enp0s9

# addons

root@admin-lb:~/kubespray#

sed -i 's|metrics_server_enabled: false|metrics_server_enabled: true|g' inventory/mycluster/group_vars/k8s_cluster/addons.yml

grep -iE 'metrics_server_enabled:' inventory/mycluster/group_vars/k8s_cluster/addons.yml

metrics_server_enabled: true

root@admin-lb:~/kubespray#

echo "metrics_server_requests_cpu: 25m" >> inventory/mycluster/group_vars/k8s_cluster/addons.yml

echo "metrics_server_requests_memory: 16Mi" >> inventory/mycluster/group_vars/k8s_cluster/addons.yml

# Check supported versions

root@admin-lb:~/kubespray# cat roles/kubespray_defaults/vars/main/checksums.yml | grep -i kube -A40

kubelet_checksums:

arm64:

1.33.7: sha256:3035c44e0d429946d6b4b66c593d371cf5bbbfc85df39d7e2a03c422e4fe404a

1.33.6: sha256:7d8b7c63309cfe2da2331a1ae13cce070b9ba01e487099e7881a4281667c131d

...

# Deploy

root@admin-lb:~/kubespray# ANSIBLE_FORCE_COLOR=true ansible-playbook -i inventory/mycluster/inventory.ini -v cluster.yml -e kube_version="1.32.9" | tee kubespray_install.log

PLAY RECAP *********************************************************************

k8s-node1 : ok=529 changed=120 unreachable=0 failed=0 skipped=829 rescued=0 ignored=2

k8s-node2 : ok=499 changed=111 unreachable=0 failed=0 skipped=822 rescued=0 ignored=2

k8s-node3 : ok=504 changed=112 unreachable=0 failed=0 skipped=827 rescued=0 ignored=2

k8s-node4 : ok=437 changed=87 unreachable=0 failed=0 skipped=615 rescued=0 ignored=0

Saturday 07 February 2026 20:10:16 +0900 (0:00:00.140) 0:21:05.756 *****

===============================================================================

download : Download_file | Download item ------------------------------ 128.68s

download : Download_file | Download item ------------------------------ 111.99s

download : Download_container | Download image if required ------------- 84.87s

download : Download_file | Download item ------------------------------- 75.66s

download : Download_file | Download item ------------------------------- 69.44s

container-engine/containerd : Download_file | Download item ------------ 45.78s

download : Download_container | Download image if required ------------- 41.34s

download : Download_container | Download image if required ------------- 36.79s

download : Download_file | Download item ------------------------------- 36.44s

download : Download_container | Download image if required ------------- 36.40s

download : Download_container | Download image if required ------------- 35.93s

download : Download_file | Download item ------------------------------- 34.58s

container-engine/crictl : Download_file | Download item ---------------- 32.44s

download : Download_container | Download image if required ------------- 30.04s

download : Download_container | Download image if required ------------- 21.85s

download : Download_container | Download image if required ------------- 21.77s

container-engine/runc : Download_file | Download item ------------------ 21.39s

container-engine/nerdctl : Download_file | Download item --------------- 19.07s

download : Download_container | Download image if required ------------- 18.06s

kubernetes/kubeadm : Join to cluster if needed ------------------------- 16.17s
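To check a long `ansible-playbook` run without scrolling, the PLAY RECAP lines can be parsed. A rough sketch, assuming the `host : counter=value ...` layout shown above; `parse_recap` and `deployment_succeeded` are hypothetical helpers, not part of Ansible or Kubespray.

```python
import re

# "host : ok=1 changed=0 unreachable=0 failed=0 ..." as printed by ansible-playbook
RECAP_LINE = re.compile(r"^(?P<host>\S+)\s*:\s*(?P<stats>(?:\w+=\d+\s*)+)$")


def parse_recap(lines):
    """Return {host: {counter: int}} for every PLAY RECAP host line."""
    recap = {}
    for line in lines:
        match = RECAP_LINE.match(line.strip())
        if match is None:
            continue  # headers, task timing lines, banners, ...
        counters = re.findall(r"(\w+)=(\d+)", match.group("stats"))
        recap[match.group("host")] = {key: int(value) for key, value in counters}
    return recap


def deployment_succeeded(recap):
    """True when at least one host was parsed and none failed or was unreachable."""
    return bool(recap) and all(
        stats.get("failed", 0) == 0 and stats.get("unreachable", 0) == 0
        for stats in recap.values()
    )


log_lines = [
    "PLAY RECAP *********************************************************************",
    "k8s-node1 : ok=529 changed=120 unreachable=0 failed=0 skipped=829 rescued=0 ignored=2",
    "k8s-node4 : ok=437 changed=87 unreachable=0 failed=0 skipped=615 rescued=0 ignored=0",
    "download : Download_file | Download item ------------------------------ 128.68s",
]
recap = parse_recap(log_lines)
print(recap["k8s-node1"]["ok"], deployment_succeeded(recap))
```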

# Check the collected facts

root@admin-lb:~/kubespray# tree /tmp

/tmp

├── k8s-node1

├── k8s-node2

├── k8s-node3

├── k8s-node4

├── k9s_linux_arm64.tar.gz

├── LICENSE

├── README.md

├── systemd-private-f8d17866e8024c7088868da519e0e4f6-chronyd.service-4DUg97

│ └── tmp

├── systemd-private-f8d17866e8024c7088868da519e0e4f6-dbus-broker.service-mkAcPd

│ └── tmp

├── systemd-private-f8d17866e8024c7088868da519e0e4f6-irqbalance.service-ZXH9m9

│ └── tmp

├── systemd-private-f8d17866e8024c7088868da519e0e4f6-polkit.service-eqUWy2

│ └── tmp

├── systemd-private-f8d17866e8024c7088868da519e0e4f6-systemd-logind.service-KGGBUS

│ └── tmp

└── vagrant-shell

11 directories, 8 files

# Check etcd backups

root@admin-lb:~/kubespray# for i in {1..3}; do echo ">> k8s-node$i <<"; ssh k8s-node$i tree /var/backups; echo; done

>> k8s-node1 <<

/var/backups

└── etcd-2026-02-07_20:07:46

├── member

│ ├── snap

│ │ └── db

│ └── wal

│ └── 0000000000000000-0000000000000000.wal

└── snapshot.db

5 directories, 3 files

>> k8s-node2 <<

/var/backups

└── etcd-2026-02-07_20:07:45

├── member

│ ├── snap

│ │ └── db

│ └── wal

│ └── 0000000000000000-0000000000000000.wal

└── snapshot.db

5 directories, 3 files

>> k8s-node3 <<

/var/backups

└── etcd-2026-02-07_20:07:45

├── member

│ ├── snap

│ │ └── db

│ └── wal

│ └── 0000000000000000-0000000000000000.wal

└── snapshot.db

5 directories, 3 files

# Check k8s API access: by IP and by domain

root@admin-lb:~/kubespray# for i in {1..3}; do echo ">> k8s-node$i <<"; curl -sk https://192.168.10.1$i:6443/version | grep Version; echo; done

>> k8s-node1 <<

"gitVersion": "v1.32.9",

"goVersion": "go1.23.12",

>> k8s-node2 <<

"gitVersion": "v1.32.9",

"goVersion": "go1.23.12",

>> k8s-node3 <<

"gitVersion": "v1.32.9",

"goVersion": "go1.23.12",
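The loop above calls `/version` on each API server directly (`-k` skips TLS verification, and the endpoint is normally readable without credentials). A small sketch that checks all control plane nodes report the same `gitVersion`; the response bodies are hard-coded and trimmed from the output above rather than fetched over HTTPS, and the helper names are hypothetical.

```python
import json

# Trimmed /version bodies from the loop above (normally fetched with
# curl -sk https://<node-ip>:6443/version)
responses = {
    "k8s-node1": '{"gitVersion": "v1.32.9", "goVersion": "go1.23.12"}',
    "k8s-node2": '{"gitVersion": "v1.32.9", "goVersion": "go1.23.12"}',
    "k8s-node3": '{"gitVersion": "v1.32.9", "goVersion": "go1.23.12"}',
}


def api_versions(bodies):
    """Map node name -> gitVersion reported by that node's API server."""
    return {node: json.loads(body)["gitVersion"] for node, body in bodies.items()}


def versions_consistent(bodies):
    """True when every API server reports the same gitVersion."""
    return len(set(api_versions(bodies).values())) == 1


print(api_versions(responses), versions_consistent(responses))
```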

# Check the k8s admin credentials: each control plane node runs its own apiserver pod, so its kubeconfig uses the 127.0.0.1:6443 endpoint

root@admin-lb:~/kubespray# for i in {1..3}; do echo ">> k8s-node$i <<"; ssh k8s-node$i kubectl cluster-info -v=6; echo; done

>> k8s-node1 <<

I0207 20:13:00.225468 26612 loader.go:402] Config loaded from file: /root/.kube/config

I0207 20:13:00.226142 26612 envvar.go:172] "Feature gate default state" feature="ClientsAllowCBOR" enabled=false

I0207 20:13:00.226162 26612 envvar.go:172] "Feature gate default state" feature="ClientsPreferCBOR" enabled=false

I0207 20:13:00.226166 26612 envvar.go:172] "Feature gate default state" feature="InformerResourceVersion" enabled=false

I0207 20:13:00.226169 26612 envvar.go:172] "Feature gate default state" feature="WatchListClient" enabled=false

I0207 20:13:00.240440 26612 round_trippers.go:560] GET https://127.0.0.1:6443/api/v1/namespaces/kube-system/services?labelSelector=kubernetes.io%2Fcluster-service%3Dtrue 200 OK in 10 milliseconds

Kubernetes control plane is running at https://127.0.0.1:6443

To further debug and diagnose cluster problems, use 'kubectl cluster-info dump'.

>> k8s-node2 <<

I0207 20:13:00.590682 25937 loader.go:402] Config loaded from file: /root/.kube/config

I0207 20:13:00.593036 25937 envvar.go:172] "Feature gate default state" feature="InformerResourceVersion" enabled=false

I0207 20:13:00.593115 25937 envvar.go:172] "Feature gate default state" feature="WatchListClient" enabled=false

I0207 20:13:00.593126 25937 envvar.go:172] "Feature gate default state" feature="ClientsAllowCBOR" enabled=false

I0207 20:13:00.593129 25937 envvar.go:172] "Feature gate default state" feature="ClientsPreferCBOR" enabled=false

I0207 20:13:00.602801 25937 round_trippers.go:560] GET https://127.0.0.1:6443/api?timeout=32s 200 OK in 9 milliseconds

I0207 20:13:00.605851 25937 round_trippers.go:560] GET https://127.0.0.1:6443/apis?timeout=32s 200 OK in 1 milliseconds

I0207 20:13:00.621066 25937 round_trippers.go:560] GET https://127.0.0.1:6443/api/v1/namespaces/kube-system/services?labelSelector=kubernetes.io%2Fcluster-service%3Dtrue 200 OK in 7 milliseconds

Kubernetes control plane is running at https://127.0.0.1:6443

To further debug and diagnose cluster problems, use 'kubectl cluster-info dump'.

>> k8s-node3 <<

I0207 20:13:01.031387 26097 loader.go:402] Config loaded from file: /root/.kube/config

I0207 20:13:01.031962 26097 envvar.go:172] "Feature gate default state" feature="ClientsAllowCBOR" enabled=false

I0207 20:13:01.031979 26097 envvar.go:172] "Feature gate default state" feature="ClientsPreferCBOR" enabled=false

I0207 20:13:01.031982 26097 envvar.go:172] "Feature gate default state" feature="InformerResourceVersion" enabled=false

I0207 20:13:01.031985 26097 envvar.go:172] "Feature gate default state" feature="WatchListClient" enabled=false

I0207 20:13:01.051724 26097 round_trippers.go:560] GET https://127.0.0.1:6443/api/v1/namespaces/kube-system/services?labelSelector=kubernetes.io%2Fcluster-service%3Dtrue 200 OK in 12 milliseconds

Kubernetes control plane is running at https://127.0.0.1:6443

To further debug and diagnose cluster problems, use 'kubectl cluster-info dump'.

root@admin-lb:~/kubespray# mkdir /root/.kube

root@admin-lb:~/kubespray# scp k8s-node1:/root/.kube/config /root/.kube/

config 100% 5665 4.3MB/s 00:00

root@admin-lb:~/kubespray# cat /root/.kube/config | grep server

server: https://127.0.0.1:6443

# Change the API server address from localhost to the IP of control plane node 1. If node 1 fails, you have to switch it to another node's IP by hand.

root@admin-lb:~/kubespray# kubectl get node -owide -v=6

I0207 20:15:23.672088 13886 loader.go:402] Config loaded from file: /root/.kube/config

I0207 20:15:23.672737 13886 envvar.go:172] "Feature gate default state" feature="ClientsPreferCBOR" enabled=false

I0207 20:15:23.672754 13886 envvar.go:172] "Feature gate default state" feature="InformerResourceVersion" enabled=false

I0207 20:15:23.672844 13886 envvar.go:172] "Feature gate default state" feature="WatchListClient" enabled=false

I0207 20:15:23.672850 13886 envvar.go:172] "Feature gate default state" feature="ClientsAllowCBOR" enabled=false

I0207 20:15:23.682914 13886 round_trippers.go:560] GET https://127.0.0.1:6443/api?timeout=32s 200 OK in 9 milliseconds

I0207 20:15:23.686566 13886 round_trippers.go:560] GET https://127.0.0.1:6443/apis?timeout=32s 200 OK in 2 milliseconds

I0207 20:15:23.706069 13886 round_trippers.go:560] GET https://127.0.0.1:6443/api/v1/nodes?limit=500 200 OK in 10 milliseconds

NAME STATUS ROLES AGE VERSION INTERNAL-IP EXTERNAL-IP OS-IMAGE KERNEL-VERSION CONTAINER-RUNTIME

k8s-node1 Ready control-plane 7m2s v1.32.9 192.168.10.11 <none> Rocky Linux 10.0 (Red Quartz) 6.12.0-55.39.1.el10_0.aarch64 containerd://2.1.5

k8s-node2 Ready control-plane 6m51s v1.32.9 192.168.10.12 <none> Rocky Linux 10.0 (Red Quartz) 6.12.0-55.39.1.el10_0.aarch64 containerd://2.1.5

k8s-node3 Ready control-plane 6m47s v1.32.9 192.168.10.13 <none> Rocky Linux 10.0 (Red Quartz) 6.12.0-55.39.1.el10_0.aarch64 containerd://2.1.5

k8s-node4 Ready <none> 6m13s v1.32.9 192.168.10.14 <none> Rocky Linux 10.0 (Red Quartz) 6.12.0-55.39.1.el10_0.aarch64 containerd://2.1.5

root@admin-lb:~/kubespray# sed -i 's/127.0.0.1/192.168.10.11/g' /root/.kube/config

root@admin-lb:~/kubespray# kubectl get node -owide -v=6

I0207 20:15:45.768176 13892 loader.go:402] Config loaded from file: /root/.kube/config

I0207 20:15:45.771057 13892 envvar.go:172] "Feature gate default state" feature="ClientsAllowCBOR" enabled=false

I0207 20:15:45.771100 13892 envvar.go:172] "Feature gate default state" feature="ClientsPreferCBOR" enabled=false

I0207 20:15:45.771105 13892 envvar.go:172] "Feature gate default state" feature="InformerResourceVersion" enabled=false

I0207 20:15:45.771109 13892 envvar.go:172] "Feature gate default state" feature="WatchListClient" enabled=false

I0207 20:15:45.786541 13892 round_trippers.go:560] GET https://192.168.10.11:6443/api?timeout=32s 200 OK in 15 milliseconds

I0207 20:15:45.793527 13892 round_trippers.go:560] GET https://192.168.10.11:6443/apis?timeout=32s 200 OK in 3 milliseconds

I0207 20:15:45.812924 13892 round_trippers.go:560] GET https://192.168.10.11:6443/api/v1/nodes?limit=500 200 OK in 8 milliseconds

NAME STATUS ROLES AGE VERSION INTERNAL-IP EXTERNAL-IP OS-IMAGE KERNEL-VERSION CONTAINER-RUNTIME

k8s-node1 Ready control-plane 7m24s v1.32.9 192.168.10.11 <none> Rocky Linux 10.0 (Red Quartz) 6.12.0-55.39.1.el10_0.aarch64 containerd://2.1.5

k8s-node2 Ready control-plane 7m13s v1.32.9 192.168.10.12 <none> Rocky Linux 10.0 (Red Quartz) 6.12.0-55.39.1.el10_0.aarch64 containerd://2.1.5

k8s-node3 Ready control-plane 7m9s v1.32.9 192.168.10.13 <none> Rocky Linux 10.0 (Red Quartz) 6.12.0-55.39.1.el10_0.aarch64 containerd://2.1.5

k8s-node4 Ready <none> 6m35s v1.32.9 192.168.10.14 <none> Rocky Linux 10.0 (Red Quartz) 6.12.0-55.39.1.el10_0.aarch64 containerd://2.1.5
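Pointing the admin kubeconfig at `192.168.10.11` works, but as the comment above notes, a node 1 outage means editing the file by hand. A hedged sketch of automating that choice: probe each control plane IP and rewrite every `server:` line to the first healthy one. `pick_server`, `tcp_probe`, and `rewrite_server` are hypothetical names, and a TCP connect is only a coarse health signal (an HTTPS `/readyz` check would be stricter).

```python
import re
import socket
from urllib.parse import urlparse

CONTROL_PLANE_IPS = ["192.168.10.11", "192.168.10.12", "192.168.10.13"]


def tcp_probe(url, timeout=1.0):
    """Coarse health check: can a TCP connection to host:port be opened?"""
    parsed = urlparse(url)
    try:
        with socket.create_connection((parsed.hostname, parsed.port), timeout=timeout):
            return True
    except OSError:
        return False


def pick_server(hosts, is_healthy, port=6443):
    """Return the first https://host:port that passes is_healthy, else None."""
    for host in hosts:
        url = f"https://{host}:{port}"
        if is_healthy(url):
            return url
    return None


def rewrite_server(kubeconfig_text, new_server):
    """Point every 'server:' entry of a kubeconfig at new_server."""
    return re.sub(
        r"^([ \t]*server:[ \t]*)\S+",
        lambda m: m.group(1) + new_server,
        kubeconfig_text,
        flags=re.MULTILINE,
    )


# Possible use on admin-lb (kept as a comment; not run here):
# text = open("/root/.kube/config").read()
# server = pick_server(CONTROL_PLANE_IPS, tcp_probe)
# if server:
#     open("/root/.kube/config", "w").write(rewrite_server(text, server))
```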

# Check the pod CIDR of each node

root@admin-lb:~/kubespray# kubectl get nodes -o jsonpath='{range .items[*]}{.metadata.name}{"\t"}{.spec.podCIDR}{"\n"}{end}'

k8s-node1 10.233.64.0/24

k8s-node2 10.233.65.0/24

k8s-node3 10.233.66.0/24

k8s-node4 10.233.67.0/24
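Each node gets a /24 carved out of the cluster pod network `10.233.64.0/18` (the `clusterCIDR` that also appears in the kube-proxy ConfigMap further down). The arithmetic, checked with Python's `ipaddress` module: a /18 split into /24s yields 2^(24-18) = 64 node subnets of 256 addresses each. The /24 per-node prefix is inferred from the output above; the helper names are hypothetical.

```python
import ipaddress

CLUSTER_CIDR = "10.233.64.0/18"  # clusterCIDR in the kube-proxy ConfigMap
NODE_CIDRS = {  # from: kubectl get nodes -o jsonpath='...{.spec.podCIDR}...'
    "k8s-node1": "10.233.64.0/24",
    "k8s-node2": "10.233.65.0/24",
    "k8s-node3": "10.233.66.0/24",
    "k8s-node4": "10.233.67.0/24",
}


def node_subnets(cluster_cidr, node_prefix=24):
    """All per-node blocks a /node_prefix split of the cluster CIDR can hand out."""
    return list(ipaddress.ip_network(cluster_cidr).subnets(new_prefix=node_prefix))


def all_within(cluster_cidr, node_cidrs):
    """True when every node podCIDR lies inside the cluster pod network."""
    cluster = ipaddress.ip_network(cluster_cidr)
    return all(ipaddress.ip_network(c).subnet_of(cluster) for c in node_cidrs.values())


subnets = node_subnets(CLUSTER_CIDR)
print(len(subnets), subnets[0].num_addresses, all_within(CLUSTER_CIDR, NODE_CIDRS))
```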

# Check etcd info: member names

root@admin-lb:~/kubespray# ssh k8s-node1 etcdctl.sh member list -w table

+------------------+---------+-------+----------------------------+----------------------------+------------+

| ID | STATUS | NAME | PEER ADDRS | CLIENT ADDRS | IS LEARNER |

+------------------+---------+-------+----------------------------+----------------------------+------------+

| 8b0ca30665374b0 | started | etcd3 | https://192.168.10.13:2380 | https://192.168.10.13:2379 | false |

| 2106626b12a4099f | started | etcd2 | https://192.168.10.12:2380 | https://192.168.10.12:2379 | false |

| c6702130d82d740f | started | etcd1 | https://192.168.10.11:2380 | https://192.168.10.11:2379 | false |

+------------------+---------+-------+----------------------------+----------------------------+------------+

root@admin-lb:~/kubespray# for i in {1..3}; do echo ">> k8s-node$i <<"; ssh k8s-node$i etcdctl.sh endpoint status -w table; echo; done

>> k8s-node1 <<

+----------------+------------------+---------+---------+-----------+------------+-----------+------------+--------------------+--------+

| ENDPOINT | ID | VERSION | DB SIZE | IS LEADER | IS LEARNER | RAFT TERM | RAFT INDEX | RAFT APPLIED INDEX | ERRORS |

+----------------+------------------+---------+---------+-----------+------------+-----------+------------+--------------------+--------+

| 127.0.0.1:2379 | c6702130d82d740f | 3.5.25 | 5.6 MB | true | false | 4 | 2687 | 2687 | |

+----------------+------------------+---------+---------+-----------+------------+-----------+------------+--------------------+--------+

>> k8s-node2 <<

+----------------+------------------+---------+---------+-----------+------------+-----------+------------+--------------------+--------+

| ENDPOINT | ID | VERSION | DB SIZE | IS LEADER | IS LEARNER | RAFT TERM | RAFT INDEX | RAFT APPLIED INDEX | ERRORS |

+----------------+------------------+---------+---------+-----------+------------+-----------+------------+--------------------+--------+

| 127.0.0.1:2379 | 2106626b12a4099f | 3.5.25 | 5.6 MB | false | false | 4 | 2687 | 2687 | |

+----------------+------------------+---------+---------+-----------+------------+-----------+------------+--------------------+--------+

>> k8s-node3 <<

+----------------+-----------------+---------+---------+-----------+------------+-----------+------------+--------------------+--------+

| ENDPOINT | ID | VERSION | DB SIZE | IS LEADER | IS LEARNER | RAFT TERM | RAFT INDEX | RAFT APPLIED INDEX | ERRORS |

+----------------+-----------------+---------+---------+-----------+------------+-----------+------------+--------------------+--------+

| 127.0.0.1:2379 | 8b0ca30665374b0 | 3.5.25 | 5.6 MB | false | false | 4 | 2687 | 2687 | |

+----------------+-----------------+---------+---------+-----------+------------+-----------+------------+--------------------+--------+
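All three endpoints report the same RAFT TERM (4), and exactly one of them is the leader. With three members, Raft needs a majority of 2 votes to commit, so the cluster survives the loss of one member; a fourth member would not raise that tolerance. A short sketch of the arithmetic plus a sanity check over values copied from the tables (RAFT INDEX is left out because it can briefly differ between healthy members). The helper names are hypothetical.

```python
def quorum(members):
    """Votes Raft needs to elect a leader or commit an entry: a strict majority."""
    return members // 2 + 1


def fault_tolerance(members):
    """How many members can fail while a quorum remains."""
    return members - quorum(members)


# Values copied from the endpoint status tables above
STATUS = {
    "c6702130d82d740f": {"is_leader": True, "raft_term": 4},
    "2106626b12a4099f": {"is_leader": False, "raft_term": 4},
    "8b0ca30665374b0": {"is_leader": False, "raft_term": 4},
}


def looks_healthy(status):
    """Exactly one leader and every member in the same Raft term (a snapshot check)."""
    leaders = [member for member, s in status.items() if s["is_leader"]]
    terms = {s["raft_term"] for s in status.values()}
    return len(leaders) == 1 and len(terms) == 1


for n in (1, 3, 4, 5):
    print(n, quorum(n), fault_tolerance(n))
print(looks_healthy(STATUS))
```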

# Check control plane components

# Check certificate information: only control plane node 1 (ctrl1) has super-admin.conf

root@admin-lb:~/kubespray# for i in {1..3}; do echo ">> k8s-node$i <<"; ssh k8s-node$i ls -l /etc/kubernetes/super-admin.conf ; echo; done

>> k8s-node1 <<

-rw-------. 1 root root 5693 Feb 7 20:08 /etc/kubernetes/super-admin.conf

>> k8s-node2 <<

ls: cannot access '/etc/kubernetes/super-admin.conf': No such file or directory

>> k8s-node3 <<

ls: cannot access '/etc/kubernetes/super-admin.conf': No such file or directory

# Check that Kubespray tasks also set up the host itself to resolve Service domain names, e.g. by putting the cluster DNS at the top of the nameserver list

root@admin-lb:~/kubespray# ssh k8s-node1 cat /etc/resolv.conf

# Generated by NetworkManager

search default.svc.cluster.local svc.cluster.local

nameserver 10.233.0.3

nameserver 168.126.63.1

nameserver 168.126.63.2

options ndots:2 timeout:2 attempts:2

## A node that has not joined k8s yet keeps its default DNS settings

root@admin-lb:~/kubespray# ssh k8s-node5 cat /etc/resolv.conf

# Generated by NetworkManager

nameserver 168.126.63.1

nameserver 168.126.63.2
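On k8s-node1 the cluster DNS `10.233.0.3` comes first, and the `search` list plus `options ndots:2` decide which names the host actually queries. A simplified sketch of glibc-style search ordering (it ignores `timeout`/`attempts` and nameserver fallback): names with fewer dots than `ndots` are tried with each search suffix before being tried as-is. `candidate_queries` is a hypothetical helper.

```python
def candidate_queries(name, search, ndots):
    """Order in which a glibc-style stub resolver tries names (simplified)."""
    if name.endswith("."):
        return [name]  # fully qualified: the search list is not used
    absolute = name + "."
    with_search = [f"{name}.{domain}." for domain in search]
    if name.count(".") >= ndots:
        return [absolute] + with_search  # enough dots: try the name as-is first
    return with_search + [absolute]      # too few dots: search suffixes first


SEARCH = ["default.svc.cluster.local", "svc.cluster.local"]  # k8s-node1 resolv.conf
for query in ("kubernetes", "kubernetes.default", "www.google.com"):
    print(query, candidate_queries(query, SEARCH, ndots=2))
```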

# As with kubeadm, confirm that the kubelet uses a CSR when a node first joins

root@admin-lb:~/kubespray# kubectl get csr

NAME AGE SIGNERNAME REQUESTOR REQUESTEDDURATION CONDITION

csr-6k48r 19m kubernetes.io/kube-apiserver-client-kubelet system:node:k8s-node1 <none> Approved,Issued

csr-gb9vm 19m kubernetes.io/kube-apiserver-client-kubelet system:bootstrap:kum2ky <none> Approved,Issued

csr-knbps 19m kubernetes.io/kube-apiserver-client-kubelet system:bootstrap:exiimk <none> Approved,Issued

csr-s67nd 18m kubernetes.io/kube-apiserver-client-kubelet system:bootstrap:qfuixm <none> Approved,Issued

K8s API endpoints

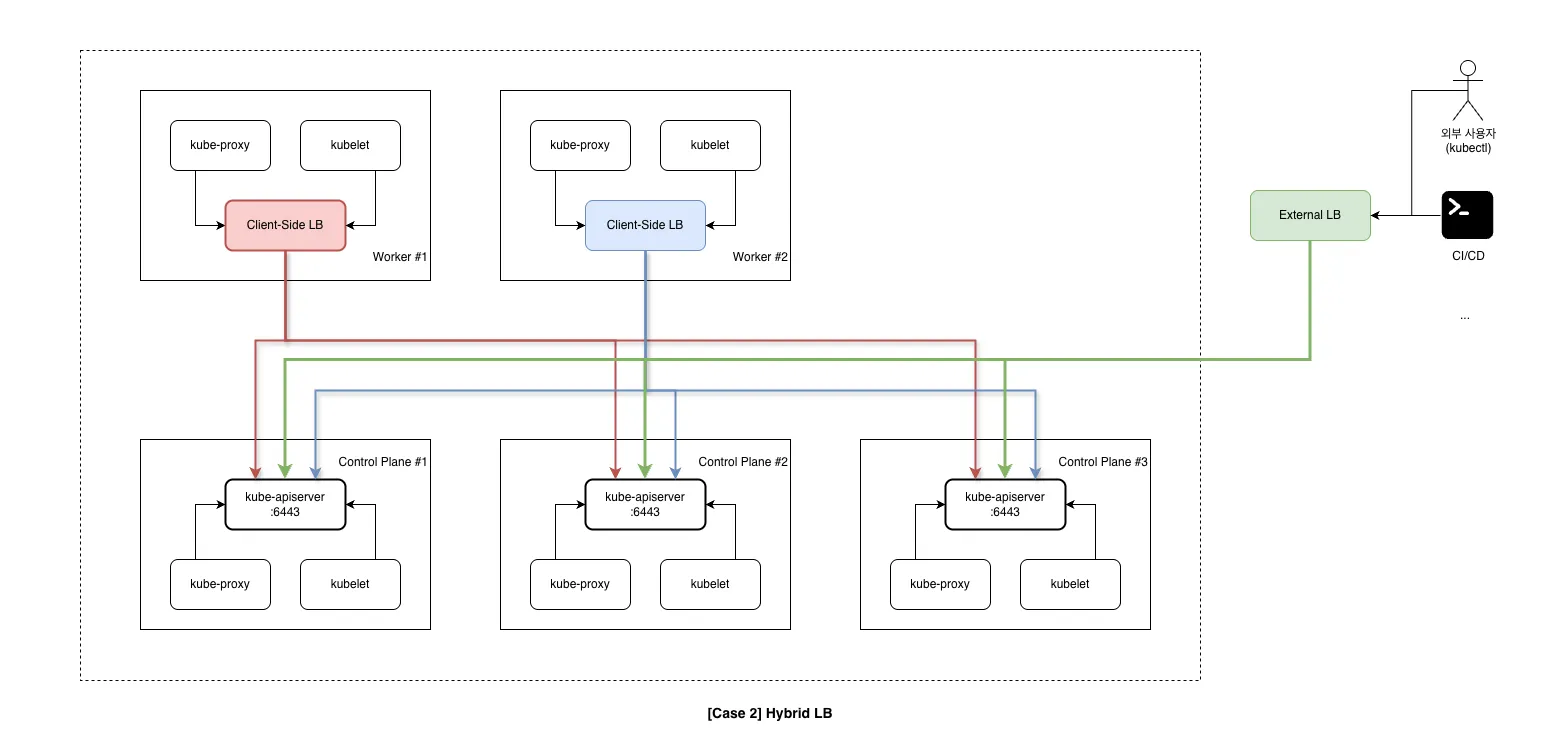

[Case1] HA control plane nodes (3) + Worker Client-Side LoadBalancing

# worker (kubelet, kube-proxy) -> k8s api

# Check info on the worker node

root@admin-lb:~/kubespray# ssh k8s-node4 crictl ps

CONTAINER IMAGE CREATED STATE NAME ATTEMPT POD ID POD NAMESPACE

5a8890e37eb3e bc6c1e09a843d 22 minutes ago Running metrics-server 0 3378bf7da8a54 metrics-server-65fdf69dcb-22c5b kube-system

62cc3eb40ea31 2f6c962e7b831 23 minutes ago Running coredns 0 91d5017d28880 coredns-664b99d7c7-pztdv kube-system

0269a1164273d cadcae92e6360 23 minutes ago Running kube-flannel 0 e31417e29224a kube-flannel-ds-arm64-7cvdk kube-system

080f1a580e301 72b57ec14d31e 23 minutes ago Running kube-proxy 0 975654efc1267 kube-proxy-ngmpt kube-system

05ea62f151eb2 5a91d90f47ddf 23 minutes ago Running nginx-proxy 0 0529e6ee0ed27 nginx-proxy-k8s-node4 kube-system

root@admin-lb:~/kubespray# ssh k8s-node4 cat /etc/nginx/nginx.conf

error_log stderr notice;

worker_processes 2;

worker_rlimit_nofile 130048;

worker_shutdown_timeout 10s;

events {

multi_accept on;

use epoll;

worker_connections 16384;

}

stream {

upstream kube_apiserver {

least_conn;

server 192.168.10.11:6443;

server 192.168.10.12:6443;

server 192.168.10.13:6443;

}

server {

listen 127.0.0.1:6443;

proxy_pass kube_apiserver;

proxy_timeout 10m;

proxy_connect_timeout 1s;

}

}

http {

aio threads;

aio_write on;

tcp_nopush on;

tcp_nodelay on;

keepalive_timeout 5m;

keepalive_requests 100;

reset_timedout_connection on;

server_tokens off;

autoindex off;

server {

listen 8081;

location /healthz {

access_log off;

return 200;

}

location /stub_status {

stub_status on;

access_log off;

}

}

}
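The `stream` block turns nginx into a TCP (layer 4) proxy: components on the worker connect to `127.0.0.1:6443`, and nginx forwards each connection to one of the three API servers using `least_conn`. A simplified Python model of that choice, assuming ties are broken by list order (nginx actually falls back to weighted round-robin among tied servers) and that an unreachable server is simply skipped, which is roughly what the 1s `proxy_connect_timeout` plus nginx retrying the next upstream achieve. `Upstream` and `pick_least_conn` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass
class Upstream:
    address: str
    active: int = 0   # currently proxied connections
    up: bool = True   # False models a server that fails to connect


def pick_least_conn(pool):
    """Reachable upstream with the fewest active connections (ties: list order)."""
    candidates = [u for u in pool if u.up]
    if not candidates:
        raise RuntimeError("no kube-apiserver upstream available")
    return min(candidates, key=lambda u: u.active)


pool = [Upstream("192.168.10.11:6443"), Upstream("192.168.10.12:6443"),
        Upstream("192.168.10.13:6443")]
for _ in range(6):            # six long-lived clients (watches, kubelet, ...)
    pick_least_conn(pool).active += 1
pool[0].up = False            # control plane node 1 goes down
for _ in range(3):            # new connections avoid it (dropped ones are not modeled)
    pick_least_conn(pool).active += 1
print([(u.address, u.active) for u in pool])
```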

root@admin-lb:~/kubespray# ssh k8s-node4 curl -s localhost:8081/healthz -I

HTTP/1.1 200 OK

Server: nginx

Date: Sat, 07 Feb 2026 11:34:11 GMT

Content-Type: text/plain

Content-Length: 0

Connection: keep-alive

root@admin-lb:~/kubespray# ssh k8s-node4 curl -sk https://127.0.0.1:6443/version | grep Version

"gitVersion": "v1.32.9",

"goVersion": "go1.23.12",

root@admin-lb:~/kubespray# ssh k8s-node4 ss -tnlp | grep nginx

LISTEN 0 511 0.0.0.0:8081 0.0.0.0:* users:(("nginx",pid=15051,fd=6),("nginx",pid=15050,fd=6),("nginx",pid=15023,fd=6))

LISTEN 0 511 127.0.0.1:6443 0.0.0.0:* users:(("nginx",pid=15051,fd=5),("nginx",pid=15050,fd=5),("nginx",pid=15023,fd=5))

# Check the endpoint the kubelet (client) uses to call the api-server: https://localhost:6443

root@admin-lb:~/kubespray# ssh k8s-node4 cat /etc/kubernetes/kubelet.conf

apiVersion: v1

clusters:

- cluster:

certificate-authority-data: LS0tLS1CRUdJTiBDRVJUSUZJQ0FURS0tLS0tCk1JSURCVENDQWUyZ0F3SUJBZ0lJYkQzaEF0Si9CYk13RFFZSktvWklodmNOQVFFTEJRQXdGVEVUTUJFR0ExVUUKQXhNS2EzVmlaWEp1WlhSbGN6QWVGdzB5TmpBeU1EY3hNVEF6TVRWYUZ3MHpOakF5TURVeE1UQTRNVFZhTUJVeApFekFSQmdOVkJBTVRDbXQxWW1WeWJtVjBaWE13Z2dFaU1BMEdDU3FHU0liM0RRRUJBUVVBQTRJQkR3QXdnZ0VLCkFvSUJBUUNmOVlOVklZcnVPSVlmUVFlUnB3em1ORjdyb0lxTlhYT29IWC84U0RhcTUzN1Y4SkJnaUl3L3MwWmgKZ04vekk3N28rV1dTZ3Y0UzVIR3NIR29XWnhRNE9xejAvRS9jUFRJK1Q5ZzFhZFBXSDNHSFN6WS8wNWkya1hzagpsNGtHV0xiTVZYRHlMNW5jZFJpY3N2a2ZqTVJzQVpld2tXK0taUjl2aGJMTXh5M0tqUEd5cS9VVVJtTE1JbUxEClg2WHY4MHZVd2h5RjNUaEFCVExGRjE2Um9BaEJlTW53c3RvdkVDelVVQVExOTRlbFFHL2NhWXZsREl1VkVGcGQKckxxY29FdG5YRHp3dzN1SXUzaVdSZjg3Q2VCMVUyY1hrUmllY1k4elh0ZHJST2FpKzl5ZHRxMEkwOGExazN6ZQpVNDVTYUVlaUdQaGVUSWcvVVdEcDJqYzRBSXI5QWdNQkFBR2pXVEJYTUE0R0ExVWREd0VCL3dRRUF3SUNwREFQCkJnTlZIUk1CQWY4RUJUQURBUUgvTUIwR0ExVWREZ1FXQkJUREwwRVh4d3FaK2ttOEJwYnNPL3JpQXJtSjV6QVYKQmdOVkhSRUVEakFNZ2dwcmRXSmxjbTVsZEdWek1BMEdDU3FHU0liM0RRRUJDd1VBQTRJQkFRQnJOVUVyZWp4MwpHN0F4WmtwODFqRVBxbk5LV3BoV2Jkc1ZKc3JDU1VySGRwTWkvNm9vZ0VSQ2tHdXArV1RheTVneFU3M3krVStsCjZIUTltcytQRlo5VU4zUjVYOTJZRkU4bmZhQXhvM01YeXFrd2JGTW5ISWlqSS9vMGMzZk9ObFRFWC9URm9wSDEKSVM1eWRxZ3Fxd0k4VmZkeHJ5Ui9LUHVFS3JVQ0lpcGVaVFc0RHRnMGZiY0VBMVJ2UEpoVXhQOHppTTNCYmFRdApUcDZlZHRJYjFqaDREUmxLOXUvcHQrR2R3ZWxNSVpTRHhXc3JGTGNSVEd6R2xFRnBMbFBFNVF4SnB4bVcwTWdxCk1PSkRHQ0NETVc5a2tjeEs0SnVjaG10bEwzYngwVHdVMWsrTXhoSE05YVcrT1dMOEgrRU14RnVyMFNnVlZGU2oKVW9zQU9KdURYMXNLCi0tLS0tRU5EIENFUlRJRklDQVRFLS0tLS0K

server: https://localhost:6443

name: default-cluster

contexts:

- context:

cluster: default-cluster

namespace: default

user: default-auth

name: default-context

current-context: default-context

kind: Config

preferences: {}

users:

- name: default-auth

user:

client-certificate: /var/lib/kubelet/pki/kubelet-client-current.pem

client-key: /var/lib/kubelet/pki/kubelet-client-current.pem

# Check the endpoint kube-proxy (client) uses to call the api-server

root@admin-lb:~/kubespray# kubectl get cm -n kube-system kube-proxy -o yaml

apiVersion: v1

data:

config.conf: |-

apiVersion: kubeproxy.config.k8s.io/v1alpha1

bindAddress: 0.0.0.0

bindAddressHardFail: false

clientConnection:

acceptContentTypes: ""

burst: 10

contentType: application/vnd.kubernetes.protobuf

kubeconfig: /var/lib/kube-proxy/kubeconfig.conf

qps: 5

clusterCIDR: 10.233.64.0/18

configSyncPeriod: 15m0s

conntrack:

maxPerCore: 32768

min: 131072

tcpBeLiberal: false

tcpCloseWaitTimeout: 1h0m0s

tcpEstablishedTimeout: 24h0m0s

udpStreamTimeout: 0s

udpTimeout: 0s

detectLocal:

bridgeInterface: ""

interfaceNamePrefix: ""

detectLocalMode: ""

enableProfiling: false

healthzBindAddress: 0.0.0.0:10256

hostnameOverride: k8s-node1

iptables:

localhostNodePorts: null

masqueradeAll: false

masqueradeBit: 14

minSyncPeriod: 0s

syncPeriod: 30s

ipvs:

excludeCIDRs: []

minSyncPeriod: 0s

scheduler: rr

strictARP: false

syncPeriod: 30s

tcpFinTimeout: 0s

tcpTimeout: 0s

udpTimeout: 0s

kind: KubeProxyConfiguration

logging:

flushFrequency: 0

options:

json:

infoBufferSize: "0"

text:

infoBufferSize: "0"

verbosity: 0

metricsBindAddress: 127.0.0.1:10249

mode: iptables

nftables:

masqueradeAll: false

masqueradeBit: null

minSyncPeriod: 0s

syncPeriod: 0s

nodePortAddresses: []

oomScoreAdj: -999

portRange: ""

showHiddenMetricsForVersion: ""

winkernel:

enableDSR: false

forwardHealthCheckVip: false

networkName: ""

rootHnsEndpointName: ""

sourceVip: ""

kubeconfig.conf: |-

apiVersion: v1

kind: Config

clusters:

- cluster:

certificate-authority: /var/run/secrets/kubernetes.io/serviceaccount/ca.crt

server: https://127.0.0.1:6443

name: default

contexts:

- context:

cluster: default

namespace: default

user: default

name: default

current-context: default

users:

- name: default

user:

tokenFile: /var/run/secrets/kubernetes.io/serviceaccount/token

kind: ConfigMap

metadata:

creationTimestamp: "2026-02-07T11:08:24Z"

labels:

app: kube-proxy

name: kube-proxy

namespace: kube-system

resourceVersion: "686"

uid: 75f71f01-34a8-4a84-92b4-a823f4032c7c

root@admin-lb:~/kubespray# kubectl get cm -n kube-system kube-proxy -o yaml | grep 'kubeconfig.conf:' -A18

kubeconfig.conf: |-

apiVersion: v1

kind: Config

clusters:

- cluster:

certificate-authority: /var/run/secrets/kubernetes.io/serviceaccount/ca.crt

server: https://127.0.0.1:6443

name: default

contexts:

- context:

cluster: default

namespace: default

user: default

name: default

current-context: default

users:

- name: default

user:

tokenFile: /var/run/secrets/kubernetes.io/serviceaccount/token
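Both clients on the worker reach the API through a loopback address: the kubelet uses `https://localhost:6443` and kube-proxy uses `https://127.0.0.1:6443`. On k8s-node4 that address is served by the nginx-proxy static pod, while on the control plane nodes it reaches the local kube-apiserver, as the earlier `cluster-info` output shows; that is why one shared kube-proxy ConfigMap works on every node. A small sketch that classifies a kubeconfig `server` URL as loopback; `is_loopback_endpoint` is a hypothetical helper.

```python
import ipaddress
from urllib.parse import urlparse


def is_loopback_endpoint(server_url):
    """True when a kubeconfig server URL points at this host's loopback."""
    host = urlparse(server_url).hostname
    if host is None:
        return False
    if host == "localhost":
        return True
    try:
        return ipaddress.ip_address(host).is_loopback
    except ValueError:
        return False  # some other DNS name; cannot tell without resolving it


CLIENT_SERVERS = {
    "kubelet (/etc/kubernetes/kubelet.conf)": "https://localhost:6443",
    "kube-proxy (kubeconfig.conf)": "https://127.0.0.1:6443",
    "admin kubeconfig after sed": "https://192.168.10.11:6443",
}
for client, server in CLIENT_SERVERS.items():
    print(client, server, is_loopback_endpoint(server))
```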

# Task that generates nginx.conf

root@admin-lb:~/kubespray# tree roles/kubernetes/node/tasks/loadbalancer

roles/kubernetes/node/tasks/loadbalancer

├── haproxy.yml

├── kube-vip.yml

└── nginx-proxy.yml

1 directory, 3 files

root@admin-lb:~/kubespray# cat roles/kubernetes/node/tasks/loadbalancer/nginx-proxy.yml

---

- name: Haproxy | Cleanup potentially deployed haproxy

file:

path: "{{ kube_manifest_dir }}/haproxy.yml"

state: absent

- name: Nginx-proxy | Make nginx directory

file:

path: "{{ nginx_config_dir }}"

state: directory

mode: "0700"

owner: root

- name: Nginx-proxy | Write nginx-proxy configuration

template:

src: "loadbalancer/nginx.conf.j2"

dest: "{{ nginx_config_dir }}/nginx.conf"

owner: root

mode: "0755"

backup: true

- name: Nginx-proxy | Get checksum from config

stat:

path: "{{ nginx_config_dir }}/nginx.conf"

get_attributes: false

get_checksum: true

get_mime: false

register: nginx_stat

- name: Nginx-proxy | Write static pod

template:

src: manifests/nginx-proxy.manifest.j2

dest: "{{ kube_manifest_dir }}/nginx-proxy.yml"

mode: "0640"

# nginx.conf Jinja2 template file

root@admin-lb:~/kubespray# cat roles/kubernetes/node/templates/loadbalancer/nginx.conf.j2

error_log stderr notice;

worker_processes 2;

worker_rlimit_nofile 130048;

worker_shutdown_timeout 10s;

events {

multi_accept on;

use epoll;

worker_connections 16384;

}

stream {

upstream kube_apiserver {

least_conn;

{% for host in groups['kube_control_plane'] -%}

server {{ hostvars[host]['main_access_ip'] | ansible.utils.ipwrap }}:{{ kube_apiserver_port }};

{% endfor -%}

}

server {

listen 127.0.0.1:{{ loadbalancer_apiserver_port|default(kube_apiserver_port) }};

{% if ipv6_stack -%}

listen [::1]:{{ loadbalancer_apiserver_port|default(kube_apiserver_port) }};

{% endif -%}

proxy_pass kube_apiserver;

proxy_timeout 10m;

proxy_connect_timeout 1s;

}

}

http {

aio threads;

aio_write on;

tcp_nopush on;

tcp_nodelay on;

keepalive_timeout {{ loadbalancer_apiserver_keepalive_timeout }};

keepalive_requests 100;

reset_timedout_connection on;

server_tokens off;

autoindex off;

{% if loadbalancer_apiserver_healthcheck_port is defined -%}

server {

listen {{ loadbalancer_apiserver_healthcheck_port }};

{% if ipv6_stack -%}

listen [::]:{{ loadbalancer_apiserver_healthcheck_port }};

{% endif -%}

location /healthz {

access_log off;

return 200;

}

location /stub_status {

stub_status on;

access_log off;

}

}

{% endif %}

}
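The Jinja2 loop emits one `server` line per `kube_control_plane` host, using `ansible.utils.ipwrap` so that IPv6 addresses get square brackets. A plain-Python rendering of the same `upstream` block, as an illustration rather than Kubespray code; the IPs are the ones that appear in the rendered nginx.conf above, and `ipwrap`/`render_upstream` are hypothetical stand-ins.

```python
import ipaddress


def ipwrap(value):
    """Wrap IPv6 literals in brackets; leave IPv4 addresses and names alone."""
    try:
        if ipaddress.ip_address(value).version == 6:
            return f"[{value}]"
    except ValueError:
        pass  # not an IP literal, e.g. a hostname
    return value


def render_upstream(control_plane_ips, port=6443):
    """Build the stream upstream block that the template loop produces."""
    lines = ["upstream kube_apiserver {", "    least_conn;"]
    lines += [f"    server {ipwrap(ip)}:{port};" for ip in control_plane_ips]
    lines.append("}")
    return "\n".join(lines)


print(render_upstream(["192.168.10.11", "192.168.10.12", "192.168.10.13"]))
```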

# Check the nginx static pod manifest file

root@admin-lb:~/kubespray# cat roles/kubernetes/node/templates/manifests/nginx-proxy.manifest.j2

apiVersion: v1

kind: Pod

metadata:

name: {{ loadbalancer_apiserver_pod_name }}

namespace: kube-system

labels:

addonmanager.kubernetes.io/mode: Reconcile

k8s-app: kube-nginx

annotations:

nginx-cfg-checksum: "{{ nginx_stat.stat.checksum }}"

spec:

hostNetwork: true

dnsPolicy: ClusterFirstWithHostNet

nodeSelector:

kubernetes.io/os: linux

priorityClassName: system-node-critical

containers:

- name: nginx-proxy

image: {{ nginx_image_repo }}:{{ nginx_image_tag }}

imagePullPolicy: {{ k8s_image_pull_policy }}

resources:

requests:

cpu: {{ loadbalancer_apiserver_cpu_requests }}

memory: {{ loadbalancer_apiserver_memory_requests }}

{% if loadbalancer_apiserver_healthcheck_port is defined -%}

livenessProbe:

httpGet:

path: /healthz

port: {{ loadbalancer_apiserver_healthcheck_port }}

readinessProbe:

httpGet:

path: /healthz

port: {{ loadbalancer_apiserver_healthcheck_port }}

{% endif -%}

volumeMounts:

- mountPath: /etc/nginx

name: etc-nginx

readOnly: true

volumes:

- name: etc-nginx

hostPath:

path: {{ nginx_config_dir }}
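The task registers `nginx_stat` with `get_checksum: true`, and the manifest copies `nginx_stat.stat.checksum` into the `nginx-cfg-checksum` annotation. The likely intent: nginx.conf is only mounted through `hostPath`, so editing it alone would not restart nginx, but a new checksum changes the static pod manifest, and the kubelet recreates the pod. A sketch of computing that value, assuming the `stat` module's default SHA-1 algorithm; `config_checksum` and `render_annotation` are hypothetical.

```python
import hashlib


def config_checksum(config_bytes):
    """SHA-1 hex digest (assumed to match Ansible stat's default algorithm)."""
    return hashlib.sha1(config_bytes).hexdigest()


def render_annotation(config_bytes):
    """Annotation line as the manifest template would render it."""
    return f'nginx-cfg-checksum: "{config_checksum(config_bytes)}"'


old_conf = b"upstream kube_apiserver {\n    least_conn;\n}\n"
new_conf = old_conf.replace(b"least_conn;", b"random;")
print(render_annotation(old_conf))
print(render_annotation(new_conf))  # differs -> new manifest -> kubelet recreates the pod
```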

# Fix the alerts in the nginx log: apply with --tags "containerd"

root@admin-lb:~/kubespray# kubectl logs -n kube-system nginx-proxy-k8s-node4

/docker-entrypoint.sh: /docker-entrypoint.d/ is not empty, will attempt to perform configuration

/docker-entrypoint.sh: Looking for shell scripts in /docker-entrypoint.d/

/docker-entrypoint.sh: Launching /docker-entrypoint.d/10-listen-on-ipv6-by-default.sh

10-listen-on-ipv6-by-default.sh: info: /etc/nginx/conf.d/default.conf is not a file or does not exist

/docker-entrypoint.sh: Sourcing /docker-entrypoint.d/15-local-resolvers.envsh

/docker-entrypoint.sh: Launching /docker-entrypoint.d/20-envsubst-on-templates.sh

/docker-entrypoint.sh: Launching /docker-entrypoint.d/30-tune-worker-processes.sh

/docker-entrypoint.sh: Configuration complete; ready for start up

2026/02/07 11:09:10 [notice] 1#1: using the "epoll" event method

2026/02/07 11:09:10 [notice] 1#1: nginx/1.28.0

2026/02/07 11:09:10 [notice] 1#1: built by gcc 14.2.0 (Alpine 14.2.0)

2026/02/07 11:09:10 [notice] 1#1: OS: Linux 6.12.0-55.39.1.el10_0.aarch64

2026/02/07 11:09:10 [notice] 1#1: getrlimit(RLIMIT_NOFILE): 65535:65535

2026/02/07 11:09:10 [notice] 1#1: start worker processes

2026/02/07 11:09:10 [notice] 1#1: start worker process 20

2026/02/07 11:09:10 [notice] 1#1: start worker process 21

2026/02/07 11:09:10 [alert] 20#20: setrlimit(RLIMIT_NOFILE, 130048) failed (1: Operation not permitted)

2026/02/07 11:09:10 [alert] 21#21: setrlimit(RLIMIT_NOFILE, 130048) failed (1: Operation not permitted)

2026/02/07 11:10:15 [error] 20#20: *49 recv() failed (104: Connection reset by peer) while proxying and reading from upstream, client: 127.0.0.1, server: 127.0.0.1:6443, upstream: "192.168.10.13:6443", bytes from/to client:1489/0, bytes from/to upstream:0/1489

root@admin-lb:~/kubespray# ssh k8s-node4 cat /etc/nginx/nginx.conf

error_log stderr notice;

worker_processes 2;

worker_rlimit_nofile 130048;

worker_shutdown_timeout 10s;

events {

multi_accept on;

use epoll;

worker_connections 16384;

}

stream {

upstream kube_apiserver {

least_conn;

server 192.168.10.11:6443;

server 192.168.10.12:6443;

server 192.168.10.13:6443;

}

server {

listen 127.0.0.1:6443;

proxy_pass kube_apiserver;

proxy_timeout 10m;

proxy_connect_timeout 1s;

}

}

http {

aio threads;

aio_write on;

tcp_nopush on;

tcp_nodelay on;

keepalive_timeout 5m;

keepalive_requests 100;

reset_timedout_connection on;

server_tokens off;

autoindex off;

server {

listen 8081;

location /healthz {

access_log off;

return 200;

}

location /stub_status {

stub_status on;

access_log off;

}

}

}
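The `stream` block above is what makes worker client-side load balancing work. Every client on k8s-node4 talks to 127.0.0.1:6443, and nginx forwards each new connection to one of the three kube-apiservers using `least_conn`. Per the nginx documentation, `least_conn` sends a connection to the server with the fewest active connections, taking weights into account. With `proxy_connect_timeout 1s` and the stream module's default `proxy_next_upstream on`, a connection attempt to a powered-off backend gives up after about a second and moves on to another server.

The sketch below only models the selection rule. The function name, the `up`/`down` column, and the connection counts are made-up inputs, and weights and round-robin among ties are ignored.

```shell
#!/bin/sh
# Model of nginx's least_conn choice (sketch: ignores weights and round-robin among ties).
# Input on stdin, one upstream per line: "<server> <active_connections> [up|down]".
# Prints the reachable server with the fewest active connections; exits 1 if none is up.
pick_least_conn() {
  awk '
    $3 != "down" && (!found || $2 + 0 < min) { min = $2 + 0; best = $1; found = 1 }
    END { if (found) print best; else exit 1 }
  '
}

# Example: k8s-node1 powered off, and node3 has fewer connections than node2.
# printf '%s\n' '192.168.10.11:6443 0 down' '192.168.10.12:6443 4 up' \
#   '192.168.10.13:6443 2 up' | pick_least_conn
```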

root@admin-lb:~/kubespray# ssh k8s-node4 cat /etc/containerd/config.toml | grep base_runtime_spec

base_runtime_spec = "/etc/containerd/cri-base.json"

root@admin-lb:~/kubespray# ssh k8s-node4 cat /etc/containerd/cri-base.json | jq | grep rlimits -A 6

"rlimits": [

{

"type": "RLIMIT_NOFILE",

"hard": 65535,

"soft": 65535

}

],
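This explains the `[alert]` lines in the nginx-proxy log. nginx.conf asks for `worker_rlimit_nofile 130048`, but cri-base.json pins the container's RLIMIT_NOFILE to 65535 (hard and soft), which matches `getrlimit(RLIMIT_NOFILE): 65535:65535` in the log. Per setrlimit(2), a process without CAP_SYS_RESOURCE cannot raise its hard limit. The call therefore fails with EPERM ("Operation not permitted"), and the workers keep the 65535 limit.

A small helper to check the same condition from a shell follows. The function name is hypothetical.

```shell
#!/bin/sh
# nofile_check WANTED [HARD]
# Reports whether RLIMIT_NOFILE could be set to WANTED without CAP_SYS_RESOURCE.
# HARD defaults to the current shell's hard limit (ulimit -Hn).
nofile_check() {
  wanted="$1"
  hard="${2:-$(ulimit -Hn)}"
  if [ "$hard" = "unlimited" ] || [ "$wanted" -le "$hard" ]; then
    echo "ok: $wanted <= hard limit $hard"
  else
    echo "EPERM: $wanted > hard limit $hard (raising it needs CAP_SYS_RESOURCE)"
  fi
}

# Values from this page: nginx.conf worker_rlimit_nofile vs. the cri-base.json hard limit.
# nofile_check 130048 65535
```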

# Check the related variable names

root@admin-lb:~/kubespray# cat roles/container-engine/containerd/defaults/main.yml

...

containerd_base_runtime_spec_rlimit_nofile: 65535

containerd_default_base_runtime_spec_patch:
  process:
    rlimits:
      - type: RLIMIT_NOFILE
        hard: "{{ containerd_base_runtime_spec_rlimit_nofile }}"
        soft: "{{ containerd_base_runtime_spec_rlimit_nofile }}"

# Patch the default OCI spec (runtime spec): override rlimits with an empty list

root@admin-lb:~/kubespray# cat << EOF >> inventory/mycluster/group_vars/all/containerd.yml
containerd_default_base_runtime_spec_patch:
  process:
    rlimits: []
EOF

root@admin-lb:~/kubespray# grep "^[^#]" inventory/mycluster/group_vars/all/containerd.yml

---

containerd_default_base_runtime_spec_patch:

  process:

    rlimits: []
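The patch only helps once the regenerated /etc/containerd/cri-base.json is on the node (and, presumably, containerd has reloaded it) and the nginx-proxy container has been recreated from it. Note that the nginx-proxy log further down still reports `65535:65535`. Before recreating the sandbox, it may be worth confirming what the node's base spec actually contains.

The check below avoids jq. It assumes the kubespray path shown earlier, and the helper name is hypothetical.

```shell
#!/bin/sh
# nofile_rlimit_in_spec FILE
# Prints "present" if the OCI base spec still pins RLIMIT_NOFILE, "absent" otherwise.
# Plain grep instead of jq: it only looks for the literal type string anywhere in the file.
nofile_rlimit_in_spec() {
  if grep -q '"RLIMIT_NOFILE"' "$1"; then
    echo present
  else
    echo absent
  fi
}

# On admin-lb, against k8s-node4 (path as shown earlier on this page):
# ssh k8s-node4 cat /etc/containerd/cri-base.json > /tmp/cri-base.json
# nofile_rlimit_in_spec /tmp/cri-base.json
```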

# (new terminal) Monitoring

root@k8s-node4:~# crictl pods --namespace kube-system --name 'nginx-proxy-*' -q | xargs crictl rmp -f

Stopped sandbox 0529e6ee0ed270e3c19c8d4b8f35387291e172e3ffbd64987e6a3707b0e221e8

Removed sandbox 0529e6ee0ed270e3c19c8d4b8f35387291e172e3ffbd64987e6a3707b0e221e8

root@k8s-node4:~# ssh k8s-node4 crictl inspect --name nginx-proxy | grep rlimits -A6

root@k8s-node4's password:

"rlimits": [

{

"hard": 65535,

"soft": 65535,

"type": "RLIMIT_NOFILE"

}

],

root@admin-lb:~/kubespray# kubectl logs -n kube-system nginx-proxy-k8s-node4 -f

/docker-entrypoint.sh: /docker-entrypoint.d/ is not empty, will attempt to perform configuration

/docker-entrypoint.sh: Looking for shell scripts in /docker-entrypoint.d/

/docker-entrypoint.sh: Launching /docker-entrypoint.d/10-listen-on-ipv6-by-default.sh

10-listen-on-ipv6-by-default.sh: info: /etc/nginx/conf.d/default.conf is not a file or does not exist

/docker-entrypoint.sh: Sourcing /docker-entrypoint.d/15-local-resolvers.envsh

/docker-entrypoint.sh: Launching /docker-entrypoint.d/20-envsubst-on-templates.sh

/docker-entrypoint.sh: Launching /docker-entrypoint.d/30-tune-worker-processes.sh

/docker-entrypoint.sh: Configuration complete; ready for start up

2026/02/07 13:30:02 [notice] 1#1: using the "epoll" event method

2026/02/07 13:30:02 [notice] 1#1: nginx/1.28.0

2026/02/07 13:30:02 [notice] 1#1: built by gcc 14.2.0 (Alpine 14.2.0)

2026/02/07 13:30:02 [notice] 1#1: OS: Linux 6.12.0-55.39.1.el10_0.aarch64

2026/02/07 13:30:02 [notice] 1#1: getrlimit(RLIMIT_NOFILE): 65535:65535

2026/02/07 13:30:02 [notice] 1#1: start worker processes

2026/02/07 13:30:02 [notice] 1#1: start worker process 20

2026/02/07 13:30:02 [alert] 20#20: setrlimit(RLIMIT_NOFILE, 130048) failed (1: Operation not permitted)

2026/02/07 13:30:02 [notice] 1#1: start worker process 21

[Case2] External LB → HA control plane nodes (3) + (Worker Client-Side LoadBalancing)

# Confirm that the apiserver static pod's bind-address is '::'

root@admin-lb:~/kubespray# kubectl describe pod -n kube-system kube-apiserver-k8s-node1 | grep -E 'address|secure-port'

Annotations: kubeadm.kubernetes.io/kube-apiserver.advertise-address.endpoint: 192.168.10.11:6443

--advertise-address=192.168.10.11

--bind-address=::

--kubelet-preferred-address-types=InternalDNS,InternalIP,Hostname,ExternalDNS,ExternalIP

--secure-port=6443

root@admin-lb:~/kubespray# ssh k8s-node1 ss -tnlp | grep 6443

LISTEN 0 4096 *:6443 *:* users:(("kube-apiserver",pid=25837,fd=3))


# Check the endpoint the admin credential (client) uses to call the api-server

root@admin-lb:~/kubespray# ssh k8s-node1 cat /etc/kubernetes/admin.conf | grep server

server: https://127.0.0.1:6443

# Check the endpoint the super-admin credential (client) uses to call the api-server

root@admin-lb:~/kubespray# ssh k8s-node1 cat /etc/kubernetes/super-admin.conf | grep server

server: https://192.168.10.11:6443

# Check the endpoint kubelet (client) uses to call the api-server: https://127.0.0.1:6443

root@admin-lb:~/kubespray# ssh k8s-node1 cat /etc/kubernetes/kubelet.conf | grep server

server: https://127.0.0.1:6443

# Check the endpoint kube-proxy (client) uses to call the api-server

root@admin-lb:~/kubespray# k get cm -n kube-system kube-proxy -o yaml | grep server

server: https://127.0.0.1:6443

# Check the endpoint kube-controller-manager (client) uses to call the api-server

root@admin-lb:~/kubespray# ssh k8s-node1 cat /etc/kubernetes/controller-manager.conf | grep server

server: https://127.0.0.1:6443

# Check the endpoint kube-scheduler (client) uses to call the api-server

root@admin-lb:~/kubespray# ssh k8s-node1 cat /etc/kubernetes/scheduler.conf | grep server

server: https://127.0.0.1:6443

Pods deployed by DaemonSets that run in the host network, such as Cilium, can also use the same local endpoint, 127.0.0.1:6443, to call the k8s API.
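Every in-cluster component checked above points at https://127.0.0.1:6443. On control plane nodes, that address is the node's own kube-apiserver (bound to `::`). On worker nodes, it is the nginx-proxy static pod. Only super-admin.conf pins a specific node IP.

A small pair of helpers can audit this in one pass. The helper names are hypothetical, and the file list in the usage comment is the one checked above.

```shell
#!/bin/sh
# kubeconfig_server FILE: print the first "server:" URL found in a kubeconfig.
kubeconfig_server() {
  awk '$1 == "server:" { print $2; exit }' "$1"
}

# classify_endpoint URL: "local" for loopback endpoints, "remote" for anything else
# (a node IP or an external LB address).
classify_endpoint() {
  case "$1" in
    https://127.0.0.1:*|https://localhost:*|'https://[::1]:'*) echo local ;;
    *) echo remote ;;
  esac
}

# Usage on a control plane node:
# for f in admin.conf super-admin.conf kubelet.conf controller-manager.conf scheduler.conf; do
#   url=$(kubeconfig_server "/etc/kubernetes/$f")
#   echo "$f $url $(classify_endpoint "$url")"
# done
```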

# Install kube-ops-view

root@admin-lb:~/kubespray# helm repo add geek-cookbook https://geek-cookbook.github.io/charts/

"geek-cookbook" has been added to your repositories

root@admin-lb:~/kubespray# helm install kube-ops-view geek-cookbook/kube-ops-view --version 1.2.2 \

--set service.main.type=NodePort,service.main.ports.http.nodePort=30000 \

--set env.TZ="Asia/Seoul" --namespace kube-system \

--set image.repository="abihf/kube-ops-view" --set image.tag="latest"

NAME: kube-ops-view

LAST DEPLOYED: Sat Feb 7 22:39:40 2026

NAMESPACE: kube-system

STATUS: deployed

REVISION: 1

TEST SUITE: None

NOTES:

1. Get the application URL by running these commands:

export NODE_PORT=$(kubectl get --namespace kube-system -o jsonpath="{.spec.ports[0].nodePort}" services kube-ops-view)

export NODE_IP=$(kubectl get nodes --namespace kube-system -o jsonpath="{.items[0].status.addresses[0].address}")

echo http://$NODE_IP:$NODE_PORT

root@admin-lb:~/kubespray# kubectl get deploy,pod,svc,ep -n kube-system -l app.kubernetes.io/instance=kube-ops-view

NAME READY UP-TO-DATE AVAILABLE AGE

deployment.apps/kube-ops-view 0/1 1 0 7s

NAME READY STATUS RESTARTS AGE

pod/kube-ops-view-8484bdc5df-zz47d 0/1 ContainerCreating 0 7s

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

service/kube-ops-view NodePort 10.233.49.26 <none> 8080:30000/TCP 7s

NAME ENDPOINTS AGE

endpoints/kube-ops-view <none> 7s

# Deploy a sample application and call it repeatedly

root@admin-lb:~/kubespray# cat << EOF | kubectl apply -f -
apiVersion: apps/v1
kind: Deployment
metadata:
  name: webpod
spec:
  replicas: 2
  selector:
    matchLabels:
      app: webpod
  template:
    metadata:
      labels:
        app: webpod
    spec:
      affinity:
        podAntiAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
          - labelSelector:
              matchExpressions:
              - key: app
                operator: In
                values:
                # note: matches 'sample-app', not this Deployment's own 'app: webpod' label,
                # so this rule does not spread the webpod replicas across nodes
                - sample-app
            topologyKey: "kubernetes.io/hostname"
      containers:
      - name: webpod
        image: traefik/whoami
        ports:
        - containerPort: 80
---
apiVersion: v1
kind: Service
metadata:
  name: webpod
  labels:
    app: webpod
spec:
  selector:
    app: webpod
  ports:
  - port: 80
    targetPort: 80
    nodePort: 30003
  type: NodePort
EOF

deployment.apps/webpod created

service/webpod created

# Verify the deployment

root@admin-lb:~/kubespray# kubectl get deploy,svc,ep webpod -owide

NAME READY UP-TO-DATE AVAILABLE AGE CONTAINERS IMAGES SELECTOR

deployment.apps/webpod 2/2 2 2 28s webpod traefik/whoami app=webpod

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE SELECTOR

service/webpod NodePort 10.233.11.3 <none> 80:30003/TCP 27s app=webpod

NAME ENDPOINTS AGE

endpoints/webpod 10.233.67.5:80,10.233.67.6:80 28s

[admin-lb] # Adjust the IP to match the node you are testing against

root@admin-lb:~/kubespray# while true; do curl -s http://192.168.10.14:30003 | grep Hostname; sleep 1; done

Hostname: webpod-697b545f57-srd2b

Hostname: webpod-697b545f57-srd2b

Hostname: webpod-697b545f57-srd2b

Hostname: webpod-697b545f57-srd2b
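The loop above only shows a stream of Hostname lines. To see how requests are spread over the two webpod replicas, pipe a bounded run through a small counter. This is a sketch: the function name is hypothetical, and the 20-request count is arbitrary.

```shell
#!/bin/sh
# tally_hostnames: count "Hostname: <pod>" lines from traefik/whoami responses on stdin,
# most frequent pod first.
tally_hostnames() {
  awk '$1 == "Hostname:" { print $2 }' | sort | uniq -c | sort -rn
}

# Usage from admin-lb (NodePort 30003 on k8s-node4, as above):
# i=0; while [ "$i" -lt 20 ]; do curl -s http://192.168.10.14:30003; i=$((i + 1)); done | tally_hostnames
```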

# Success

root@admin-lb:~/kubespray# ssh k8s-node1 curl -s webpod -I

HTTP/1.1 200 OK

Date: Sat, 07 Feb 2026 13:41:49 GMT

Content-Length: 202

Content-Type: text/plain; charset=utf-8

root@admin-lb:~/kubespray# ssh k8s-node1 curl -s webpod.default -I

HTTP/1.1 200 OK

Date: Sat, 07 Feb 2026 13:42:09 GMT

Content-Length: 210

Content-Type: text/plain; charset=utf-8

# Failure

root@admin-lb:~/kubespray# ssh k8s-node1 curl -s webpod.default.svc -I

root@admin-lb:~/kubespray# ssh k8s-node1 curl -s webpod.default.svc.cluster -I

# Failure reproduction: impact when control plane node 1 fails

# [admin-lb] Check the server endpoint used by the kubeconfig credential

root@admin-lb:~/kubespray# cat /root/.kube/config | grep server

server: https://192.168.10.11:6443

## [k8s-node2]

Every 2.0s: kubectl get pod -n kube-system k8s-node2: Sat Feb 7 22:44:20 2026

NAME READY STATUS RESTARTS AGE

coredns-664b99d7c7-pztdv 1/1 Running 0 154m

coredns-664b99d7c7-r4nzz 1/1 Running 0 154m

kube-apiserver-k8s-node1 1/1 Running 1 155m

kube-apiserver-k8s-node2 1/1 Running 1 155m

kube-apiserver-k8s-node3 1/1 Running 1 155m

kube-controller-manager-k8s-node1 1/1 Running 2 155m

kube-controller-manager-k8s-node2 1/1 Running 2 155m

kube-controller-manager-k8s-node3 1/1 Running 2 155m

kube-flannel-ds-arm64-2mwzk 1/1 Running 0 154m

kube-flannel-ds-arm64-7cvdk 1/1 Running 0 154m

kube-flannel-ds-arm64-fss94 1/1 Running 0 154m

kube-flannel-ds-arm64-p87lw 1/1 Running 0 154m

kube-ops-view-8484bdc5df-zz47d 1/1 Running 0 4m46s

kube-proxy-8pjq2 1/1 Running 0 155m

kube-proxy-bfbbn 1/1 Running 0 155m

kube-proxy-ngmpt 1/1 Running 0 155m

kube-proxy-twxh7 1/1 Running 0 155m

kube-scheduler-k8s-node1 1/1 Running 1 155m

kube-scheduler-k8s-node2 1/1 Running 1 155m

kube-scheduler-k8s-node3 1/1 Running 1 155m

metrics-server-65fdf69dcb-22c5b 1/1 Running 0 154m

nginx-proxy-k8s-node4 1/1 Running 1 155m

root@k8s-node1:~# poweroff

Every 2.0s: kubectl get pod -n kube-system k8s-node2: Sat Feb 7 22:49:57 2026

NAME READY STATUS RESTARTS AGE

coredns-664b99d7c7-pztdv 1/1 Running 0 160m

coredns-664b99d7c7-r4nzz 1/1 Running 0 160m

kube-apiserver-k8s-node1 1/1 Terminated 1 161m

kube-apiserver-k8s-node2 1/1 Running 1 161m

kube-apiserver-k8s-node3 1/1 Running 1 161m

kube-controller-manager-k8s-node1 1/1 Terminated 2 161m

kube-controller-manager-k8s-node2 1/1 Running 2 161m

kube-controller-manager-k8s-node3 1/1 Running 2 161m

kube-flannel-ds-arm64-2mwzk 1/1 Running 0 160m

kube-flannel-ds-arm64-7cvdk 1/1 Running 0 160m

kube-flannel-ds-arm64-fss94 1/1 Running 0 160m

kube-flannel-ds-arm64-p87lw 1/1 Running 0 160m

kube-ops-view-8484bdc5df-zz47d 1/1 Running 0 10m

kube-proxy-8pjq2 1/1 Running 0 160m

root@k8s-node2:~# kubectl logs -n kube-system nginx-proxy-k8s-node4 -f

/docker-entrypoint.sh: /docker-entrypoint.d/ is not empty, will attempt to perform configuration

/docker-entrypoint.sh: Looking for shell scripts in /docker-entrypoint.d/

/docker-entrypoint.sh: Launching /docker-entrypoint.d/10-listen-on-ipv6-by-default.sh

10-listen-on-ipv6-by-default.sh: info: /etc/nginx/conf.d/default.conf is not a file or does not exist

/docker-entrypoint.sh: Sourcing /docker-entrypoint.d/15-local-resolvers.envsh

/docker-entrypoint.sh: Launching /docker-entrypoint.d/20-envsubst-on-templates.sh

/docker-entrypoint.sh: Launching /docker-entrypoint.d/30-tune-worker-processes.sh

/docker-entrypoint.sh: Configuration complete; ready for start up

2026/02/07 13:30:02 [notice] 1#1: using the "epoll" event method

2026/02/07 13:30:02 [notice] 1#1: nginx/1.28.0

2026/02/07 13:30:02 [notice] 1#1: built by gcc 14.2.0 (Alpine 14.2.0)

2026/02/07 13:30:02 [notice] 1#1: OS: Linux 6.12.0-55.39.1.el10_0.aarch64

2026/02/07 13:30:02 [notice] 1#1: getrlimit(RLIMIT_NOFILE): 65535:65535

2026/02/07 13:30:02 [notice] 1#1: start worker processes

2026/02/07 13:30:02 [notice] 1#1: start worker process 20

2026/02/07 13:30:02 [alert] 20#20: setrlimit(RLIMIT_NOFILE, 130048) failed (1: Operation not permitted)

2026/02/07 13:30:02 [notice] 1#1: start worker process 21

2026/02/07 13:30:02 [alert] 21#21: setrlimit(RLIMIT_NOFILE, 130048) failed (1: Operation not permitted)

# [k8s-node4] The requests below are still handled normally, because the two remaining backend servers are available!

root@k8s-node4:~# while true; do curl -sk https://127.0.0.1:6443/version | grep gitVersion ; date; sleep 1; echo ; done

"gitVersion": "v1.32.9",

Sat Feb 7 10:50:54 PM KST 2026

"gitVersion": "v1.32.9",

Sat Feb 7 10:50:55 PM KST 2026

"gitVersion": "v1.32.9",

Sat Feb 7 10:50:56 PM KST 2026

# [admin-lb] The server address in the credential below must be changed

root@admin-lb:~# while true; do kubectl get node ; echo ; curl -sk https://192.168.10.12:6443/version | grep gitVersion ; sleep 1; echo ; done

Unable to connect to the server: dial tcp 192.168.10.11:6443: connect: no route to host

"gitVersion": "v1.32.9",

root@admin-lb:~# sed -i 's/192.168.10.11/192.168.10.12/g' /root/.kube/config

root@admin-lb:~# while true; do kubectl get node ; echo ; curl -sk https://192.168.10.12:6443/version | grep gitVersion ; sleep 1; echo ; done

NAME STATUS ROLES AGE VERSION

k8s-node1 NotReady control-plane 164m v1.32.9

k8s-node2 Ready control-plane 164m v1.32.9

k8s-node3 Ready control-plane 164m v1.32.9

k8s-node4 Ready <none> 163m v1.32.9

"gitVersion": "v1.32.9",
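On k8s-node4, nginx-proxy hid the outage. admin-lb's kubeconfig, however, pointed straight at 192.168.10.11, so it had to be edited by hand. Until an external LB is in place, one stopgap is to probe the control plane nodes in order and use the first one that answers.

This is a sketch. The function names are hypothetical. The probe relies on `/version` being reachable without credentials, as the curl loops on this page show.

```shell
#!/bin/sh
# first_healthy PROBE HOST...: print the first HOST for which "PROBE HOST" succeeds.
first_healthy() {
  probe="$1"
  shift
  for host in "$@"; do
    if "$probe" "$host" >/dev/null 2>&1; then
      echo "$host"
      return 0
    fi
  done
  return 1
}

# apiserver_up HOST: -k skips TLS verification (as in the curl loops above), and
# --max-time keeps a powered-off node from stalling the loop.
apiserver_up() {
  curl -sk --max-time 2 "https://$1:6443/version" | grep -q gitVersion
}

# Usage on admin-lb, rewriting the server IP the same way the sed commands on this page do:
# ep=$(first_healthy apiserver_up 192.168.10.11 192.168.10.12 192.168.10.13) &&
#   sed -i "s#https://[0-9.]*:6443#https://$ep:6443#" /root/.kube/config
```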

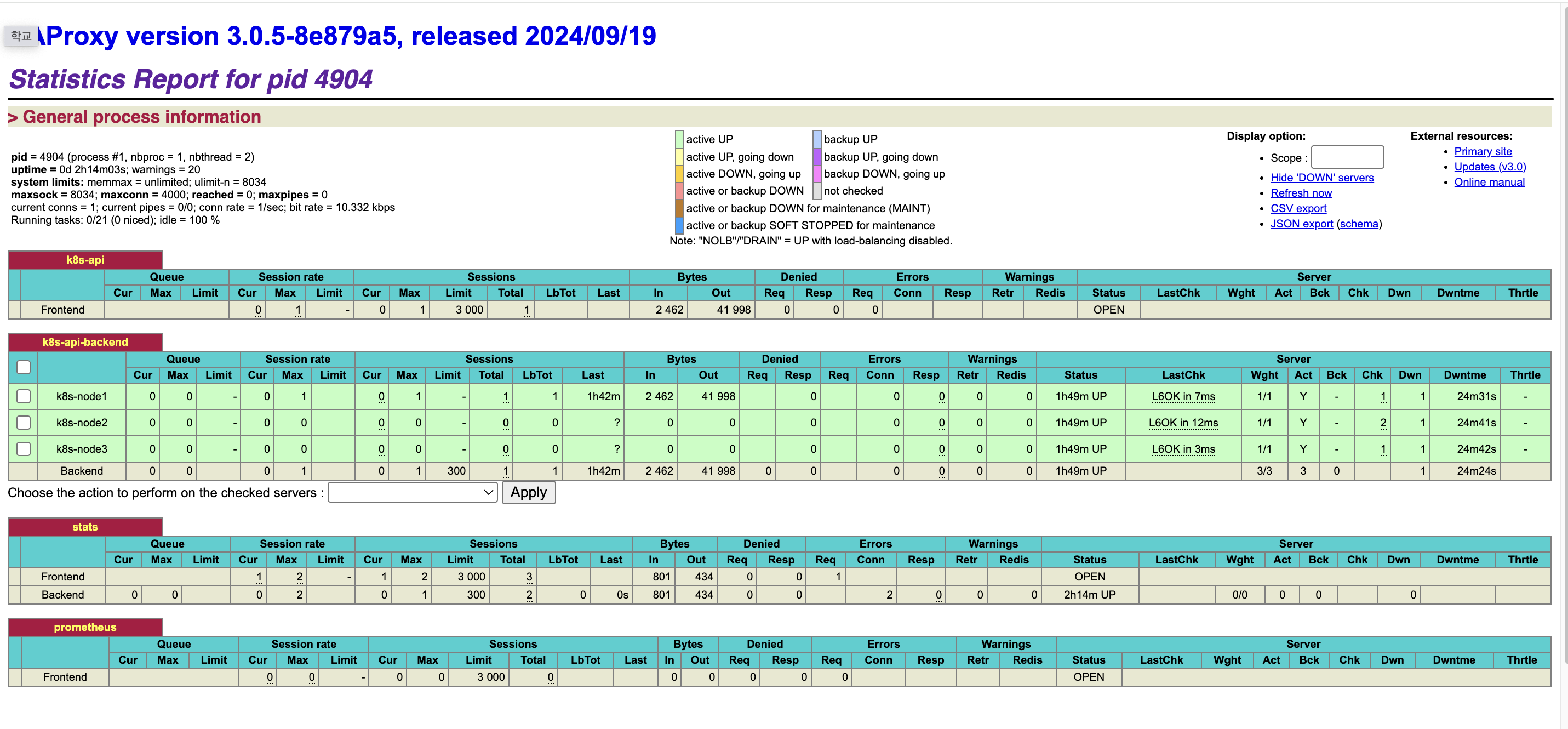

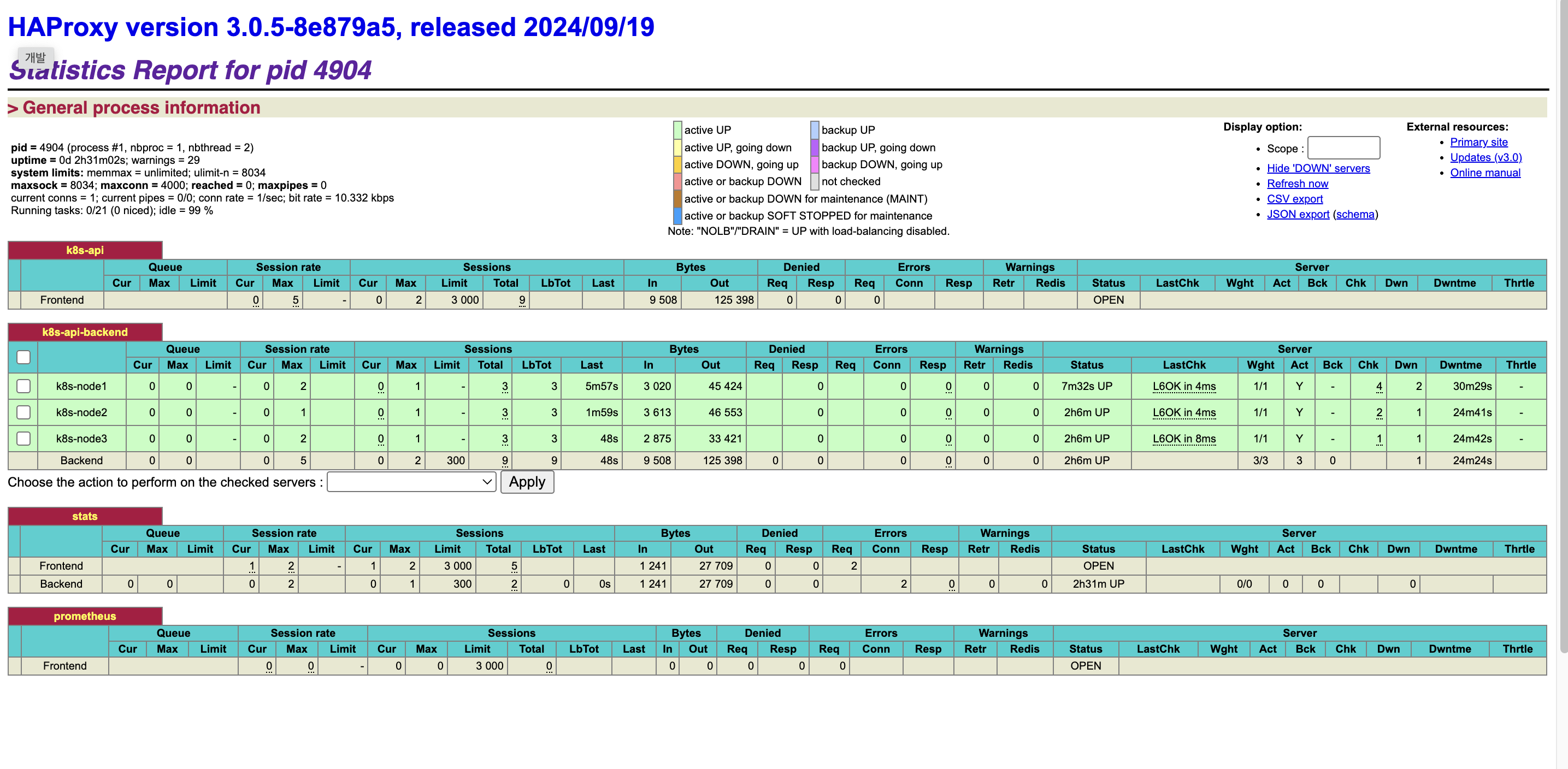

External LB → HA control plane nodes (3): configuring k8s apiserver calls

root@admin-lb:~/kubespray# curl -sk https://192.168.10.10:6443/version | grep gitVersion

"gitVersion": "v1.32.9",

root@admin-lb:~/kubespray# sed -i 's/192.168.10.12/192.168.10.10/g' /root/.kube/config

# kubectl fails: 192.168.10.10 is not in the certificate's SAN list

root@admin-lb:~/kubespray# kubectl get node

E0207 22:57:29.198183 17989 memcache.go:265] "Unhandled Error" err="couldn't get current server API group list: Get \"https://192.168.10.10:6443/api?timeout=32s\": tls: failed to verify certificate: x509: certificate is valid for 10.233.0.1, 192.168.10.13, 192.168.10.11, 127.0.0.1, ::1, 192.168.10.12, 10.0.2.15, fd17:625c:f037:2:a00:27ff:fe90:eaeb, not 192.168.10.10"

E0207 22:57:29.207284 17989 memcache.go:265] "Unhandled Error" err="couldn't get current server API group list: Get \"https://192.168.10.10:6443/api?timeout=32s\": tls: failed to verify certificate: x509: certificate is valid for 10.233.0.1, 192.168.10.11, 127.0.0.1, ::1, 192.168.10.12, 192.168.10.13, 10.0.2.15, fd17:625c:f037:2:a00:27ff:fe90:eaeb, not 192.168.10.10"

E0207 22:57:29.217142 17989 memcache.go:265] "Unhandled Error" err="couldn't get current server API group list: Get \"https://192.168.10.10:6443/api?timeout=32s\": tls: failed to verify certificate: x509: certificate is valid for 10.233.0.1, 192.168.10.12, 192.168.10.11, 127.0.0.1, ::1, 192.168.10.13, 10.0.2.15, fd17:625c:f037:2:a00:27ff:fe90:eaeb, not 192.168.10.10"

E0207 22:57:29.226689 17989 memcache.go:265] "Unhandled Error" err="couldn't get current server API group list: Get \"https://192.168.10.10:6443/api?timeout=32s\": tls: failed to verify certificate: x509: certificate is valid for 10.233.0.1, 192.168.10.13, 192.168.10.11, 127.0.0.1, ::1, 192.168.10.12, 10.0.2.15, fd17:625c:f037:2:a00:27ff:fe90:eaeb, not 192.168.10.10"

E0207 22:57:29.247206 17989 memcache.go:265] "Unhandled Error" err="couldn't get current server API group list: Get \"https://192.168.10.10:6443/api?timeout=32s\": tls: failed to verify certificate: x509: certificate is valid for 10.233.0.1, 192.168.10.11, 127.0.0.1, ::1, 192.168.10.12, 192.168.10.13, 10.0.2.15, fd17:625c:f037:2:a00:27ff:fe90:eaeb, not 192.168.10.10"

Unable to connect to the server: tls: failed to verify certificate: x509: certificate is valid for 10.233.0.1, 192.168.10.11, 127.0.0.1, ::1, 192.168.10.12, 192.168.10.13, 10.0.2.15, fd17:625c:f037:2:a00:27ff:fe90:eaeb, not 192.168.10.10

# Check the SAN entries in the certificate

root@admin-lb:~/kubespray# ssh k8s-node1 cat /etc/kubernetes/ssl/apiserver.crt | openssl x509 -text -noout

Certificate:

Data:

Version: 3 (0x2)

Serial Number: 6668381285306049874 (0x5c8ad972c987a552)

Signature Algorithm: sha256WithRSAEncryption

Issuer: CN=kubernetes

Validity

Not Before: Feb 7 11:03:15 2026 GMT

Not After : Feb 7 11:08:15 2027 GMT

Subject: CN=kube-apiserver

Subject Public Key Info:

Public Key Algorithm: rsaEncryption

Public-Key: (2048 bit)

Modulus:

00:ae:4b:04:77:da:b0:1e:83:48:3d:af:6f:9f:54:

58:51:be:f5:6b:cd:5f:ce:0d:7d:b4:41:c2:99:96:

1b:71:07:88:54:0b:f7:0e:b3:96:62:3b:44:4b:0b:

43:95:8a:83:3a:23:66:e5:59:15:93:38:c1:99:a2:

7a:1a:13:16:9d:43:95:20:aa:6e:7f:8d:3f:67:32:

2d:bf:cd:88:9e:96:7b:d4:42:83:87:b8:3d:6f:08:

72:d9:9c:00:ab:b0:43:04:90:d8:f6:6d:59:04:14:

d9:93:49:31:99:0e:d4:49:70:9f:2b:aa:6d:fb:2f:

b3:6f:85:e2:73:66:2f:03:8e:89:8b:d4:c0:63:68:

48:5b:a9:db:e7:18:d3:92:ea:39:47:1c:33:42:ff:

c7:f3:f4:6e:9b:3b:18:01:35:8c:a7:f2:47:cb:20:

80:17:30:4e:e4:79:a6:c6:d2:bb:5c:37:0e:e8:4a:

f9:c0:05:70:f6:ed:f6:cd:9e:22:7d:ed:e4:28:05:

4f:dc:10:8c:6a:41:ee:25:19:42:de:ed:24:65:68:

f1:4a:0e:f9:b7:90:c0:27:e7:fb:9d:99:d7:d6:a1:

ce:cb:08:7a:52:df:8d:fd:6a:89:ae:ff:4e:38:d7:

e4:96:00:1c:91:79:f8:7c:c3:3b:5e:e5:71:06:89:

80:eb

Exponent: 65537 (0x10001)

X509v3 extensions:

X509v3 Key Usage: critical

Digital Signature, Key Encipherment

X509v3 Extended Key Usage:

TLS Web Server Authentication

X509v3 Basic Constraints: critical

CA:FALSE

X509v3 Authority Key Identifier:

C3:2F:41:17:C7:0A:99:FA:49:BC:06:96:EC:3B:FA:E2:02:B9:89:E7

X509v3 Subject Alternative Name:

DNS:k8s-node1, DNS:k8s-node2, DNS:k8s-node3, DNS:kubernetes, DNS:kubernetes.default, DNS:kubernetes.default.svc, DNS:kubernetes.default.svc.cluster.local, DNS:lb-apiserver.kubernetes.local, DNS:localhost, IP Address:10.233.0.1, IP Address:192.168.10.11, IP Address:127.0.0.1, IP Address:0:0:0:0:0:0:0:1, IP Address:192.168.10.12, IP Address:192.168.10.13, IP Address:10.0.2.15, IP Address:FD17:625C:F037:2:A00:27FF:FE90:EAEB

Signature Algorithm: sha256WithRSAEncryption

Signature Value:

43:8b:5d:07:52:cc:da:f5:70:45:14:26:7d:60:42:f0:39:10:

62:e5:fa:35:7f:d5:1c:61:b3:48:68:f0:3b:44:a7:0f:85:a3:

9a:fb:c3:68:35:80:68:f0:19:d3:05:0a:91:b3:dd:c1:7d:2c:

73:36:04:05:9a:52:ac:93:ff:78:fc:56:88:0f:da:4f:4f:55:

93:9e:25:59:d2:22:5b:92:e5:53:2c:7a:f8:96:0e:ee:86:0c:

62:25:e5:1f:b2:47:b3:f6:94:50:f5:a8:5e:c7:ee:e3:1f:98:

8c:9b:91:a4:6a:25:76:ed:5f:96:b4:97:cf:44:e2:1e:15:7e:

69:ee:b7:ec:02:90:1d:a6:f3:6c:96:87:29:e0:41:10:63:44:

01:ee:d6:ed:f0:f5:7b:f2:7b:dc:56:2f:46:eb:8f:11:bb:86:

20:fb:4e:07:2c:d3:eb:2e:4e:fb:e8:63:7f:d2:7d:79:de:d8:

7c:de:01:c0:32:42:db:07:42:72:a8:e7:85:09:9e:30:1a:31:

1d:55:40:a5:ad:ef:e9:bd:f8:d6:fa:76:34:29:9b:dd:34:23:

cd:a0:98:bc:9d:32:09:1d:b5:b5:88:50:a8:b1:0d:aa:a4:a4:

a3:97:b2:2c:c2:36:39:65:4e:f0:df:e8:b6:39:77:19:4f:dd:

8e:1f:f8:24
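kubectl failed because 192.168.10.10 (the external LB) is missing from the Subject Alternative Name list above. The SAN list does include 192.168.10.11-13, 127.0.0.1 and the node names. The earlier `curl -k` to 192.168.10.10 succeeded only because `-k` skips verification.

A small helper can check a name or IP against a SAN list. It assumes the comma-separated format openssl prints (for example via `openssl x509 -noout -ext subjectAltName`, OpenSSL 1.1.1+) and matches literally. It does not handle wildcard DNS entries or IPv6 normalization (openssl prints ::1 as 0:0:0:0:0:0:0:1). The function name is hypothetical.

```shell
#!/bin/sh
# san_covers TARGET < san_text: print "yes" if TARGET appears as "DNS:TARGET" or
# "IP Address:TARGET" in the comma-separated SAN text on stdin, otherwise "no".
san_covers() {
  if tr ',' '\n' | sed 's/^[[:space:]]*//; s/[[:space:]]*$//' |
      grep -Fqx -e "IP Address:$1" -e "DNS:$1"; then
    echo yes
  else
    echo no
  fi
}

# Usage from admin-lb:
# ssh k8s-node1 cat /etc/kubernetes/ssl/apiserver.crt |
#   openssl x509 -noout -ext subjectAltName | san_covers 192.168.10.10
```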

root@admin-lb:~/kubespray# ssh k8s-node1 kubectl get cm -n kube-system kubeadm-config -o yaml

apiVersion: v1

data:

ClusterConfiguration: |

apiServer:

certSANs:

- kubernetes

- kubernetes.default

- kubernetes.default.svc

- kubernetes.default.svc.cluster.local

- 10.233.0.1

- localhost

- 127.0.0.1

- ::1

- k8s-node1

- k8s-node2

- k8s-node3

- lb-apiserver.kubernetes.local

- 192.168.10.11

- 192.168.10.12

- 192.168.10.13

- 10.0.2.15

- fd17:625c:f037:2:a00:27ff:fe90:eaeb

extraArgs:

- name: etcd-compaction-interval

value: 5m0s

- name: default-not-ready-toleration-seconds

value: "300"

- name: default-unreachable-toleration-seconds

value: "300"

- name: anonymous-auth

value: "True"

- name: authorization-mode

value: Node,RBAC

- name: bind-address

value: '::'

- name: apiserver-count

value: "3"

- name: endpoint-reconciler-type

value: lease

- name: service-node-port-range

value: 30000-32767

- name: service-cluster-ip-range

value: 10.233.0.0/18

- name: kubelet-preferred-address-types

value: InternalDNS,InternalIP,Hostname,ExternalDNS,ExternalIP

- name: profiling

value: "False"

- name: request-timeout

value: 1m0s

- name: enable-aggregator-routing

value: "False"

- name: service-account-lookup

value: "True"

- name: storage-backend

value: etcd3

- name: allow-privileged

value: "true"

- name: event-ttl

value: 1h0m0s

extraVolumes:

- hostPath: /etc/pki/tls

mountPath: /etc/pki/tls

name: etc-pki-tls

readOnly: true

- hostPath: /etc/pki/ca-trust

mountPath: /etc/pki/ca-trust

name: etc-pki-ca-trust

readOnly: true

apiVersion: kubeadm.k8s.io/v1beta4

caCertificateValidityPeriod: 87600h0m0s

certificateValidityPeriod: 8760h0m0s

certificatesDir: /etc/kubernetes/ssl

clusterName: cluster.local

controlPlaneEndpoint: 192.168.10.11:6443

controllerManager:

extraArgs:

- name: node-monitor-grace-period

value: 40s

- name: node-monitor-period

value: 5s

- name: cluster-cidr

value: 10.233.64.0/18

- name: service-cluster-ip-range

value: 10.233.0.0/18

- name: node-cidr-mask-size-ipv4

value: "24"

- name: profiling

value: "False"

- name: terminated-pod-gc-threshold

value: "12500"

- name: bind-address

value: '::'

- name: leader-elect-lease-duration

value: 15s

- name: leader-elect-renew-deadline

value: 10s

- name: configure-cloud-routes

value: "false"

dns:

disabled: true

imageRepository: registry.k8s.io/coredns

imageTag: v1.11.3

encryptionAlgorithm: RSA-2048

etcd:

external:

caFile: /etc/ssl/etcd/ssl/ca.pem

certFile: /etc/ssl/etcd/ssl/node-k8s-node1.pem

endpoints:

- https://192.168.10.11:2379

- https://192.168.10.12:2379

- https://192.168.10.13:2379

keyFile: /etc/ssl/etcd/ssl/node-k8s-node1-key.pem

imageRepository: registry.k8s.io

kind: ClusterConfiguration

kubernetesVersion: v1.32.9

networking:

dnsDomain: cluster.local

podSubnet: 10.233.64.0/18

serviceSubnet: 10.233.0.0/18

proxy: {}

scheduler:

extraArgs:

- name: bind-address

value: '::'

- name: config

value: /etc/kubernetes/kubescheduler-config.yaml

- name: profiling

value: "False"

extraVolumes:

- hostPath: /etc/kubernetes/kubescheduler-config.yaml

mountPath: /etc/kubernetes/kubescheduler-config.yaml

name: kubescheduler-config

readOnly: true

kind: ConfigMap

metadata:

creationTimestamp: "2026-02-07T11:08:23Z"

name: kubeadm-config

namespace: kube-system

resourceVersion: "204"

uid: 9ca208bd-47df-4fbf-b4b1-2bedf9915470

# Add an IP and a domain to the certificate SANs

root@admin-lb:~/kubespray# echo "supplementary_addresses_in_ssl_keys: [192.168.10.10, k8s-api-srv.admin-lb.com]" >> inventory/mycluster/group_vars/k8s_cluster/k8s-cluster.yml

root@admin-lb:~/kubespray# grep "^[^#]" inventory/mycluster/group_vars/k8s_cluster/k8s-cluster.yml

# (new terminal) Monitoring

PLAY RECAP **********************************************************************************************************************************

k8s-node1 : ok=65 changed=11 unreachable=0 failed=0 skipped=62 rescued=0 ignored=0

k8s-node2 : ok=44 changed=9 unreachable=0 failed=0 skipped=57 rescued=0 ignored=0

k8s-node3 : ok=44 changed=9 unreachable=0 failed=0 skipped=57 rescued=0 ignored=0

Saturday 07 February 2026 23:01:09 +0900 (0:00:00.077) 0:00:43.080 *****

===============================================================================

kubernetes/control-plane : Kubeadm | Check apiserver.crt SAN hosts ------------------------------------------------------------------- 3.42s

kubernetes/control-plane : Kubeadm | Check apiserver.crt SAN IPs --------------------------------------------------------------------- 3.11s

kubernetes/control-plane : Backup old certs and keys --------------------------------------------------------------------------------- 2.42s

Gather minimal facts ----------------------------------------------------------------------------------------------------------------- 2.33s

kubernetes/preinstall : Create other directories of root owner ----------------------------------------------------------------------- 1.80s

kubernetes/control-plane : Backup old confs ------------------------------------------------------------------------------------------ 1.73s

kubernetes/control-plane : Update server field in component kubeconfigs -------------------------------------------------------------- 1.68s

kubernetes/control-plane : Install | Copy kubectl binary from download dir ----------------------------------------------------------- 1.63s

win_nodes/kubernetes_patch : debug --------------------------------------------------------------------------------------------------- 1.62s

kubernetes/control-plane : Kubeadm | Create kubeadm config --------------------------------------------------------------------------- 1.47s

kubernetes/preinstall : Create kubernetes directories -------------------------------------------------------------------------------- 1.39s

Gather necessary facts (hardware) ---------------------------------------------------------------------------------------------------- 1.32s

kubernetes/control-plane : Install script to renew K8S control plane certificates ---------------------------------------------------- 1.21s

kubernetes/control-plane : Fixup kubelet client cert rotation 1/2 -------------------------------------------------------------------- 0.95s

kubernetes/control-plane : Create kube-scheduler config ------------------------------------------------------------------------------ 0.89s

kubernetes/control-plane : Renew K8S control plane certificates monthly 2/2 ---------------------------------------------------------- 0.77s

kubernetes/control-plane : Kubeadm | regenerate apiserver cert 2/2 ------------------------------------------------------------------- 0.70s

kubernetes/control-plane : Kubeadm | regenerate apiserver cert 1/2 ------------------------------------------------------------------- 0.66s

kubernetes/control-plane : Install kubectl bash completion --------------------------------------------------------------------------- 0.62s

kubernetes/control-plane : Fixup kubelet client cert rotation 2/2 -------------------------------------------------------------------- 0.57s

# Requests to the 192.168.10.10 endpoint succeed!

root@admin-lb:~/kubespray# kubectl get node -v=6

I0207 23:01:27.019907 18704 loader.go:402] Config loaded from file: /root/.kube/config

I0207 23:01:27.021030 18704 envvar.go:172] "Feature gate default state" feature="WatchListClient" enabled=false

I0207 23:01:27.021056 18704 envvar.go:172] "Feature gate default state" feature="ClientsAllowCBOR" enabled=false

I0207 23:01:27.021075 18704 envvar.go:172] "Feature gate default state" feature="ClientsPreferCBOR" enabled=false

I0207 23:01:27.021080 18704 envvar.go:172] "Feature gate default state" feature="InformerResourceVersion" enabled=false

I0207 23:01:27.034217 18704 round_trippers.go:560] GET https://192.168.10.10:6443/api?timeout=32s 200 OK in 12 milliseconds

I0207 23:01:27.045862 18704 round_trippers.go:560] GET https://192.168.10.10:6443/apis?timeout=32s 200 OK in 6 milliseconds

I0207 23:01:27.092009 18704 round_trippers.go:560] GET https://192.168.10.10:6443/api/v1/nodes?limit=500 200 OK in 17 milliseconds

NAME STATUS ROLES AGE VERSION

k8s-node1 Ready control-plane 172m v1.32.9

k8s-node2 Ready control-plane 172m v1.32.9

k8s-node3 Ready control-plane 172m v1.32.9

k8s-node4 Ready <none> 172m v1.32.9

# Check with both the IP and the domain

root@admin-lb:~/kubespray# sed -i 's/192.168.10.10/k8s-api-srv.admin-lb.com/g' /root/.kube/config

# Additional check: the SAN list now includes 192.168.10.10 and k8s-api-srv.admin-lb.com

root@admin-lb:~/kubespray# ssh k8s-node1 cat /etc/kubernetes/ssl/apiserver.crt | openssl x509 -text -noout

Certificate:

Data:

Version: 3 (0x2)

Serial Number: 8107676958263781548 (0x7084413cbaeea8ac)

Signature Algorithm: sha256WithRSAEncryption

Issuer: CN=kubernetes

Validity

Not Before: Feb 7 14:02:16 2026 GMT

Not After : Feb 7 14:07:16 2027 GMT

Subject: CN=kube-apiserver

Subject Public Key Info:

Public Key Algorithm: rsaEncryption

Public-Key: (2048 bit)

Modulus:

00:e9:f2:37:35:79:e7:b5:57:29:21:ef:21:c5:69:

2d:b3:44:e3:91:0c:e5:c7:d3:f5:ec:05:8d:c7:53:

07:12:33:fb:54:38:a9:54:4e:28:de:e5:aa:ff:7f:

2f:d7:90:05:85:b4:3c:15:4f:fd:52:ea:d4:92:83:

65:72:70:88:a4:eb:2b:04:c7:b0:bb:06:24:86:c4:

e4:42:02:8f:bf:64:b8:13:2c:60:44:c2:57:bf:df:

c0:dc:7e:f3:3f:e5:03:d2:73:83:fd:f3:6e:7f:95:

d5:0c:11:da:a8:ec:3d:f2:f0:ee:49:6f:83:36:34:

b0:3a:1b:38:84:be:48:2b:2a:6c:76:db:1f:9c:8a:

70:92:f2:1e:b4:89:b3:90:f3:a6:86:80:ed:ee:ef:

2d:5f:d4:f3:20:19:ec:c9:6c:05:76:1e:f4:81:56:

37:b1:51:ac:5e:4b:f2:58:15:48:93:c2:13:94:7a:

5d:e7:b4:f7:54:d7:46:3e:72:bc:a6:91:ae:f2:36:

ab:ba:f5:e6:12:00:31:1e:0d:8d:d4:a4:1c:9d:53:

38:8b:e8:d0:3d:03:17:90:5b:4c:73:b2:b3:7a:c9:

bf:2d:dc:3a:6d:f6:e7:97:c4:f8:ed:7e:59:1d:31:

47:37:1d:ef:33:ee:76:bc:95:9f:32:c2:cf:27:fd:

03:db

Exponent: 65537 (0x10001)

X509v3 extensions:

X509v3 Key Usage: critical

Digital Signature, Key Encipherment

X509v3 Extended Key Usage:

TLS Web Server Authentication

X509v3 Basic Constraints: critical

CA:FALSE

X509v3 Authority Key Identifier:

C3:2F:41:17:C7:0A:99:FA:49:BC:06:96:EC:3B:FA:E2:02:B9:89:E7

X509v3 Subject Alternative Name:

DNS:k8s-api-srv.admin-lb.com, DNS:k8s-node1, DNS:k8s-node2, DNS:k8s-node3, DNS:kubernetes, DNS:kubernetes.default, DNS:kubernetes.default.svc, DNS:kubernetes.default.svc.cluster.local, DNS:lb-apiserver.kubernetes.local, DNS:localhost, IP Address:10.233.0.1, IP Address:192.168.10.11, IP Address:127.0.0.1, IP Address:0:0:0:0:0:0:0:1, IP Address:192.168.10.10, IP Address:192.168.10.12, IP Address:192.168.10.13, IP Address:10.0.2.15, IP Address:FD17:625C:F037:2:A00:27FF:FE90:EAEB

Signature Algorithm: sha256WithRSAEncryption

Signature Value:

64:f8:3e:f3:ee:cb:d9:55:bc:a2:cc:cb:0b:98:a4:32:21:9f:

64:0d:63:b9:8e:29:59:5a:47:da:c9:55:84:67:f9:ba:42:1c:

61:b1:57:bb:5c:6f:f1:9a:57:f9:9a:a6:9e:e4:ce:01:65:6f:

22:a4:a6:78:9b:92:67:a0:52:51:62:b8:f5:2f:50:87:29:be:

e1:07:2c:47:40:3e:9f:a2:af:f9:03:f8:2a:ed:cd:38:1e:c3:

b4:7c:af:3b:a1:e0:d9:3b:b2:d7:87:b4:6f:fe:7a:b1:4a:fb:

a8:07:72:63:c9:5f:65:42:e0:4b:fc:fd:58:d1:81:e5:90:2d:

18:28:39:18:f6:4b:22:b4:f3:d4:59:e7:d7:75:b0:83:d1:5e:

86:0e:28:67:1d:83:73:cd:7a:53:49:fb:de:91:f0:3e:55:9a:

2f:25:2a:72:58:44:cd:9e:d0:6e:c9:05:04:39:8c:c2:f2:0d:

b9:a7:57:2f:36:a2:68:54:5a:30:65:2c:06:c0:2e:3e:d1:56:

7e:61:8c:4f:bf:29:1a:59:ef:9a:c5:16:0f:6d:9a:21:91:89:

34:52:da:b7:b6:de:9d:7d:4e:d8:c5:91:cb:ac:55:6e:4c:b7:

32:d6:ed:34:e0:eb:60:6d:b5:75:40:b8:56:fa:ec:de:ea:e1:

95:a7:6c:df

# The kubeadm-config ConfigMap is not updated automatically after the initial install, and it is reportedly used during upgrades. Whenever you change the kubeadm config as above, update the ConfigMap yourself as well.
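This follows up on the note above about the kubeadm-config ConfigMap. After adding `supplementary_addresses_in_ssl_keys`, you can compare the certSANs recorded in the ConfigMap with the new certificate.

The sketch below extracts the `apiServer.certSANs` list from the ClusterConfiguration text. It assumes the block-list layout shown earlier (`certSANs:` followed by `- item` lines, with no blank lines in between) and stops at the first line that is not a list item. The function name is hypothetical.

```shell
#!/bin/sh
# certsans_list: read a kubeadm ClusterConfiguration on stdin and print its certSANs entries.
certsans_list() {
  awk '
    /^[[:space:]]*certSANs:/ { in_list = 1; next }
    in_list && /^[[:space:]]*- / { sub(/^[[:space:]]*- /, ""); print; next }
    in_list { exit }
  '
}

# Usage: is the external LB address recorded in the ConfigMap?
# kubectl -n kube-system get cm kubeadm-config -o jsonpath='{.data.ClusterConfiguration}' |
#   certsans_list | grep -Fx 192.168.10.10
```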

Node management

Adding node k8s-node5

# Add node k8s-node5

# Edit inventory.ini

root@admin-lb:~/kubespray# cat << EOF > /root/kubespray/inventory/mycluster/inventory.ini

[kube_control_plane]

k8s-node1 ansible_host=192.168.10.11 ip=192.168.10.11 etcd_member_name=etcd1

k8s-node2 ansible_host=192.168.10.12 ip=192.168.10.12 etcd_member_name=etcd2

k8s-node3 ansible_host=192.168.10.13 ip=192.168.10.13 etcd_member_name=etcd3

[etcd:children]

kube_control_plane

[kube_node]

k8s-node4 ansible_host=192.168.10.14 ip=192.168.10.14

k8s-node5 ansible_host=192.168.10.15 ip=192.168.10.15

EOF

root@admin-lb:~/kubespray# ansible-inventory -i /root/kubespray/inventory/mycluster/inventory.ini --graph

@all:

|--@ungrouped:

|--@etcd:

| |--@kube_control_plane:

| | |--k8s-node1

| | |--k8s-node2

| | |--k8s-node3

|--@kube_node:

| |--k8s-node4

| |--k8s-node5
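`ansible-inventory --graph` already shows the groups. For a scripted pre-check before running `scale.yml --limit=k8s-node5`, awk can pull out the hosts listed directly under one INI section. This is a sketch: it does not expand `:children` groups or host range patterns, and the function name is hypothetical.

```shell
#!/bin/sh
# hosts_in_group GROUP < inventory.ini: print hosts listed directly under [GROUP].
# Skips blank and commented lines; does not expand [GROUP:children] or host patterns.
hosts_in_group() {
  awk -v grp="[$1]" '
    /^[[:space:]]*$/ { next }
    /^[[:space:]]*[#;]/ { next }
    /^\[/ { in_grp = ($1 == grp); next }
    in_grp { print $1 }
  '
}

# Usage:
# hosts_in_group kube_node < inventory/mycluster/inventory.ini | grep -Fx k8s-node5
```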

# Check Ansible connectivity

root@admin-lb:~/kubespray# ansible -i inventory/mycluster/inventory.ini k8s-node5 -m ping

[WARNING]: Platform linux on host k8s-node5 is using the discovered Python interpreter at /usr/bin/python3.12, but future installation of

another Python interpreter could change the meaning of that path. See https://docs.ansible.com/ansible-

core/2.17/reference_appendices/interpreter_discovery.html for more information.

k8s-node5 | SUCCESS => {

"ansible_facts": {

"discovered_interpreter_python": "/usr/bin/python3.12"

},

"changed": false,

"ping": "pong"

}

# Add the worker node

root@admin-lb:~/kubespray# ANSIBLE_FORCE_COLOR=true ansible-playbook -i inventory/mycluster/inventory.ini -v scale.yml --limit=k8s-node5 -e kube_version="1.32.9" | tee kubespray_add_worker_node.log

# Monitor and check (watch output from a separate terminal)

Every 2.0s: kubectl get node admin-lb: Sat Feb 7 23:19:31 2026

NAME STATUS ROLES AGE VERSION

k8s-node1 Ready control-plane 3h11m v1.32.9

k8s-node2 Ready control-plane 3h10m v1.32.9

k8s-node3 Ready control-plane 3h10m v1.32.9

k8s-node4 Ready <none> 3h10m v1.32.9

k8s-node5 Ready <none> <invalid> v1.32.9

root@admin-lb:~/kubespray# kubectl get node -owide

NAME STATUS ROLES AGE VERSION INTERNAL-IP EXTERNAL-IP OS-IMAGE KERNEL-VERSION CONTAINER-RUNTIME

k8s-node1 Ready control-plane 3h17m v1.32.9 192.168.10.11 <none> Rocky Linux 10.0 (Red Quartz) 6.12.0-55.39.1.el10_0.aarch64 containerd://2.1.5

k8s-node2 Ready control-plane 3h17m v1.32.9 192.168.10.12 <none> Rocky Linux 10.0 (Red Quartz) 6.12.0-55.39.1.el10_0.aarch64 containerd://2.1.5

k8s-node3 Ready control-plane 3h17m v1.32.9 192.168.10.13 <none> Rocky Linux 10.0 (Red Quartz) 6.12.0-55.39.1.el10_0.aarch64 containerd://2.1.5

k8s-node4 Ready <none> 3h16m v1.32.9 192.168.10.14 <none> Rocky Linux 10.0 (Red Quartz) 6.12.0-55.39.1.el10_0.aarch64 containerd://2.1.5

k8s-node5 Ready <none> 2m2s v1.32.9 192.168.10.15 <none> Rocky Linux 10.0 (Red Quartz) 6.12.0-55.39.1.el10_0.aarch64 containerd://2.1.5
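To run the same check without watching the screen, for example in a script that runs after `scale.yml` finishes, the STATUS column can be filtered directly. Below is a minimal sketch that reads `kubectl get node --no-headers` output on stdin, where NAME is column 1 and STATUS is column 2. The helper name `not_ready_nodes` is hypothetical. Any status other than exactly `Ready` is reported, including `Ready,SchedulingDisabled` for a cordoned node.

```shell
# Hypothetical helper: print "name: status" for every node whose STATUS
# is not exactly "Ready". Input is `kubectl get node --no-headers` output.
not_ready_nodes() {
  awk '$2 != "Ready" { print $1 ": " $2 }'
}
```

Usage: `kubectl get node --no-headers | not_ready_nodes`. Empty output means every node, including the new k8s-node5, is Ready.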

# Inspect what changed on the new node

root@admin-lb:~/kubespray# ssh k8s-node5 tree /etc/kubernetes

/etc/kubernetes

├── kubeadm-client.conf

├── kubelet.conf

├── kubelet.conf.15422.2026-02-07@23:18:51~

├── kubelet-config.yaml

├── kubelet.env

├── manifests

│ └── nginx-proxy.yml

├── pki -> /etc/kubernetes/ssl

└── ssl

└── ca.crt

4 directories, 7 files
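The `manifests/nginx-proxy.yml` static pod is the worker-side client load balancer. On the worker, kubelet and kube-proxy talk to a local nginx, which forwards to the three control-plane API servers instead of pinning one of them. One way to see this is to look at which API server URL the worker's kubeconfig targets. Below is a minimal sketch that prints the `server:` entries of a kubeconfig read from a file or from stdin. The helper name `kubeconfig_servers` is hypothetical. The expectation that a kubespray worker points at a local endpoint (such as `https://localhost:6443`) rather than 192.168.10.11-13 directly is an assumption to verify on your own node.

```shell
# Hypothetical helper: print the API server URL(s) in a kubeconfig.
# Reads the file given as $1, or stdin when no argument is passed ("-").
kubeconfig_servers() {
  awk '$1 == "server:" { print $2 }' "${1:--}"
}
```

Usage: `ssh k8s-node5 cat /etc/kubernetes/kubelet.conf | kubeconfig_servers`.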

root@admin-lb:~/kubespray# ssh k8s-node5 tree /var/lib/kubelet

/var/lib/kubelet

├── checkpoints

├── config.yaml

├── cpu_manager_state

├── device-plugins

│ └── kubelet.sock

├── kubeadm-flags.env

├── memory_manager_state

├── pki

│ ├── kubelet-client-2026-02-07-23-18-50.pem

│ ├── kubelet-client-current.pem -> /var/lib/kubelet/pki/kubelet-client-2026-02-07-23-18-50.pem

│ ├── kubelet.crt

│ └── kubelet.key

├── plugins

├── plugins_registry

├── pod-resources

│ └── kubelet.sock

└── pods

├── 577f6a66-08fa-4cd2-ae1e-2f104c1915cd

│ ├── containers

│ │ ├── install-cni

│ │ │ └── 0cb09e5c

│ │ ├── install-cni-plugin

│ │ │ └── 481866fe

│ │ └── kube-flannel

│ │ ├── 7001cd69

│ │ └── bd84bb93

│ ├── etc-hosts

│ ├── plugins

│ │ └── kubernetes.io~empty-dir

│ │ ├── wrapped_flannel-cfg

│ │ │ └── ready

│ │ └── wrapped_kube-api-access-v7jkz

│ │ └── ready

│ └── volumes

│ ├── kubernetes.io~configmap

│ │ └── flannel-cfg

│ │ ├── cni-conf.json -> ..data/cni-conf.json

│ │ └── net-conf.json -> ..data/net-conf.json

│ └── kubernetes.io~projected

│ └── kube-api-access-v7jkz

│ ├── ca.crt -> ..data/ca.crt

│ ├── namespace -> ..data/namespace

│ └── token -> ..data/token

├── ec9c3b89-13fd-42fa-b798-a313ac3f4f05

│ ├── containers

│ │ └── kube-proxy

│ │ └── 94f3c4e8

│ ├── etc-hosts

│ ├── plugins

│ │ └── kubernetes.io~empty-dir

│ │ ├── wrapped_kube-api-access-6lwg5

│ │ │ └── ready

│ │ └── wrapped_kube-proxy

│ │ └── ready

│ └── volumes

│ ├── kubernetes.io~configmap

│ │ └── kube-proxy

│ │ ├── config.conf -> ..data/config.conf

│ │ └── kubeconfig.conf -> ..data/kubeconfig.conf

│ └── kubernetes.io~projected

│ └── kube-api-access-6lwg5

│ ├── ca.crt -> ..data/ca.crt

│ ├── namespace -> ..data/namespace

│ └── token -> ..data/token

└── f8f0790f0f374d27632f9ad8c3ae4aaf

├── containers

│ └── nginx-proxy

│ └── f0c0f90b

├── etc-hosts

├── plugins

└── volumes

39 directories, 33 files

root@admin-lb:~/kubespray# ssh k8s-node5 pstree -a

systemd --switched-root --system --deserialize=46 no_timer_check

|-NetworkManager --no-daemon

| `-3*[{NetworkManager}]

|-VBoxService --pidfile /var/run/vboxadd-service.sh

| `-8*[{VBoxService}]

|-agetty -o -- \\u --noreset --noclear - linux

|-atd -f

|-auditd

| |-sedispatch

| `-2*[{auditd}]

|-chronyd -F 2

|-containerd

| `-10*[{containerd}]

|-containerd-shim -namespace k8s.io -id 635b3e5418716e220d6719eb0af617ce7c85d011b535a2c09b8cbde454440e5e-addre

| |-nginx

| | |-nginx

| | | `-32*[{nginx}]

| | `-nginx

| | `-32*[{nginx}]

| |-pause

| `-11*[{containerd-shim}]

|-containerd-shim -namespace k8s.io -id 00b1d2366baa0cbb7bb001f589a64d4b293133143e4e44329538c97e27128697-addre

| |-flanneld --ip-masq --kube-subnet-mgr --iface=enp0s9

| | `-9*[{flanneld}]

| |-pause

| `-12*[{containerd-shim}]

|-containerd-shim -namespace k8s.io -id ad97b97df62815a13dce7ddf8a8f25023c554cf5c95936ce6b2a1f04aabceb59-addre

| |-kube-proxy --config=/var/lib/kube-proxy/config.conf --hostname-override=k8s-node5

| | `-6*[{kube-proxy}]

| |-pause

| `-11*[{containerd-shim}]

|-crond -n

|-dbus-broker-lau --scope system --audit

| `-dbus-broker --log 4 --controller 9 --machine-id d52e598aa3894a2bbed8c46cd78feb65 --max-bytes 536870912 --max-fds 4096 ...

|-fwupd

| `-5*[{fwupd}]

|-gpg-agent --homedir /var/lib/fwupd/gnupg --use-standard-socket --daemon

| |-scdaemon --multi-server --homedir /var/lib/fwupd/gnupg

| | `-{scdaemon}

| `-{gpg-agent}

|-gssproxy -i

| `-5*[{gssproxy}]

|-irqbalance

| `-{irqbalance}

|-kubelet --v=2 --node-ip=192.168.10.15 --hostname-override=k8s-node5 --bootstrap-kubeconfig=/etc/kubernetes/bootstrap-

| `-12*[{kubelet}]

|-lsmd -d

|-polkitd --no-debug --log-level=err

| `-3*[{polkitd}]

|-rpcbind -w -f

|-rsyslogd -n

| `-2*[{rsyslogd}]

|-sshd

| |-sshd-session

| | `-sshd-session

| `-sshd-session

| `-sshd-session

| `-pstree -a

|-systemd --user

| `-(sd-pam)

|-systemd-journal

|-systemd-logind

|-systemd-udevd

|-systemd-userdbd

| |-systemd-userwor

| |-systemd-userwor

| `-systemd-userwor

|-tuned -Es /usr/sbin/tuned -l -P

| `-3*[{tuned}]

`-udisksd

`-6*[{udisksd}]
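The pstree output shows `flanneld ... --iface=enp0s9`, which confirms that the `flannel_interface: enp0s9` setting added to `k8s-net-flannel.yml` reached the new node. Below is a minimal sketch that extracts that value from process command lines on stdin, for example from `ps -eo args`. The helper name `flannel_iface` is hypothetical, and it assumes the flag is passed in the `--iface=<name>` form seen above.

```shell
# Hypothetical helper: print the --iface value of every flanneld process
# found in command lines on stdin (one process per line).
flannel_iface() {
  awk '$1 == "flanneld" || $1 ~ /\/flanneld$/ {
    for (i = 2; i <= NF; i++)
      if ($i ~ /^--iface=/) print substr($i, 9)   # drop the 8-char "--iface=" prefix
  }'
}
```

Usage: `ssh k8s-node5 ps -eo args | flannel_iface`. Based on the pstree above, it should print enp0s9.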

# Spread the sample pods onto the new node

root@admin-lb:~/kubespray# kubectl get pod -owide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

webpod-697b545f57-q22tz 1/1 Running 0 41m 10.233.67.6 k8s-node4 <none> <none>

webpod-697b545f57-srd2b 1/1 Running 0 41m 10.233.67.5 k8s-node4 <none> <none>

root@admin-lb:~/kubespray# kubectl scale deployment webpod --replicas 1

deployment.apps/webpod scaled

root@admin-lb:~/kubespray# kubectl get pod -owide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

webpod-697b545f57-srd2b 1/1 Running 0 46m 10.233.67.5 k8s-node4 <none> <none>

root@admin-lb:~/kubespray# kubectl scale deployment webpod --replicas 2

deployment.apps/webpod scaled

root@admin-lb:~/kubespray# kubectl get pod -owide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

webpod-697b545f57-gw28s 0/1 ContainerCreating 0 4m50s <none> k8s-node5 <none> <none>

webpod-697b545f57-srd2b 1/1 Running 0 46m 10.233.67.5 k8s-node4 <none> <none>

root@admin-lb:~/kubespray#
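After scaling back to 2 replicas, the scheduler placed the new replica on k8s-node5. Below is a minimal sketch that summarizes the spread as a count of pods per node, read from `kubectl get pod -owide --no-headers` on stdin. The helper name `pods_per_node` is hypothetical. NODE is taken as the third column from the end, because the last two columns (NOMINATED NODE, READINESS GATES) are assumed to be single tokens such as `<none>`. Counting from the end keeps the parsing correct even when RESTARTS spills into extra fields like `2 (5m ago)`.

```shell
# Hypothetical helper: count pods per node from
# `kubectl get pod -owide --no-headers` output, sorted by node name.
pods_per_node() {
  awk '{ count[$(NF-2)]++ } END { for (n in count) print n, count[n] }' | sort
}
```

Usage: `kubectl get pod -owide --no-headers | pods_per_node`. With the last listing above, this gives one pod each on k8s-node4 and k8s-node5.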

Node removal

# Apply a PDB to the webpod deployment: maxUnavailable: 0 allows no voluntary disruption, so with 2 replicas both pods must stay Ready and a drain/eviction cannot evict even a single pod

root@admin-lb:~/kubespray# kubectl scale deployment webpod --replicas 1

deployment.apps/webpod scaled

root@admin-lb:~/kubespray# kubectl scale deployment webpod --replicas 2

deployment.apps/webpod scaled

root@admin-lb:~/kubespray# cat <<EOF | kubectl apply -f -
apiVersion: policy/v1
kind: PodDisruptionBudget
metadata:
  name: webpod
  namespace: default
spec:
  maxUnavailable: 0
  selector:
    matchLabels:
      app: webpod
EOF

poddisruptionbudget.policy/webpod created

root@admin-lb:~/kubespray# kubectl get pdb

NAME MIN AVAILABLE MAX UNAVAILABLE ALLOWED DISRUPTIONS AGE

webpod N/A 0 0 <invalid>
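The `ALLOWED DISRUPTIONS 0` value follows from the PDB arithmetic. With an integer `maxUnavailable`, the desired healthy count is the expected pod count minus `maxUnavailable`, and the allowed disruptions are the current healthy count minus that value, floored at 0. For webpod this is 2 - 0 = 2 desired, and 2 - 2 = 0 allowed. Below is a minimal sketch of that calculation. The helper name `pdb_allowed_disruptions` is hypothetical. It handles only integer values (not percentages) and assumes an expected pod count above 0.

```shell
# Hypothetical helper reproducing the PDB arithmetic for an integer
# maxUnavailable:
#   desiredHealthy     = max(0, expectedPods - maxUnavailable)
#   disruptionsAllowed = max(0, currentHealthy - desiredHealthy)
# Arguments: expectedPods currentHealthy maxUnavailable
pdb_allowed_disruptions() {
  local expected=$1 healthy=$2 max_unavailable=$3
  local desired=$(( expected - max_unavailable ))
  (( desired < 0 )) && desired=0
  local allowed=$(( healthy - desired ))
  (( allowed < 0 )) && allowed=0
  echo "$allowed"
}
```

`pdb_allowed_disruptions 2 2 0` prints 0, which matches the table above. Relaxing the budget to `maxUnavailable: 1` would give `pdb_allowed_disruptions 2 2 1`, which prints 1, so one pod could then be evicted during a drain.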

# Removal fails

root@admin-lb:~/kubespray# ansible-playbook -i inventory/mycluster/inventory.ini -v remove-node.yml --list-tags

Using /root/kubespray/ansible.cfg as config file

[WARNING]: Could not match supplied host pattern, ignoring: bastion

[WARNING]: Could not match supplied host pattern, ignoring: k8s_cluster

[WARNING]: Could not match supplied host pattern, ignoring: calico_rr

playbook: remove-node.yml

play #1 (localhost): Validate nodes for removal TAGS: []

TASK TAGS: []

play #2 (all): Check Ansible version TAGS: [always]

TASK TAGS: [always, check]

play #3 (all): Inventory setup and validation TAGS: [always]

TASK TAGS: [always]