These are my notes from week 6 of the Cloudnet K8s Deploy study.

Air-gapped (closed network) environment overview

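Key components needed to run services in an air-gapped network

- NTP Server ← usually a vendor appliance (or software/solution), deployed with redundancy (HA) by default and managed by the infrastructure department/team

- DNS Server ← usually a vendor appliance (or software/solution), deployed with redundancy (HA) by default and managed by the infrastructure department/team

- Network Gateway (IGW, NATGW): lets servers reach other networks inside the internal network and, when needed, the DMZ/external network ← usually built from network routers/switches and managed by the network team

- Local (Mirror) YUM/DNF Repository: Linux package repository, e.g. reposync + createrepo

- Private Container (Image) Registry: container image repository, e.g. Docker Registry (registry), Harbor

- Helm Artifact Repository (Server): Helm chart repository, e.g. ChartMuseum, OCI (Artifact) Registry

- Private PyPI (Python Package Index) Mirror: Python package repository, e.g. Devpi

- Private Go Module Proxy: private Go module proxy, e.g. Athens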
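Once these services exist, it helps to confirm from a node that each one actually answers. Below is a minimal bash sketch using bash's `/dev/tcp` redirection and coreutils `timeout`. The function names and the endpoint list (including port 5000 for a registry) are hypothetical; NTP is left out because it runs over UDP 123, which `/dev/tcp` cannot probe.

```shell
#!/usr/bin/env bash
# Minimal sketch: TCP reachability check for internal services.
# Relies on bash's /dev/tcp redirection and GNU coreutils `timeout`.

# Return 0 if a TCP connection to host:port succeeds within 2 seconds.
check_tcp() {
  local host="$1" port="$2"
  timeout 2 bash -c "exec 3<>/dev/tcp/${host}/${port}" 2>/dev/null
}

# Each argument is "name:host:port" (hypothetical examples in the usage line).
# Prints OK/FAIL per endpoint; returns 1 if any endpoint failed.
check_services() {
  local entry name host port rc=0
  for entry in "$@"; do
    IFS=: read -r name host port <<< "$entry"
    if check_tcp "$host" "$port"; then
      echo "OK   ${name} ${host}:${port}"
    else
      echo "FAIL ${name} ${host}:${port}"
      rc=1
    fi
  done
  return "$rc"
}
```

Usage (hypothetical endpoints): `check_services dns:192.168.10.10:53 repo:192.168.10.10:80 registry:192.168.10.10:5000`.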

Air-gapped services lab

Normally the flow is DMZ server → internal admin server (import files, run kubespray), but to save PC resources and time, this lab uses a single server for both roles.

Network Gateway (IGW, NATGW)

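[k8s-node] Basic network setup: bring enp0s8 down, add a default route via enp0s9 → check external connectivity

# Perform the same steps on both k8s-node1 and k8s-node2

# Bring the enp0s8 connection down: external communication stops immediately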

root@k8s-node1:~# nmcli connection down enp0s8

Connection 'enp0s8' successfully deactivated (D-Bus active path: /org/freedesktop/NetworkManager/ActiveConnection/2)

# Check enp0s8: the assigned IP is removed, and the route used for external communication is deleted too

root@k8s-node1:~# cat /etc/NetworkManager/system-connections/enp0s8.nmconnection

[connection]

id=enp0s8

uuid=7f94e839-e070-4bfe-9330-07090381d89f

type=ethernet

autoconnect=false

interface-name=enp0s8

timestamp=1771084427

[ethernet]

[ipv4]

method=auto

[ipv6]

addr-gen-mode=eui64

method=ignore

[proxy]

# Keep enp0s8 from reconnecting automatically (e.g. after a reboot)

root@k8s-node1:~# nmcli connection modify enp0s8 connection.autoconnect no

# Add a default route on enp0s9 for external communication: metric (priority) 200

root@k8s-node1:~# nmcli connection modify enp0s9 +ipv4.routes "0.0.0.0/0 192.168.10.10 200"

# Apply the settings

root@k8s-node1:~# nmcli connection up enp0s9

Connection successfully activated (D-Bus active path: /org/freedesktop/NetworkManager/ActiveConnection/6)

root@k8s-node1:~# ip route

default via 192.168.10.10 dev enp0s9 proto static metric 200

192.168.10.0/24 dev enp0s9 proto kernel scope link src 192.168.10.11 metric 100
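When several default routes exist, the kernel uses the one with the lowest metric, so if enp0s8 still had its own default route with a lower metric, it would win over the metric-200 route via enp0s9. A minimal sketch (bash wrapping awk, hypothetical function name) that picks the effective default route from `ip route` output on stdin. IPv4 routes printed without a `metric` field are treated as metric 0; the DHCP default via 10.0.2.2 in the test data is hypothetical.

```shell
# Minimal sketch: print "gateway device metric" of the default route with the
# lowest metric, reading `ip route` output on stdin. Exits 1 if none exists.
effective_default_route() {
  awk '
    $1 == "default" {
      gw = ""; dev = ""; metric = 0   # no "metric" field means metric 0
      for (i = 2; i < NF; i++) {
        if ($i == "via")    gw = $(i + 1)
        if ($i == "dev")    dev = $(i + 1)
        if ($i == "metric") metric = $(i + 1) + 0
      }
      if (!found || metric < best) { best = metric; bgw = gw; bdev = dev; found = 1 }
    }
    END { if (found) print bgw, bdev, best; else exit 1 }
  '
}
```

Usage: `ip route | effective_default_route` should print `192.168.10.10 enp0s9 200` on the node configured above.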

# Check external connectivity (fails for now: NAT is not yet configured on admin, and no nameserver is set)

root@k8s-node1:~# ping -w 1 -W 1 8.8.8.8

PING 8.8.8.8 (8.8.8.8) 56(84) bytes of data.

--- 8.8.8.8 ping statistics ---

1 packets transmitted, 0 received, 100% packet loss, time 0ms

root@k8s-node1:~# curl www.google.com

curl: (6) Could not resolve host: www.google.com

# Check the DNS nameserver settings

root@k8s-node1:~# cat /etc/resolv.conf

# Generated by NetworkManager

root@k8s-node1:~# cat << EOF > /etc/resolv.conf

nameserver 168.126.63.1

nameserver 8.8.8.8

EOF

root@k8s-node1:~# curl www.google.com

[admin] Router/NAT setup

# Routing (IP forwarding): already configured

root@admin:~# sysctl -w net.ipv4.ip_forward=1

net.ipv4.ip_forward = 1

root@admin:~# cat <<EOF | tee /etc/sysctl.d/99-ipforward.conf

net.ipv4.ip_forward = 1

EOF

net.ipv4.ip_forward = 1

# NAT setup

root@admin:~# iptables -t nat -A POSTROUTING -o enp0s8 -j MASQUERADE

root@admin:~# iptables -t nat -S

-P PREROUTING ACCEPT

-P INPUT ACCEPT

-P OUTPUT ACCEPT

-P POSTROUTING ACCEPT

-A POSTROUTING -o enp0s8 -j MASQUERADE

root@admin:~# iptables -t nat -L -n -v

Chain PREROUTING (policy ACCEPT 0 packets, 0 bytes)

pkts bytes target prot opt in out source destination

Chain INPUT (policy ACCEPT 0 packets, 0 bytes)

pkts bytes target prot opt in out source destination

Chain OUTPUT (policy ACCEPT 0 packets, 0 bytes)

pkts bytes target prot opt in out source destination

Chain POSTROUTING (policy ACCEPT 1 packets, 104 bytes)

pkts bytes target prot opt in out source destination

0 0 MASQUERADE all -- * enp0s8 0.0.0.0/0 0.0.0.0/0

# After the NAT setup, retry from k8s-node1 -> success

root@k8s-node1:~# curl www.google.com

<!doctype html><html itemscope="" itemtype="http://schema.org/WebPage" lang="ko"><head><meta content="text/html; charset=UTF-8" http-equiv="Content-Type"><meta content="/images/branding/googleg/1x/googleg_standard_color_128dp.png"

# Remove the NAT rule again (iptables -t nat -D POSTROUTING -o enp0s8 -j MASQUERADE) so the internal k8s-nodes lose Internet access

root@admin:~# iptables -t nat -D POSTROUTING -o enp0s8 -j MASQUERADE
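`iptables -A` appends a new rule every time it runs, so re-running the NAT step stacks duplicate MASQUERADE rules. A sketch of an idempotent variant using `iptables -C` (which exits 0 when the rule already exists), plus an offline helper that inspects a saved `iptables -t nat -S` dump. Both function names are hypothetical, and rules added this way still disappear on reboot unless they are saved separately.

```shell
# Minimal sketch: add the MASQUERADE rule only if it is not already present.
ensure_masquerade() {
  local out_if="$1"
  if ! iptables -t nat -C POSTROUTING -o "$out_if" -j MASQUERADE 2>/dev/null; then
    iptables -t nat -A POSTROUTING -o "$out_if" -j MASQUERADE
  fi
}

# Offline helper: look for the same rule in `iptables -t nat -S` output on stdin.
has_masquerade_rule() {
  local out_if="$1"
  grep -qx -- "-A POSTROUTING -o ${out_if} -j MASQUERADE"
}
```

Usage on admin: `ensure_masquerade enp0s8`, or `iptables -t nat -S | has_masquerade_rule enp0s8 && echo present`.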

NTP Server - Client

[admin] NTP server setup

# Check the current NTP servers, time sync settings, and status

root@admin:~# systemctl status chronyd.service --no-pager

● chronyd.service - NTP client/server

Loaded: loaded (/usr/lib/systemd/system/chronyd.service; enabled; preset: enabled)

Active: active (running) since Sun 2026-02-15 00:11:31 KST; 52min ago

Invocation: eac0b93de6794cdda0c6339584f15332

Docs: man:chronyd(8)

man:chrony.conf(5)

Main PID: 651 (chronyd)

Tasks: 1 (limit: 12336)

Memory: 4.7M (peak: 5.2M)

CPU: 53ms

CGroup: /system.slice/chronyd.service

└─651 /usr/sbin/chronyd -F 2

Feb 15 00:11:31 localhost systemd[1]: Starting chronyd.service - NTP client/server...

Feb 15 00:11:31 localhost chronyd[651]: chronyd version 4.6.1 starting (+CMDMON +NTP +REFCLOCK +RTC +PRIVDROP +SCFILTER +SIGND +ASYNCDNS +NTS +SECHASH +IPV6 +DEBUG)

Feb 15 00:11:31 localhost chronyd[651]: Frequency -3.765 +/- 4.378 ppm read from /var/lib/chrony/drift

Feb 15 00:11:31 localhost chronyd[651]: Loaded seccomp filter (level 2)

Feb 15 00:11:31 localhost systemd[1]: Started chronyd.service - NTP client/server.

root@admin:~# grep "^[^#]" /etc/chrony.conf

pool 2.rocky.pool.ntp.org iburst

sourcedir /run/chrony-dhcp

driftfile /var/lib/chrony/drift

makestep 1.0 3

rtcsync

ntsdumpdir /var/lib/chrony

logdir /var/log/chrony

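# Shows which NTP servers chrony knows about and which one it uses as the reference for setting the time (the list is empty here: the pool name cannot be resolved, as the dig below shows)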

root@admin:~# chronyc sources -v

.-- Source mode '^' = server, '=' = peer, '#' = local clock.

/ .- Source state '*' = current best, '+' = combined, '-' = not combined,

| / 'x' = may be in error, '~' = too variable, '?' = unusable.

|| .- xxxx [ yyyy ] +/- zzzz

|| Reachability register (octal) -. | xxxx = adjusted offset,

|| Log2(Polling interval) --. | | yyyy = measured offset,

|| \ | | zzzz = estimated error.

|| | | \

MS Name/IP address Stratum Poll Reach LastRx Last sample

===============================================================================

root@admin:~# dig +short 2.rocky.pool.ntp.org

;; communications error to 0.192.249.101#53: timed out

;; communications error to 0.192.249.101#53: timed out

;; communications error to 0.192.249.101#53: timed out

;; no servers could be reached

# chrony configuration

root@admin:~# cp /etc/chrony.conf /etc/chrony.bak

root@admin:~# cat << EOF > /etc/chrony.conf

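# External public NTP servers (including the Korean pool)

server pool.ntp.org iburst

server kr.pool.ntp.org iburst

# Allow hosts on the internal network (192.168.10.0/24) to connect to this server and sync time

allow 192.168.10.0/24

# Keep serving time to the internal network from the local clock even if the external network is down (optional)

local stratum 10

# Logs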

logdir /var/log/chrony

EOF

root@admin:~# systemctl restart chronyd.service

root@admin:~# systemctl status chronyd.service --no-pager

● chronyd.service - NTP client/server

Loaded: loaded (/usr/lib/systemd/system/chronyd.service; enabled; preset: enabled)

Active: active (running) since Sun 2026-02-15 01:06:13 KST; 4s ago

Invocation: 2a7e1f6f000d4573bb73f06a4a110466

Docs: man:chronyd(8)

man:chrony.conf(5)

Process: 5248 ExecStart=/usr/sbin/chronyd $OPTIONS (code=exited, status=0/SUCCESS)

Main PID: 5250 (chronyd)

Tasks: 1 (limit: 12336)

Memory: 908K (peak: 2.8M)

CPU: 31ms

CGroup: /system.slice/chronyd.service

└─5250 /usr/sbin/chronyd -F 2

Feb 15 01:06:13 admin systemd[1]: Starting chronyd.service - NTP client/server...

Feb 15 01:06:13 admin chronyd[5250]: chronyd version 4.6.1 starting (+CMDMON +NTP +REFCLOCK +RTC +PRIVDROP +SCFILTER +SIGND +ASYNCDNS +NTS +SECHASH +IPV6 +DEBUG)

Feb 15 01:06:13 admin chronyd[5250]: Initial frequency -3.765 ppm

Feb 15 01:06:13 admin chronyd[5250]: Loaded seccomp filter (level 2)

Feb 15 01:06:13 admin systemd[1]: Started chronyd.service - NTP client/server.

# Check status

root@admin:~# timedatectl status

Local time: Sun 2026-02-15 01:06:33 KST

Universal time: Sat 2026-02-14 16:06:33 UTC

RTC time: Sat 2026-02-14 16:06:58

Time zone: Asia/Seoul (KST, +0900)

System clock synchronized: no

NTP service: active

RTC in local TZ: no

root@admin:~# chronyc sources -v

.-- Source mode '^' = server, '=' = peer, '#' = local clock.

/ .- Source state '*' = current best, '+' = combined, '-' = not combined,

| / 'x' = may be in error, '~' = too variable, '?' = unusable.

|| .- xxxx [ yyyy ] +/- zzzz

|| Reachability register (octal) -. | xxxx = adjusted offset,

|| Log2(Polling interval) --. | | yyyy = measured offset,

|| \ | | zzzz = estimated error.

|| | | \

MS Name/IP address Stratum Poll Reach LastRx Last sample

===============================================================================

[k8s-node] NTP client setup

# Check status

root@k8s-node1:~# timedatectl status

Local time: Sun 2026-02-15 01:07:25 KST

Universal time: Sat 2026-02-14 16:07:25 UTC

RTC time: Sat 2026-02-14 16:07:50

Time zone: Asia/Seoul (KST, +0900)

System clock synchronized: no

NTP service: active

RTC in local TZ: no

root@k8s-node1:~# chronyc sources -v

.-- Source mode '^' = server, '=' = peer, '#' = local clock.

/ .- Source state '*' = current best, '+' = combined, '-' = not combined,

| / 'x' = may be in error, '~' = too variable, '?' = unusable.

|| .- xxxx [ yyyy ] +/- zzzz

|| Reachability register (octal) -. | xxxx = adjusted offset,

|| Log2(Polling interval) --. | | yyyy = measured offset,

|| \ | | zzzz = estimated error.

|| | | \

MS Name/IP address Stratum Poll Reach LastRx Last sample

===============================================================================

# chrony configuration

root@k8s-node1:~# cp /etc/chrony.conf /etc/chrony.bak

root@k8s-node1:~# cat << EOF > /etc/chrony.conf

server 192.168.10.10 iburst

logdir /var/log/chrony

EOF
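The admin (server) and node (client) chrony.conf files in this section differ only in a few directives. A sketch of one generator for both roles: the function name is hypothetical, the address and subnet default to this lab's values, and the `driftfile` and `makestep 1.0 3` lines are carried over from the stock Rocky chrony.conf shown earlier (they are not in the author's configs) so that a large initial offset is stepped rather than slowly slewed.

```shell
# Minimal sketch: write chrony.conf for the "server" (admin) or "client" (k8s-node) role.
NTP_SERVER="${NTP_SERVER:-192.168.10.10}"   # internal NTP server (lab value)
NTP_SUBNET="${NTP_SUBNET:-192.168.10.0/24}" # clients allowed to sync (lab value)

write_chrony_conf() {
  local role="$1" dest="$2"
  case "$role" in
    server)
      cat > "$dest" <<EOF
server pool.ntp.org iburst
server kr.pool.ntp.org iburst
allow ${NTP_SUBNET}
local stratum 10
driftfile /var/lib/chrony/drift
makestep 1.0 3
logdir /var/log/chrony
EOF
      ;;
    client)
      cat > "$dest" <<EOF
server ${NTP_SERVER} iburst
driftfile /var/lib/chrony/drift
makestep 1.0 3
logdir /var/log/chrony
EOF
      ;;
    *)
      echo "unknown role: ${role} (expected server or client)" >&2
      return 1
      ;;
  esac
}
```

Usage on a node: `write_chrony_conf client /etc/chrony.conf && systemctl restart chronyd`.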

root@k8s-node1:~# systemctl restart chronyd.service

root@k8s-node1:~# systemctl status chronyd.service --no-pager

● chronyd.service - NTP client/server

Loaded: loaded (/usr/lib/systemd/system/chronyd.service; enabled; preset: enabled)

Active: active (running) since Sun 2026-02-15 01:08:08 KST; 4s ago

Invocation: fa923efadd824d92aaccc8bf1e7f75bd

Docs: man:chronyd(8)

man:chrony.conf(5)

Process: 5237 ExecStart=/usr/sbin/chronyd $OPTIONS (code=exited, status=0/SUCCESS)

Main PID: 5240 (chronyd)

Tasks: 1 (limit: 12336)

Memory: 828K (peak: 2.8M)

CPU: 36ms

CGroup: /system.slice/chronyd.service

└─5240 /usr/sbin/chronyd -F 2

Feb 15 01:08:08 k8s-node1 systemd[1]: Starting chronyd.service - NTP client/server...

Feb 15 01:08:08 k8s-node1 chronyd[5240]: chronyd version 4.6.1 starting (+CMDMON +NTP +…EBUG)

Feb 15 01:08:08 k8s-node1 chronyd[5240]: Initial frequency -3.765 ppm

Feb 15 01:08:08 k8s-node1 chronyd[5240]: Loaded seccomp filter (level 2)

Feb 15 01:08:08 k8s-node1 systemd[1]: Started chronyd.service - NTP client/server.

Feb 15 01:08:12 k8s-node1 chronyd[5240]: Selected source 192.168.10.10

Hint: Some lines were ellipsized, use -l to show in full.

# Check status

root@k8s-node1:~# timedatectl status

Local time: Sun 2026-02-15 01:08:33 KST

Universal time: Sat 2026-02-14 16:08:33 UTC

RTC time: Sat 2026-02-14 16:08:59

Time zone: Asia/Seoul (KST, +0900)

System clock synchronized: yes

NTP service: active

RTC in local TZ: no

root@k8s-node1:~# chronyc sources -v

.-- Source mode '^' = server, '=' = peer, '#' = local clock.

/ .- Source state '*' = current best, '+' = combined, '-' = not combined,

| / 'x' = may be in error, '~' = too variable, '?' = unusable.

|| .- xxxx [ yyyy ] +/- zzzz

|| Reachability register (octal) -. | xxxx = adjusted offset,

|| Log2(Polling interval) --. | | yyyy = measured offset,

|| \ | | zzzz = estimated error.

|| | | \

MS Name/IP address Stratum Poll Reach LastRx Last sample

===============================================================================

^* admin 10 6 17 25 -12us[-1163us] +/- 379us
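In `chronyc sources`, the source currently used for synchronization carries `*` in the second character of the first column (`^*` for a server). A sketch with a hypothetical function name that extracts that source's name from the human-readable table on stdin, skipping the legend lines, e.g. for a simple health check.

```shell
# Minimal sketch: print the name of the source marked '*' (current best) in
# `chronyc sources` output on stdin. Exits 1 when no source is selected.
selected_ntp_source() {
  awk '
    # Data lines start with a mode char (^ server, = peer, # local clock)
    # followed by the state char; "*" means current best.
    $1 ~ /^[=#^]/ && substr($1, 2, 1) == "*" { print $2; found = 1 }
    END { exit found ? 0 : 1 }
  '
}
```

Usage: `chronyc sources | selected_ntp_source || echo "no NTP source selected"`.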

# [admin] Check which clients use this server as their NTP server

root@admin:~# chronyc clients

Hostname NTP Drop Int IntL Last Cmd Drop Int Last

===============================================================================

k8s-node1 5 0 4 - 11 0 0 - -

k8s-node2 4 0 1 - 9 0 0 - -

DNS Server - Client

[admin] DNS server (bind) setup: disable DNS management in NetworkManager

# Install bind

root@admin:~# dnf install -y bind bind-utils

Rocky Linux 10 - BaseOS 2.3 MB/s | 15 MB 00:06

Rocky Linux 10 - AppStream 413 kB/s | 2.2 MB 00:05

Rocky Linux 10 - Extras 1.2 kB/s | 6.2 kB 00:05

Package bind-utils-32:9.18.33-4.el10_0.aarch64 is already installed.

Dependencies resolved.

=============================================================================================================================================================================================

Package Architecture Version Repository Size

=============================================================================================================================================================================================

Installing:

bind aarch64 32:9.18.33-10.el10_1.2 appstream 322 k

Upgrading:

bind-libs aarch64 32:9.18.33-10.el10_1.2 appstream 1.2 M

bind-license noarch 32:9.18.33-10.el10_1.2 appstream 13 k

bind-utils aarch64 32:9.18.33-10.el10_1.2 appstream 233 k

crypto-policies noarch 20250905-2.gitc7eb7b2.el10_1.1 baseos 94 k

crypto-policies-scripts noarch 20250905-2.gitc7eb7b2.el10_1.1 baseos 134 k

openssl aarch64 1:3.5.1-7.el10_1 baseos 1.2 M

openssl-libs aarch64 1:3.5.1-7.el10_1 baseos 2.1 M

Installing dependencies:

openssl-fips-provider aarch64 1:3.5.1-7.el10_1 baseos 717 k

Installing weak dependencies:

bind-dnssec-utils aarch64 32:9.18.33-10.el10_1.2 appstream 150 k

Transaction Summary

=============================================================================================================================================================================================

Install 3 Packages

Upgrade 7 Packages

Total download size: 6.2 M

Downloading Packages:

(1/10): bind-dnssec-utils-9.18.33-10.el10_1.2.aarch64.rpm 65 kB/s | 150 kB 00:02

(2/10): openssl-fips-provider-3.5.1-7.el10_1.aarch64.rpm 301 kB/s | 717 kB 00:02

(3/10): crypto-policies-20250905-2.gitc7eb7b2.el10_1.1.noarch.rpm 1.0 MB/s | 94 kB 00:00

(4/10): crypto-policies-scripts-20250905-2.gitc7eb7b2.el10_1.1.noarch.rpm 2.5 MB/s | 134 kB 00:00

(5/10): bind-9.18.33-10.el10_1.2.aarch64.rpm 132 kB/s | 322 kB 00:02

(6/10): openssl-3.5.1-7.el10_1.aarch64.rpm 9.8 MB/s | 1.2 MB 00:00

(7/10): openssl-libs-3.5.1-7.el10_1.aarch64.rpm 11 MB/s | 2.1 MB 00:00

(8/10): bind-license-9.18.33-10.el10_1.2.noarch.rpm 81 kB/s | 13 kB 00:00

(9/10): bind-utils-9.18.33-10.el10_1.2.aarch64.rpm 602 kB/s | 233 kB 00:00

(10/10): bind-libs-9.18.33-10.el10_1.2.aarch64.rpm 1.7 MB/s | 1.2 MB 00:00

---------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------

Total 984 kB/s | 6.2 MB 00:06

Running transaction check

Transaction check succeeded.

Running transaction test

Transaction test succeeded.

Running transaction

Preparing : 1/1

Running scriptlet: crypto-policies-20250905-2.gitc7eb7b2.el10_1.1.noarch 1/17

Upgrading : crypto-policies-20250905-2.gitc7eb7b2.el10_1.1.noarch 1/17

Running scriptlet: crypto-policies-20250905-2.gitc7eb7b2.el10_1.1.noarch 1/17

Upgrading : openssl-libs-1:3.5.1-7.el10_1.aarch64 2/17

Installing : openssl-fips-provider-1:3.5.1-7.el10_1.aarch64 3/17

Upgrading : bind-license-32:9.18.33-10.el10_1.2.noarch 4/17

Upgrading : bind-libs-32:9.18.33-10.el10_1.2.aarch64 5/17

Upgrading : bind-utils-32:9.18.33-10.el10_1.2.aarch64 6/17

Installing : bind-dnssec-utils-32:9.18.33-10.el10_1.2.aarch64 7/17

Running scriptlet: bind-32:9.18.33-10.el10_1.2.aarch64 8/17

Installing : bind-32:9.18.33-10.el10_1.2.aarch64 8/17

warning: /etc/named.conf created as /etc/named.conf.rpmnew

Running scriptlet: bind-32:9.18.33-10.el10_1.2.aarch64 8/17

Upgrading : openssl-1:3.5.1-7.el10_1.aarch64 9/17

Upgrading : crypto-policies-scripts-20250905-2.gitc7eb7b2.el10_1.1.noarch 10/17

Cleanup : crypto-policies-scripts-20250214-1.gitfd9b9b9.el10_0.1.noarch 11/17

Cleanup : openssl-1:3.2.2-16.el10_0.4.aarch64 12/17

Cleanup : bind-utils-32:9.18.33-4.el10_0.aarch64 13/17

Cleanup : bind-libs-32:9.18.33-4.el10_0.aarch64 14/17

Cleanup : openssl-libs-1:3.2.2-16.el10_0.4.aarch64 15/17

Cleanup : crypto-policies-20250214-1.gitfd9b9b9.el10_0.1.noarch 16/17

Cleanup : bind-license-32:9.18.33-4.el10_0.noarch 17/17

Running scriptlet: crypto-policies-scripts-20250905-2.gitc7eb7b2.el10_1.1.noarch 17/17

Running scriptlet: bind-license-32:9.18.33-4.el10_0.noarch 17/17

Upgraded:

bind-libs-32:9.18.33-10.el10_1.2.aarch64 bind-license-32:9.18.33-10.el10_1.2.noarch bind-utils-32:9.18.33-10.el10_1.2.aarch64

crypto-policies-20250905-2.gitc7eb7b2.el10_1.1.noarch crypto-policies-scripts-20250905-2.gitc7eb7b2.el10_1.1.noarch openssl-1:3.5.1-7.el10_1.aarch64

openssl-libs-1:3.5.1-7.el10_1.aarch64

Installed:

bind-32:9.18.33-10.el10_1.2.aarch64 bind-dnssec-utils-32:9.18.33-10.el10_1.2.aarch64 openssl-fips-provider-1:3.5.1-7.el10_1.aarch64

Complete!

# Configure named.conf

root@admin:~# cat <<EOF > /etc/named.conf

options {

listen-on port 53 { any; };

listen-on-v6 port 53 { ::1; };

directory "/var/named";

dump-file "/var/named/data/cache_dump.db";

statistics-file "/var/named/data/named_stats.txt";

memstatistics-file "/var/named/data/named_mem_stats.txt";

secroots-file "/var/named/data/named.secroots";

recursing-file "/var/named/data/named.recursing";

allow-query { 127.0.0.1; 192.168.10.0/24; };

allow-recursion { 127.0.0.1; 192.168.10.0/24; };

forwarders {

168.126.63.1;

8.8.8.8;

};

recursion yes;

};

EOF
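Both `allow-query`/`allow-recursion` here and chrony's `allow 192.168.10.0/24` come down to the same test: does the client address fall inside a CIDR block? A pure-bash sketch of that check (hypothetical function names), comparing the network bits of the two addresses under the prefix mask.

```shell
# Minimal sketch: IPv4 CIDR membership using bash arithmetic only.

# Convert a dotted-quad IPv4 address to a 32-bit integer.
ip_to_int() {
  local a b c d
  IFS=. read -r a b c d <<< "$1"
  # 10# forces base 10 so octets like "010" are not read as octal.
  echo $(( (10#$a << 24) | (10#$b << 16) | (10#$c << 8) | 10#$d ))
}

# in_cidr IP NET/BITS -> exit 0 if IP is inside the block.
in_cidr() {
  local ip="$1" net="${2%/*}" bits="${2#*/}"
  local mask=$(( (0xFFFFFFFF << (32 - bits)) & 0xFFFFFFFF ))
  (( ($(ip_to_int "$ip") & mask) == ($(ip_to_int "$net") & mask) ))
}
```

Usage: `in_cidr 192.168.10.11 192.168.10.0/24 && echo allowed` prints `allowed`, matching k8s-node1's access to the DNS and NTP servers.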

# Check for syntax errors (no output means OK)

root@admin:~# named-checkconf /etc/named.conf

# Enable and start the service

root@admin:~# systemctl enable --now named

Created symlink '/etc/systemd/system/multi-user.target.wants/named.service' → '/usr/lib/systemd/system/named.service'.

# DNS settings for the DMZ server itself (use itself as the resolver)

root@admin:~# echo "nameserver 192.168.10.10" > /etc/resolv.conf

# Verify

root@admin:~# dig +short google.com @192.168.10.10

172.217.221.138

172.217.221.100

172.217.221.101

172.217.221.102

172.217.221.139

172.217.221.113

root@admin:~# dig +short google.com

172.217.221.138

172.217.221.139

172.217.221.102

172.217.221.101

172.217.221.100

172.217.221.113

# Disable DNS management in NetworkManager: otherwise NetworkManager overwrites /etc/resolv.conf with its own settings when the admin server reboots.

root@admin:~# cat /etc/NetworkManager/conf.d/99-dns-none.conf

cat: /etc/NetworkManager/conf.d/99-dns-none.conf: No such file or directory

root@admin:~# cat << EOF > /etc/NetworkManager/conf.d/99-dns-none.conf

[main]

dns=none

EOF

root@admin:~# systemctl restart NetworkManager

[k8s-node] DNS client setup: disable DNS management in NetworkManager

# Disable DNS management in NetworkManager

root@k8s-node1:~# cat /etc/NetworkManager/conf.d/99-dns-none.conf

cat: /etc/NetworkManager/conf.d/99-dns-none.conf: No such file or directory

root@k8s-node1:~# cat << EOF > /etc/NetworkManager/conf.d/99-dns-none.conf

[main]

dns=none

EOF

root@k8s-node1:~# systemctl restart NetworkManager

# Set the DNS server

root@k8s-node1:~# echo "nameserver 192.168.10.10" > /etc/resolv.conf

# Verify

root@k8s-node1:~# dig +short google.com @192.168.10.10

172.217.221.113

172.217.221.139

172.217.221.102

172.217.221.101

172.217.221.100

172.217.221.138

root@k8s-node1:~# dig +short google.com

172.217.221.138

172.217.221.101

172.217.221.100

172.217.221.102

172.217.221.139

172.217.221.113
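The node-side DNS steps (a NetworkManager drop-in with `dns=none`, then a static resolv.conf) can be bundled into one function. A sketch with a hypothetical function name that writes both files under a root prefix (`/` on a real node, a temporary directory for a dry run). It refuses more than three nameservers because the glibc resolver only uses the first three (MAXNS) listed in resolv.conf.

```shell
# Minimal sketch: write the NetworkManager drop-in and resolv.conf under ROOT.
configure_node_dns() {
  local root="$1"; shift
  if (( $# == 0 || $# > 3 )); then
    echo "usage: configure_node_dns ROOT NS1 [NS2 [NS3]]" >&2
    return 1
  fi
  # Stop NetworkManager from rewriting resolv.conf.
  mkdir -p "$root/etc/NetworkManager/conf.d"
  printf '[main]\ndns=none\n' > "$root/etc/NetworkManager/conf.d/99-dns-none.conf"
  # Write one nameserver line per argument, replacing any old content.
  local ns
  : > "$root/etc/resolv.conf"
  for ns in "$@"; do
    printf 'nameserver %s\n' "$ns" >> "$root/etc/resolv.conf"
  done
}
```

Usage on a node: `configure_node_dns / 192.168.10.10 && systemctl restart NetworkManager`.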

Local (Mirror) YUM/DNF Repository (removed at the end of this exercise so it does not duplicate the setup done later by kubespray offline)

[admin] Linux package repository: reposync + createrepo + nginx

# Install packages

root@admin:~# dnf install -y dnf-plugins-core createrepo nginx

Last metadata expiration check: 0:09:22 ago on Sun 15 Feb 2026 01:25:46 AM KST.

Package dnf-plugins-core-4.7.0-8.el10.noarch is already installed.

Dependencies resolved.

=============================================================================================================================================================================================

Package Architecture Version Repository Size

=============================================================================================================================================================================================

Installing:

createrepo_c aarch64 1.1.2-4.el10 appstream 75 k

nginx aarch64 2:1.26.3-1.el10 appstream 33 k

Upgrading:

dnf-plugins-core noarch 4.7.0-9.el10 baseos 43 k

python3-dnf-plugins-core noarch 4.7.0-9.el10 baseos 315 k

yum-utils noarch 4.7.0-9.el10 baseos 34 k

Installing dependencies:

createrepo_c-libs aarch64 1.1.2-4.el10 appstream 103 k

nginx-core aarch64 2:1.26.3-1.el10 appstream 652 k

nginx-filesystem noarch 2:1.26.3-1.el10 appstream 11 k

rocky-logos-httpd noarch 100.4-7.el10 appstream 24 k

Transaction Summary

=============================================================================================================================================================================================

Install 6 Packages

Upgrade 3 Packages

Total download size: 1.3 M

Downloading Packages:

(1/9): nginx-1.26.3-1.el10.aarch64.rpm 44 kB/s | 33 kB 00:00

(2/9): createrepo_c-1.1.2-4.el10.aarch64.rpm 99 kB/s | 75 kB 00:00

(3/9): createrepo_c-libs-1.1.2-4.el10.aarch64.rpm 131 kB/s | 103 kB 00:00

(4/9): nginx-filesystem-1.26.3-1.el10.noarch.rpm 177 kB/s | 11 kB 00:00

(5/9): rocky-logos-httpd-100.4-7.el10.noarch.rpm 252 kB/s | 24 kB 00:00

(6/9): nginx-core-1.26.3-1.el10.aarch64.rpm 2.9 MB/s | 652 kB 00:00

(7/9): yum-utils-4.7.0-9.el10.noarch.rpm 120 kB/s | 34 kB 00:00

(8/9): dnf-plugins-core-4.7.0-9.el10.noarch.rpm 100 kB/s | 43 kB 00:00

(9/9): python3-dnf-plugins-core-4.7.0-9.el10.noarch.rpm 708 kB/s | 315 kB 00:00

---------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------

Total 436 kB/s | 1.3 MB 00:02

Running transaction check

Transaction check succeeded.

Running transaction test

Transaction test succeeded.

Running transaction

Preparing : 1/1

Running scriptlet: nginx-filesystem-2:1.26.3-1.el10.noarch 1/12

Installing : nginx-filesystem-2:1.26.3-1.el10.noarch 1/12

Installing : nginx-core-2:1.26.3-1.el10.aarch64 2/12

Upgrading : python3-dnf-plugins-core-4.7.0-9.el10.noarch 3/12

Upgrading : dnf-plugins-core-4.7.0-9.el10.noarch 4/12

Installing : rocky-logos-httpd-100.4-7.el10.noarch 5/12

Installing : createrepo_c-libs-1.1.2-4.el10.aarch64 6/12

Installing : createrepo_c-1.1.2-4.el10.aarch64 7/12

Installing : nginx-2:1.26.3-1.el10.aarch64 8/12

Running scriptlet: nginx-2:1.26.3-1.el10.aarch64 8/12

Upgrading : yum-utils-4.7.0-9.el10.noarch 9/12

Cleanup : yum-utils-4.7.0-8.el10.noarch 10/12

Cleanup : dnf-plugins-core-4.7.0-8.el10.noarch 11/12

Cleanup : python3-dnf-plugins-core-4.7.0-8.el10.noarch 12/12

Running scriptlet: python3-dnf-plugins-core-4.7.0-8.el10.noarch 12/12

Upgraded:

dnf-plugins-core-4.7.0-9.el10.noarch python3-dnf-plugins-core-4.7.0-9.el10.noarch yum-utils-4.7.0-9.el10.noarch

Installed:

createrepo_c-1.1.2-4.el10.aarch64 createrepo_c-libs-1.1.2-4.el10.aarch64 nginx-2:1.26.3-1.el10.aarch64 nginx-core-2:1.26.3-1.el10.aarch64 nginx-filesystem-2:1.26.3-1.el10.noarch

rocky-logos-httpd-100.4-7.el10.noarch

Complete!

# Sync packages (reposync): download the packages of the external repositories (BaseOS, AppStream, etc.) into a local directory.

## Create the mirror directory

root@admin:~# mkdir -p /data/repos/rocky/10

root@admin:~# cd /data/repos/rocky/10

root@admin:/data/repos/rocky/10# dnf repolist

repo id repo name

appstream Rocky Linux 10 - AppStream

baseos Rocky Linux 10 - BaseOS

extras Rocky Linux 10 - Extras

## baseos

root@admin:/data/repos/rocky/10# dnf reposync --repoid=baseos --download-metadata -p /data/repos/rocky/10

...

(1466/1474): xz-5.6.2-4.el10_0.aarch64.rpm 6.3 MB/s | 476 kB 00:00

(1467/1474): xz-libs-5.6.2-4.el10_0.aarch64.rpm 1.7 MB/s | 109 kB 00:00

(1468/1474): yum-4.20.0-18.el10.rocky.0.1.noarch.rpm 2.1 MB/s | 89 kB 00:00

(1469/1474): yum-utils-4.7.0-9.el10.noarch.rpm 872 kB/s | 34 kB 00:00

(1470/1474): zlib-ng-compat-2.2.3-2.el10.aarch64.rpm 1.6 MB/s | 64 kB 00:00

(1471/1474): zip-3.0-45.el10.aarch64.rpm 4.8 MB/s | 265 kB 00:00

(1472/1474): zlib-ng-compat-2.2.3-3.el10_1.aarch64.rpm 1.2 MB/s | 63 kB 00:00

(1473/1474): zstd-1.5.5-9.el10.aarch64.rpm 4.4 MB/s | 453 kB 00:00

(1474/1474): zsh-5.9-15.el10.aarch64.rpm 25 MB/s | 3.3 MB 00:00

## (Note) Inspect the metadata: the files that act as the core "brain" of a YUM/DNF repository

root@admin:/data/repos/rocky/10# ls -l /data/repos/rocky/10/baseos/repodata/

total 30836

-rw-r--r--. 1 root root 62360 Feb 15 01:37 0fb567b1f4c5f9bb81eed3bafc9f40b252558508fcd362a7fdd6b5e1f4c85e52-comps-BaseOS.aarch64.xml.xz

-rw-r--r--. 1 root root 10561544 Feb 15 01:37 1b34bb7357df23636e2683908e763013b4918a245739769642ebf900f077201d-primary.sqlite.xz

-rw-r--r--. 1 root root 343464 Feb 15 01:37 1de704afeee6d14017ee0a20de05455359ebcb291b70b5fd93020b1f1df76b23-other.sqlite.xz

-rw-r--r--. 1 root root 1631654 Feb 15 01:37 3a1d90c1347a5d6d179d8a779da81b8326d1ca1a7111cfc4bf51d7cef9f831f5-filelists.xml.gz

-rw-r--r--. 1 root root 1082008 Feb 15 01:37 62bf9ca6e90e9fbaf53c10eeb8b08b9d8d2cd0d2ebb04d6e488e9526a19c9982-filelists.sqlite.xz

-rw-r--r--. 1 root root 1624517 Feb 15 01:37 9027e315287283bc4e95bd862e0e09012e4e4aa8e93916708e955731d5462f6d-other.xml.gz

-rw-r--r--. 1 root root 308363 Feb 15 01:37 a55eb7089d714a338318e5acf8e9ff8a682433816e0608a3e0eeefc230153a0e-comps-BaseOS.aarch64.xml

-rw-r--r--. 1 root root 113999 Feb 15 01:37 d5c18881b65c04a309e07af98222226346b5405b7f5b3bb2a4e9a6b9344feb10-updateinfo.xml.gz

-rw-r--r--. 1 root root 15820685 Feb 15 01:37 f49f5be57837217b638f20dbb776b3b1dd5e92daaf3bb0e20c67abf38d2c5e40-primary.xml.gz

-rw-r--r--. 1 root root 4449 Feb 15 01:37 repomd.xml

# repomd.xml is the repository's master index: it records the location and checksum (hash) of every other metadata file, so it is the first file a client downloads.
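Since repomd.xml points at every other metadata file, listing its entries shows what a client will fetch next. A sketch with a hypothetical function name that prints each metadata type and its `location href` from a repomd.xml on stdin. It is line-based awk parsing that assumes one tag per line, as createrepo-style repomd.xml files are usually laid out; a real XML parser is the safer choice for anything beyond a quick look.

```shell
# Minimal sketch: print "type href" for each <data> entry in repomd.xml (stdin).
repomd_entries() {
  awk '
    # <data type="primary">  -> remember the type (12 chars before the value)
    match($0, /<data type="[^"]+"/) {
      t = substr($0, RSTART + 12, RLENGTH - 13)
    }
    # <location href="repodata/..."/>  -> print it with the current type
    match($0, /<location href="[^"]+"/) && t != "" {
      print t, substr($0, RSTART + 16, RLENGTH - 17)
      t = ""
    }
  '
}
```

Usage: `repomd_entries < /data/repos/rocky/10/baseos/repodata/repomd.xml`.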

## appstream


root@admin:/data/repos/rocky/10# dnf reposync --repoid=appstream --download-metadata -p /data/repos/rocky/10

...

(5274/5279): zlib-ng-compat-devel-2.2.3-2.el10.aarch64.rpm 556 kB/s | 38 kB 00:00

(5275/5279): yggdrasil-0.4.8-2.el10.aarch64.rpm 19 MB/s | 5.2 MB 00:00

(5276/5279): zlib-ng-compat-devel-2.2.3-3.el10_1.aarch64.rpm 343 kB/s | 36 kB 00:00

(5277/5279): zram-generator-1.1.2-14.el10.aarch64.rpm 4.8 MB/s | 399 kB 00:00

(5278/5279): zziplib-0.13.78-2.el10.aarch64.rpm 1.8 MB/s | 89 kB 00:00

(5279/5279): zziplib-utils-0.13.78-2.el10.aarch64.rpm 990 kB/s | 45 kB 00:00

root@admin:/data/repos/rocky/10# du -sh /data/repos/rocky/10/appstream/

15G /data/repos/rocky/10/appstream/

## extras

root@admin:/data/repos/rocky/10# dnf reposync --repoid=extras --download-metadata -p /data/repos/rocky/10

...

(22/26): rpmfusion-free-release-10-1.noarch.rpm 75 kB/s | 9.3 kB 00:00

(23/26): rpmfusion-free-release-tainted-10-1.noarch.rpm 55 kB/s | 7.3 kB 00:00

(24/26): update-motd-0.1-2.el10.noarch.rpm 90 kB/s | 10 kB 00:00

(25/26): rpaste-0.4.1-1.el10.aarch64.rpm 1.2 MB/s | 2.6 MB 00:02

(26/26): rocky-backgrounds-extras-100.4-7.el10.noarch.rpm 20 MB/s | 63 MB 00:03
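The three reposync runs differ only in `--repoid`. A sketch that loops over the repo IDs used in this lab; the function name and the `DRY_RUN` switch (which only prints the commands) are hypothetical.

```shell
# Minimal sketch: mirror several repos with dnf reposync in one loop.
MIRROR_DIR="${MIRROR_DIR:-/data/repos/rocky/10}"
REPOS=(baseos appstream extras)

sync_repos() {
  local repo
  for repo in "${REPOS[@]}"; do
    local cmd=(dnf reposync --repoid="$repo" --download-metadata -p "$MIRROR_DIR")
    if [[ "${DRY_RUN:-0}" == 1 ]]; then
      echo "${cmd[*]}"        # print only
    else
      "${cmd[@]}" || return 1 # stop at the first failed repo
    fi
  done
}
```

Because `--download-metadata` also copies the upstream repodata, createrepo_c is only needed if you later add your own RPMs to a directory (e.g. `createrepo_c --update <dir>`). dnf reposync also offers `--newest-only` to fetch only the latest package versions, which may shrink the 15G AppStream mirror; check `dnf reposync --help` on your version.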

# Web server for internal distribution (nginx)

root@admin:/data/repos/rocky/10# cat <<EOF > /etc/nginx/conf.d/repos.conf

server {

listen 80;

server_name repo-server;

location /rocky/10/ {

autoindex on; # show directory listings

autoindex_exact_size off; # show file sizes in human-readable KB/MB/GB

autoindex_localtime on; # show times in the server's local time

root /data/repos;

}

}

EOF
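With `root /data/repos;` inside `location /rocky/10/`, nginx appends the whole request URI (location prefix included) to the root, which is why the files live under /data/repos/rocky/10. The `alias` directive instead replaces the matched prefix. A sketch of both mappings as plain string operations; the function names are hypothetical and the helpers ignore details such as URI decoding.

```shell
# Minimal sketch: how nginx turns a request URI into a file path.

# root: file = root + full URI
nginx_root_path() {
  local root="${1%/}" uri="$2"
  printf '%s%s\n' "$root" "$uri"
}

# alias: file = alias + (URI with the matched location prefix removed)
nginx_alias_path() {
  local alias_dir="$1" location="$2" uri="$3"
  printf '%s%s\n' "$alias_dir" "${uri#"$location"}"
}
```

Both `nginx_root_path /data/repos /rocky/10/baseos/repodata/repomd.xml` and `nginx_alias_path /data/repos/rocky/10/ /rocky/10/ /rocky/10/baseos/repodata/repomd.xml` resolve to the same file.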

root@admin:/data/repos/rocky/10# systemctl enable --now nginx

Created symlink '/etc/systemd/system/multi-user.target.wants/nginx.service' → '/usr/lib/systemd/system/nginx.service'.

root@admin:/data/repos/rocky/10# systemctl status nginx.service --no-pager

● nginx.service - The nginx HTTP and reverse proxy server

Loaded: loaded (/usr/lib/systemd/system/nginx.service; enabled; preset: disabled)

Active: active (running) since Sun 2026-02-15 01:52:21 KST; 10s ago

Invocation: 68a15a1b87cf4bf9a2b4846e6d3713db

Process: 6027 ExecStartPre=/usr/bin/rm -f /run/nginx.pid (code=exited, status=0/SUCCESS)

Process: 6029 ExecStartPre=/usr/sbin/nginx -t (code=exited, status=0/SUCCESS)

Process: 6031 ExecStart=/usr/sbin/nginx (code=exited, status=0/SUCCESS)

Main PID: 6033 (nginx)

Tasks: 5 (limit: 12336)

Memory: 5.9M (peak: 6.8M)

CPU: 36ms

CGroup: /system.slice/nginx.service

├─6033 "nginx: master process /usr/sbin/nginx"

├─6034 "nginx: worker process"

├─6035 "nginx: worker process"

├─6036 "nginx: worker process"

└─6037 "nginx: worker process"

Feb 15 01:52:21 admin systemd[1]: Starting nginx.service - The nginx HTTP and reverse proxy server...

Feb 15 01:52:21 admin nginx[6029]: nginx: the configuration file /etc/nginx/nginx.conf syntax is ok

Feb 15 01:52:21 admin nginx[6029]: nginx: configuration file /etc/nginx/nginx.conf test is successful

Feb 15 01:52:21 admin systemd[1]: Started nginx.service - The nginx HTTP and reverse proxy server.

root@admin:/data/repos/rocky/10# ss -tnlp | grep nginx

LISTEN 0 511 0.0.0.0:80 0.0.0.0:* users:(("nginx",pid=6037,fd=6),("nginx",pid=6036,fd=6),("nginx",pid=6035,fd=6),("nginx",pid=6034,fd=6),("nginx",pid=6033,fd=6))

LISTEN 0 511 [::]:80 [::]:* users:(("nginx",pid=6037,fd=7),("nginx",pid=6036,fd=7),("nginx",pid=6035,fd=7),("nginx",pid=6034,fd=7),("nginx",pid=6033,fd=7))

# Access test

root@admin:/data/repos/rocky/10# curl http://192.168.10.10/rocky/10/

<html>

<head><title>Index of /rocky/10/</title></head>

<body>

<h1>Index of /rocky/10/</h1><hr><pre><a href="../">../</a>

<a href="appstream/">appstream/</a> 15-Feb-2026 01:43 -

<a href="baseos/">baseos/</a> 15-Feb-2026 01:37 -

<a href="extras/">extras/</a> 15-Feb-2026 01:51 -

</pre><hr></body>

</html>

[k8s-node] Point an internal server with no Internet access at the admin Linux package repository

# Back up the existing repo settings

root@k8s-node1:~# tree /etc/yum.repos.d/

/etc/yum.repos.d/

├── rocky-addons.repo

├── rocky-devel.repo

├── rocky-extras.repo

└── rocky.repo

1 directory, 4 files

root@k8s-node1:~# mkdir /etc/yum.repos.d/backup

root@k8s-node1:~# mv /etc/yum.repos.d/*.repo /etc/yum.repos.d/backup/

# Create the local repo file: set the server IP to your repo server's IP

## DNF clients can reach a repository over HTTP, HTTPS, FTP, or file (direct local filesystem access).

root@k8s-node1:~# cat <<EOF > /etc/yum.repos.d/internal-rocky.repo

[internal-baseos]

name=Internal Rocky 10 BaseOS

baseurl=http://192.168.10.10/rocky/10/baseos

enabled=1

gpgcheck=0

[internal-appstream]

name=Internal Rocky 10 AppStream

baseurl=http://192.168.10.10/rocky/10/appstream

enabled=1

gpgcheck=0

[internal-extras]

name=Internal Rocky 10 Extras

baseurl=http://192.168.10.10/rocky/10/extras

enabled=1

gpgcheck=0

EOF
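The three repo sections above follow one pattern, so the file can be generated from a list of repo IDs. A sketch with a hypothetical function name; `REPO_HOST` defaults to the admin server used here. It keeps the author's `gpgcheck=0`; since reposync copies the signed RPMs unchanged, switching to `gpgcheck=1` with the Rocky key under /etc/pki/rpm-gpg/ should also work, but verify the key file name on your nodes.

```shell
# Minimal sketch: generate the internal .repo file from repo IDs.
REPO_HOST="${REPO_HOST:-192.168.10.10}"

write_internal_repo() {
  local dest="$1"; shift
  local id name
  : > "$dest"                      # start from an empty file
  for id in "$@"; do
    case "$id" in                  # display names used in this lab
      baseos)    name=BaseOS ;;
      appstream) name=AppStream ;;
      extras)    name=Extras ;;
      *)         name="$id" ;;
    esac
    cat >> "$dest" <<EOF
[internal-${id}]
name=Internal Rocky 10 ${name}
baseurl=http://${REPO_HOST}/rocky/10/${id}
enabled=1
gpgcheck=0

EOF
  done
}
```

Usage: `write_internal_repo /etc/yum.repos.d/internal-rocky.repo baseos appstream extras`.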

# Verify the internal repo works: clear the cache on the client and load the repo list.

root@k8s-node1:~# dnf clean all

0 files removed

root@k8s-node1:~# dnf repolist

repo id repo name

internal-appstream Internal Rocky 10 AppStream

internal-baseos Internal Rocky 10 BaseOS

internal-extras Internal Rocky 10 Extras

root@k8s-node1:~# dnf makecache

Internal Rocky 10 BaseOS 66 MB/s | 15 MB 00:00

Internal Rocky 10 AppStream 16 MB/s | 2.2 MB 00:00

Internal Rocky 10 Extras 38 kB/s | 6.2 kB 00:00

Metadata cache created.

# Verify that package installation works

root@k8s-node1:~# dnf install -y nfs-utils

Last metadata expiration check: 0:00:23 ago on Sun 15 Feb 2026 01:54:39 AM KST.

Package nfs-utils-1:2.8.2-3.el10.aarch64 is already installed.

Dependencies resolved.

===============================================================================================

Package Architecture Version Repository Size

===============================================================================================

Upgrading:

libnfsidmap aarch64 1:2.8.3-0.el10 internal-baseos 61 k

nfs-utils aarch64 1:2.8.3-0.el10 internal-baseos 476 k

Transaction Summary

===============================================================================================

Upgrade 2 Packages

Total download size: 537 k

Downloading Packages:

(1/2): libnfsidmap-2.8.3-0.el10.aarch64.rpm 5.1 MB/s | 61 kB 00:00

(2/2): nfs-utils-2.8.3-0.el10.aarch64.rpm 30 MB/s | 476 kB 00:00

-----------------------------------------------------------------------------------------------

Total 31 MB/s | 537 kB 00:00

Running transaction check

Transaction check succeeded.

Running transaction test

Transaction test succeeded.

Running transaction

Preparing : 1/1

Upgrading : libnfsidmap-1:2.8.3-0.el10.aarch64 1/4

Running scriptlet: nfs-utils-1:2.8.3-0.el10.aarch64 2/4

Upgrading : nfs-utils-1:2.8.3-0.el10.aarch64 2/4

Running scriptlet: nfs-utils-1:2.8.3-0.el10.aarch64 2/4

Running scriptlet: nfs-utils-1:2.8.2-3.el10.aarch64 3/4

Cleanup : nfs-utils-1:2.8.2-3.el10.aarch64 3/4

Running scriptlet: nfs-utils-1:2.8.2-3.el10.aarch64 3/4

Cleanup : libnfsidmap-1:2.8.2-3.el10.aarch64 4/4

Running scriptlet: libnfsidmap-1:2.8.2-3.el10.aarch64 4/4

Upgraded:

libnfsidmap-1:2.8.3-0.el10.aarch64 nfs-utils-1:2.8.3-0.el10.aarch64

Complete!

## Check which repo the package came from in the package info

root@k8s-node1:~# dnf info nfs-utils | grep -i repo

Repository : @System

From repo : internal-baseos
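When checking several packages, the `From repo` field can be pulled out of `dnf info` output with a small filter. A minimal sketch; `pkg_origin_repo` is a hypothetical name, and it assumes the `From repo : <id>` line format shown above (dnf typically prints this field only for installed packages).

```shell
#!/usr/bin/env bash
# Hypothetical filter: read `dnf info` output on stdin and print the value of "From repo".
# Packages that are only available (not installed) usually lack this field, so nothing is printed.
pkg_origin_repo() {
  sed -n 's/^From repo[[:space:]]*:[[:space:]]*//p'
}

# Usage on a node, e.g. to confirm both upgraded packages came from the internal mirror:
# for p in nfs-utils libnfsidmap; do
#   printf '%s -> %s\n' "$p" "$(dnf info "$p" | pkg_origin_repo)"
# done
```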

[admin] Clean up for the next exercise

root@admin:/data/repos/rocky/10# systemctl disable --now nginx && dnf remove -y nginx

Removed '/etc/systemd/system/multi-user.target.wants/nginx.service'.

Dependencies resolved.

===============================================================================================================================================================================================

Package Architecture Version Repository Size

===============================================================================================================================================================================================

Removing:

nginx aarch64 2:1.26.3-1.el10 @appstream 120 k

Removing unused dependencies:

nginx-core aarch64 2:1.26.3-1.el10 @appstream 1.8 M

nginx-filesystem noarch 2:1.26.3-1.el10 @appstream 141

rocky-logos-httpd noarch 100.4-7.el10 @appstream 24 k

Transaction Summary

===============================================================================================================================================================================================

Remove 4 Packages

Freed space: 1.9 M

Running transaction check

Transaction check succeeded.

Running transaction test

Transaction test succeeded.

Running transaction

Preparing : 1/1

Running scriptlet: nginx-2:1.26.3-1.el10.aarch64 1/4

Erasing : nginx-2:1.26.3-1.el10.aarch64 1/4

Running scriptlet: nginx-2:1.26.3-1.el10.aarch64 1/4

Erasing : rocky-logos-httpd-100.4-7.el10.noarch 2/4

Erasing : nginx-core-2:1.26.3-1.el10.aarch64 3/4

Erasing : nginx-filesystem-2:1.26.3-1.el10.noarch 4/4

Running scriptlet: nginx-filesystem-2:1.26.3-1.el10.noarch 4/4

Removed:

nginx-2:1.26.3-1.el10.aarch64 nginx-core-2:1.26.3-1.el10.aarch64 nginx-filesystem-2:1.26.3-1.el10.noarch rocky-logos-httpd-100.4-7.el10.noarch

Complete!

Private Container (Image) Registry (removed after the exercise so it does not duplicate the kubespray offline setup)

[admin] Start a Docker Registry (registry) container image store with podman

# Install podman: already installed by default

root@admin:~# dnf info podman | grep repo

From repo : AppStream

# Check podman

root@admin:~# which podman

/usr/bin/podman

root@admin:~# podman --version

podman version 5.4.0

# Pull the Registry image

root@admin:~# podman pull docker.io/library/registry:latest

Trying to pull docker.io/library/registry:latest...

Getting image source signatures

Copying blob a447a5de8f4e done |

Copying blob 92c7580d074a done |

Copying blob 1cc3d49277b7 done |

Copying blob 50b5971fe294 done |

Copying blob 0a52a06d47e0 done |

Copying config 2f5ec5015b done |

Writing manifest to image destination

2f5ec5015badd603680de78accbba6eb3e9146f4d642a7ccef64205e55ac518f

root@admin:~# podman images

REPOSITORY TAG IMAGE ID CREATED SIZE

docker.io/library/registry latest 2f5ec5015bad 2 weeks ago 57.3 MB

# Prepare the Registry data directory

root@admin:~# mkdir -p /data/registry

root@admin:~# chmod 755 /data/registry

# Run the Docker Registry container (defaults, no authentication)

root@admin:~# podman run -d --name local-registry -p 5000:5000 -v /data/registry:/var/lib/registry --restart=always docker.io/library/registry:latest

177c986039f32b024f720c1059dcc8a38931cacd0f49ce7ff901bd1c8314a548

# Verify

root@admin:~# podman ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

177c986039f3 docker.io/library/registry:latest /etc/distribution... 14 seconds ago Up 15 seconds 0.0.0.0:5000->5000/tcp local-registry

root@admin:~# pstree -a

systemd --switched-root --system --deserialize=46 no_timer_check

├─NetworkManager --no-daemon

│ └─3*[{NetworkManager}]

├─VBoxService --pidfile /var/run/vboxadd-service.sh

│ └─8*[{VBoxService}]

├─agetty -o -- \\u --noreset --noclear - linux

├─anacron -s

├─atd -f

├─auditd

│ ├─sedispatch

│ └─2*[{auditd}]

├─chronyd -F 2

├─conmon --api-version 1 -c 177c986039f32b024f720c1059dcc8a38931cacd0f49ce7ff901bd1c8314a548 -u 177c986039f32b024f720c1059dcc8a38931cacd0f49ce7ff901bd1c8314a548 -r /usr/bin/crun -b/va

│ └─registry serve /etc/distribution/config.yml

│ └─6*[{registry}]

├─crond -n

├─dbus-broker-lau --scope system --audit

│ └─dbus-broker --log 4 --controller 9 --machine-id 36e9c95128f6493a896149dc465c48d9 --max-bytes 536870912 --max-fds 4096 --max-matches 131072 --audit

├─fwupd

│ └─5*[{fwupd}]

├─gpg-agent --homedir /var/lib/fwupd/gnupg --use-standard-socket --daemon

│ ├─scdaemon --multi-server --homedir /var/lib/fwupd/gnupg

│ │ └─{scdaemon}

│ └─{gpg-agent}

├─gssproxy -i

│ └─5*[{gssproxy}]

├─irqbalance

│ └─{irqbalance}

├─lsmd -d

├─named -u named -c /etc/named.conf

│ └─13*[{named}]

├─packagekitd

│ └─3*[{packagekitd}]

├─polkitd --no-debug --log-level=err

│ └─3*[{polkitd}]

├─rpcbind -w -f

├─rsyslogd -n

│ └─2*[{rsyslogd}]

├─sshd

├─sshd-session

│ └─sshd-session

│ └─bash

│ └─pstree -a

├─systemd --user

│ └─(sd-pam)

├─systemd-journal

├─systemd-logind

├─systemd-udevd

├─systemd-userdbd

│ ├─systemd-userwor

│ ├─systemd-userwor

│ └─systemd-userwor

├─tuned -Es /usr/sbin/tuned -l -P

│ └─3*[{tuned}]

└─udisksd

└─6*[{udisksd}]

# Verify that the Registry responds

root@admin:~# curl -s http://localhost:5000/v2/_catalog | jq

{

"repositories": []

}
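When the registry is started from a script, the catalog query can race the container startup. Below is a minimal sketch of a retry loop against the registry's `/v2/` endpoint, assuming `curl` is available; the function name and the default retry count are illustrative choices, not part of podman or the registry image.

```shell
#!/usr/bin/env bash
# Hypothetical helper: poll <url>/v2/ until the registry answers with a 2xx status.
# Returns 0 on success, 1 after <tries> failed attempts (1 second apart).
wait_for_registry() {
  local url="$1" tries="${2:-10}" i=1
  while [ "$i" -le "$tries" ]; do
    # -f makes curl fail on HTTP errors; --max-time bounds each attempt
    if curl -fs -o /dev/null --max-time 2 "$url/v2/"; then
      return 0
    fi
    sleep 1
    i=$((i + 1))
  done
  echo "registry at $url did not respond after $tries tries" >&2
  return 1
}

# Usage right after `podman run ... local-registry`:
# wait_for_registry http://localhost:5000 && curl -s http://localhost:5000/v2/_catalog | jq
```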

[admin] Push an image to the Docker Registry

# Pull an image

root@admin:~# podman pull alpine

Resolved "alpine" as an alias (/etc/containers/registries.conf.d/000-shortnames.conf)

Trying to pull docker.io/library/alpine:latest...

Getting image source signatures

Copying blob d8ad8cd72600 done |

Copying config 1ab49c19c5 done |

Writing manifest to image destination

1ab49c19c53ebca95c787b482aeda86d1d681f58cdf19278c476bcaf37d96de1

root@admin:~# cat /etc/containers/registries.conf.d/000-shortnames.conf | grep alpine

"alpine" = "docker.io/library/alpine"

root@admin:~# podman images

REPOSITORY TAG IMAGE ID CREATED SIZE

docker.io/library/registry latest 2f5ec5015bad 2 weeks ago 57.3 MB

docker.io/library/alpine latest 1ab49c19c53e 2 weeks ago 8.99 MB

# tag

root@admin:~# podman tag alpine:latest 192.168.10.10:5000/alpine:1.0

root@admin:~# podman images

REPOSITORY TAG IMAGE ID CREATED SIZE

docker.io/library/registry latest 2f5ec5015bad 2 weeks ago 57.3 MB

192.168.10.10:5000/alpine 1.0 1ab49c19c53e 2 weeks ago 8.99 MB

docker.io/library/alpine latest 1ab49c19c53e 2 weeks ago 8.99 MB

# Push to the private registry: fails!

root@admin:~# podman push 192.168.10.10:5000/alpine:1.0

Getting image source signatures

Error: trying to reuse blob sha256:45f3ea5848e8a25ca27718b640a21ffd8c8745d342a24e1d4ddfc8c449b0a724 at destination: pinging container registry 192.168.10.10:5000: Get "https://192.168.10.10:5000/v2/": http: server gave HTTP response to HTTPS client

# By default, container engines require HTTPS. To test over plain HTTP on the internal network, register the Registry address as an insecure registry.

# (Note) registries.conf is a containers-common setting, so it applies equally to podman, skopeo, buildah, and others.

root@admin:~# cp /etc/containers/registries.conf /etc/containers/registries.bak

root@admin:~# cat <<EOF >> /etc/containers/registries.conf

[[registry]]

location = "192.168.10.10:5000"

insecure = true

EOF

root@admin:~# grep "^[^#]" /etc/containers/registries.conf

unqualified-search-registries = ["registry.access.redhat.com", "registry.redhat.io", "docker.io"]

short-name-mode = "enforcing"

[[registry]]

location = "192.168.10.10:5000"

insecure = true
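Appending with `cat <<EOF >>` adds another `[[registry]]` table every time the step is rerun, which is at best redundant. Below is a minimal sketch of an idempotent version, assuming the `location = "..."` line format used above; `add_insecure_registry` is a hypothetical name.

```shell
#!/usr/bin/env bash
# Hypothetical helper: append an insecure [[registry]] entry to registries.conf
# only if no entry for the same location exists yet (safe to rerun).
add_insecure_registry() {
  local conf="$1" location="$2"
  if grep -qF "location = \"$location\"" "$conf" 2>/dev/null; then
    echo "already configured: $location"
    return 0
  fi
  printf '\n[[registry]]\nlocation = "%s"\ninsecure = true\n' "$location" >> "$conf"
}

# Usage on admin and each k8s-node (keep the backup step from the lab):
# cp /etc/containers/registries.conf /etc/containers/registries.bak
# add_insecure_registry /etc/containers/registries.conf 192.168.10.10:5000
```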

# Push to the private registry: succeeds!

root@admin:~# podman push 192.168.10.10:5000/alpine:1.0

Getting image source signatures

Copying blob 45f3ea5848e8 done |

Copying config 1ab49c19c5 done |

Writing manifest to image destination

# List the uploaded images and tags

root@admin:~# curl -s 192.168.10.10:5000/v2/_catalog | jq

{

"repositories": [

"alpine"

]

}

root@admin:~# curl -s 192.168.10.10:5000/v2/alpine/tags/list | jq

{

"name": "alpine",

"tags": [

"1.0"

]

}

[k8s-node] Pull the container image

# Configure registries.conf

root@k8s-node1:~# cp /etc/containers/registries.conf /etc/containers/registries.bak

root@k8s-node1:~# cat <<EOF >> /etc/containers/registries.conf

[[registry]]

location = "192.168.10.10:5000"

insecure = true

EOF

root@k8s-node1:~# grep "^[^#]" /etc/containers/registries.conf

unqualified-search-registries = ["registry.access.redhat.com", "registry.redhat.io", "docker.io"]

short-name-mode = "enforcing"

[[registry]]

location = "192.168.10.10:5000"

insecure = true

# Pull the image

root@k8s-node1:~# podman pull 192.168.10.10:5000/alpine:1.0

Trying to pull 192.168.10.10:5000/alpine:1.0...

Getting image source signatures

Copying blob 9268c2c682e1 done |

Copying config 1ab49c19c5 done |

Writing manifest to image destination

1ab49c19c53ebca95c787b482aeda86d1d681f58cdf19278c476bcaf37d96de1

root@k8s-node1:~# podman images

REPOSITORY TAG IMAGE ID CREATED SIZE

192.168.10.10:5000/alpine 1.0 1ab49c19c53e 2 weeks ago 8.98 MB

[admin] Clean up for the next exercise

root@admin:~# podman rm -f local-registry

local-registry

[admin, k8s-node] Restore the original file for the next exercise

root@admin:~# mv /etc/containers/registries.bak /etc/containers/registries.conf

mv: overwrite '/etc/containers/registries.conf'? yes

root@k8s-node1:~# mv /etc/containers/registries.bak /etc/containers/registries.conf

mv: overwrite '/etc/containers/registries.conf'? yes

root@k8s-node2:~# mv /etc/containers/registries.bak /etc/containers/registries.conf

mv: overwrite '/etc/containers/registries.conf'? yes

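Private PyPI (Python Package Index) Mirror (removed after the exercise so it does not duplicate the kubespray offline setup)

[admin] Set up a Python package repository: devpi-server

# Install devpi-server

## devpi-server : PyPI mirror / private package repository server

## devpi-client : uploads and manages packages on the devpi server

## devpi-web : web UI (optional)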

root@admin:~# pip install devpi-server devpi-client devpi-web

...

Building wheels for collected packages: pyramid-chameleon

Building wheel for pyramid-chameleon (pyproject.toml) ... done

Created wheel for pyramid-chameleon: filename=pyramid_chameleon-0.3-py3-none-any.whl size=14231 sha256=c0393e2f98c5189b0125c91cebab83898944328e7783fff3009f1bbacfbba356

Stored in directory: /root/.cache/pip/wheels/22/ef/bd/de2f48264f520e5e093528f6a71ce0b8b593413c78182b950d

Successfully built pyramid-chameleon

Installing collected packages: Whoosh, translationstring, repoze.lru, passlib, zope.interface, zope.deprecation, webob, waitress, venusian, typing-extensions, soupsieve, ruamel.yaml, pyproject_hooks, pygments, pycparser, py, pluggy, platformdirs, plaster, pkginfo, PasteDeploy, packaging-legacy, nh3, lazy, itsdangerous, iniconfig, hupper, h11, docutils, defusedxml, Chameleon, certifi, strictyaml, readme-renderer, plaster-pastedeploy, httpcore, devpi_common, cffi, build, beautifulsoup4, anyio, pyramid, httpx, cmarkgfm, check-manifest, argon2-cffi-bindings, pyramid-chameleon, devpi-client, argon2-cffi, devpi-server, devpi-web

Successfully installed Chameleon-4.6.0 PasteDeploy-3.1.0 Whoosh-2.7.4 anyio-4.12.1 argon2-cffi-25.1.0 argon2-cffi-bindings-25.1.0 beautifulsoup4-4.14.3 build-1.4.0 certifi-2026.1.4 cffi-2.0.0 check-manifest-0.51 cmarkgfm-2025.10.22 defusedxml-0.7.1 devpi-client-7.2.0 devpi-server-6.19.1 devpi-web-5.0.1 devpi_common-4.1.1 docutils-0.22.4 h11-0.16.0 httpcore-1.0.9 httpx-0.28.1 hupper-1.12.1 iniconfig-2.3.0 itsdangerous-2.2.0 lazy-1.6 nh3-0.3.3 packaging-legacy-23.0.post0 passlib-1.7.4 pkginfo-1.12.1.2 plaster-1.1.2 plaster-pastedeploy-1.0.1 platformdirs-4.8.0 pluggy-1.6.0 py-1.11.0 pycparser-3.0 pygments-2.19.2 pyproject_hooks-1.2.0 pyramid-2.0.2 pyramid-chameleon-0.3 readme-renderer-44.0 repoze.lru-0.7 ruamel.yaml-0.19.1 soupsieve-2.8.3 strictyaml-1.7.3 translationstring-1.4 typing-extensions-4.15.0 venusian-3.1.1 waitress-3.0.2 webob-1.8.9 zope.deprecation-6.0 zope.interface-8.2

root@admin:~# pip list | grep devpi

devpi-client 7.2.0

devpi-common 4.1.1

devpi-server 6.19.1

devpi-web 5.0.1

# Create and initialize the server data directory: Initialize new devpi-server instance.

## If --serverdir is not specified, the default is ~/.devpi/server.

root@admin:~# devpi-init --serverdir /data/devpi_data

2026-02-15 02:13:10,380 INFO NOCTX Loading node info from /data/devpi_data/.nodeinfo

2026-02-15 02:13:10,380 INFO NOCTX generated uuid: 35e9b6a4076e42e194ddb36a6683bc39

2026-02-15 02:13:10,381 INFO NOCTX wrote nodeinfo to: /data/devpi_data/.nodeinfo

2026-02-15 02:13:10,384 INFO NOCTX DB: Creating schema

2026-02-15 02:13:10,449 INFO [Wtx-1] setting password for user 'root'

2026-02-15 02:13:10,449 INFO [Wtx-1] created user 'root'

2026-02-15 02:13:10,449 INFO [Wtx-1] created root user

2026-02-15 02:13:10,449 INFO [Wtx-1] created root/pypi index

2026-02-15 02:13:10,455 INFO [Wtx-1] fswriter0: committed at 0

root@admin:~# ls -al /data/devpi_data/

total 28

drwxr-xr-x. 2 root root 60 Feb 15 02:13 .

drwxr-xr-x. 5 root root 53 Feb 15 02:13 ..

-rw-------. 1 root root 72 Feb 15 02:13 .nodeinfo

-rw-r--r--. 1 root root 1 Feb 15 02:13 .serverversion

-rw-r--r--. 1 root root 20480 Feb 15 02:13 .sqlite

# Start the devpi server: --host 0.0.0.0 allows connections from other machines

## For always-on background operation, register it as a systemd service instead of using nohup

root@admin:~# nohup devpi-server --serverdir /data/devpi_data --host 0.0.0.0 --port 3141 > /var/log/devpi.log 2>&1 &

[1] 6803

# Verify

root@admin:~# ss -tnlp | grep devpi-server

LISTEN 0 1024 0.0.0.0:3141 0.0.0.0:* users:(("devpi-server",pid=6803,fd=12))

root@admin:~# tail -f /var/log/devpi.log

2026-02-15 02:13:42,857 INFO [NOTI] [Rtx0] triggering load of initial projectnames for root/pypi

2026-02-15 02:13:42,861 INFO NOCTX devpi-server version: 6.19.1

2026-02-15 02:13:42,861 INFO NOCTX serverdir: /data/devpi_data

2026-02-15 02:13:42,861 INFO NOCTX uuid: 35e9b6a4076e42e194ddb36a6683bc39

2026-02-15 02:13:42,861 INFO NOCTX serving at url: http://0.0.0.0:3141 (might be http://[0.0.0.0]:3141 for IPv6)

2026-02-15 02:13:42,861 INFO NOCTX using 50 threads

2026-02-15 02:13:42,861 INFO NOCTX bug tracker: https://github.com/devpi/devpi/issues

2026-02-15 02:13:42,861 INFO NOCTX Hit Ctrl-C to quit.

2026-02-15 02:13:42,887 INFO Serving on http://0.0.0.0:3141

2026-02-15 02:13:46,774 INFO [IDX] Committing 2500 new documents to search index.

2026-02-15 02:14:04,016 INFO [Wtx0] Processed a total of 741856 projects and queued 741856

2026-02-15 02:14:04,026 INFO [Wtx0] fswriter1: committed at 1

2026-02-15 02:14:04,029 INFO [IDXQ] Thread 'InitialQueueThread' ended

2026-02-15 02:14:04,033 INFO [NOTI] [Rtx1] indexing 'root/pypi' mirror with 741856 projects

2026-02-15 02:14:04,923 INFO [IDX] Indexer queue size ~ 299

2026-02-15 02:14:06,589 INFO [IDX] Committing 2500 new documents to search index.
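The comment before the `nohup` command recommends a systemd service for a long-running devpi-server. A minimal sketch that renders such a unit file, assuming the same `--serverdir`/`--host`/`--port` flags used above; the unit name `devpi.service` and the helper name are hypothetical, and the binary path should be whatever `command -v devpi-server` reports on your host.

```shell
#!/usr/bin/env bash
# Hypothetical helper: render a systemd unit for devpi-server with the flags used in the lab.
write_devpi_unit() {
  local unit_path="$1" devpi_bin="$2" serverdir="$3" port="${4:-3141}"
  cat > "$unit_path" <<EOF
[Unit]
Description=devpi-server (private PyPI mirror)
After=network-online.target
Wants=network-online.target

[Service]
ExecStart=$devpi_bin --serverdir $serverdir --host 0.0.0.0 --port $port
Restart=on-failure

[Install]
WantedBy=multi-user.target
EOF
}

# Usage (stop the nohup instance first so port 3141 is free):
# pkill -f "devpi-server --serverdir /data/devpi_data"
# write_devpi_unit /etc/systemd/system/devpi.service "$(command -v devpi-server)" /data/devpi_data
# systemctl daemon-reload && systemctl enable --now devpi.service
```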

[admin] Upload the required packages to devpi-server

# Connect to the server

root@admin:~# devpi use http://192.168.10.10:3141

Warning: insecure http host, trusted-host will be set for pip

using server: http://192.168.10.10:3141/ (not logged in)

no current index: type 'devpi use -l' to discover indices

/root/.config/pip/pip.conf: no config file exists

/root/.config/uv/uv.toml: no config file exists

/root/.pydistutils.cfg: no config file exists

/root/.buildout/default.cfg: no config file exists

always-set-cfg: no

# Log in (root has no password by default)

root@admin:~# devpi login root --password ""

logged in 'root', credentials valid for 10.00 hours

# Download the packages needed in the air-gapped network in advance

root@admin:~# pip download jmespath netaddr -d /tmp/pypi-packages

Collecting jmespath

Downloading jmespath-1.1.0-py3-none-any.whl.metadata (7.6 kB)

Collecting netaddr

Downloading netaddr-1.3.0-py3-none-any.whl.metadata (5.0 kB)

Downloading jmespath-1.1.0-py3-none-any.whl (20 kB)

Downloading netaddr-1.3.0-py3-none-any.whl (2.3 MB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 2.3/2.3 MB 6.3 MB/s eta 0:00:00

Saved /tmp/pypi-packages/jmespath-1.1.0-py3-none-any.whl

Saved /tmp/pypi-packages/netaddr-1.3.0-py3-none-any.whl

Successfully downloaded jmespath netaddr

root@admin:~# tree /tmp/pypi-packages/

/tmp/pypi-packages/

├── jmespath-1.1.0-py3-none-any.whl

└── netaddr-1.3.0-py3-none-any.whl

1 directory, 2 files

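# Create an index for a team or project (e.g. prod)

## Index chaining (inheritance): with bases=root/pypi, when the server has external connectivity, packages missing from the index are fetched and cached from PyPI automatically; in the air-gapped network, the index can be set up so that internally uploaded files are looked up first.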

root@admin:~# devpi index -c prod bases=root/pypi

http://192.168.10.10:3141/root/prod?no_projects=:

type=stage

bases=root/pypi

volatile=True

acl_upload=root

acl_toxresult_upload=:ANONYMOUS:

mirror_whitelist=

mirror_whitelist_inheritance=intersection

# List the devpi indexes (repositories)

root@admin:~# devpi index -l

root/prod

root/pypi

# Switch to the index (repository) to work with

root@admin:~# devpi use root/prod

Warning: insecure http host, trusted-host will be set for pip

current devpi index: http://192.168.10.10:3141/root/prod (logged in as root)

supported features: push-no-docs, push-only-docs, push-register-project, server-keyvalue-parsing

/root/.config/pip/pip.conf: no config file exists

/root/.config/uv/uv.toml: no config file exists

/root/.pydistutils.cfg: no config file exists

/root/.buildout/default.cfg: no config file exists

always-set-cfg: no

# Upload the packages to the root/prod index (repository) on the devpi server

root@admin:~# devpi upload /tmp/pypi-packages/*

file_upload of jmespath-1.1.0-py3-none-any.whl to http://192.168.10.10:3141/root/prod/

file_upload of netaddr-1.3.0-py3-none-any.whl to http://192.168.10.10:3141/root/prod/

# Check where the uploaded packages are actually stored

root@admin:~# tree /data/devpi_data/+files/

/data/devpi_data/+files/

└── root

└── prod

└── +f

├── a56

│ └── 63118de4908c9

│ └── jmespath-1.1.0-py3-none-any.whl

└── c2c

└── 6a8ebe5554ce3

└── netaddr-1.3.0-py3-none-any.whl

8 directories, 2 files

[k8s-node] Configure and use pip

# One-off usage

root@k8s-node1:~# pip list | grep -i jmespath

root@k8s-node1:~# pip install jmespath --index-url http://192.168.10.10:3141/root/prod/+simple --trusted-host 192.168.10.10

Looking in indexes: http://192.168.10.10:3141/root/prod/+simple

Collecting jmespath

Downloading http://192.168.10.10:3141/root/prod/%2Bf/a56/63118de4908c9/jmespath-1.1.0-py3-none-any.whl (20 kB)

Installing collected packages: jmespath

Successfully installed jmespath-1.1.0

WARNING: Running pip as the 'root' user can result in broken permissions and conflicting behaviour with the system package manager. It is recommended to use a virtual environment instead: https://pip.pypa.io/warnings/venv

root@k8s-node1:~# pip list | grep -i jmespath

jmespath 1.1.0
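Passing `--index-url` and `--trusted-host` on every call gets tedious; on Linux, pip also reads a global `/etc/pip.conf`. A minimal sketch that writes one pointing at the devpi index, assuming the `root/prod` index and port 3141 from above; `write_pip_conf` is a hypothetical helper.

```shell
#!/usr/bin/env bash
# Hypothetical helper: write a pip config that uses a devpi index instead of pypi.org.
# trusted-host is needed because the devpi server is served over plain HTTP.
write_pip_conf() {
  local conf="$1" host="$2" port="${3:-3141}" index="${4:-root/prod}"
  mkdir -p "$(dirname "$conf")"
  cat > "$conf" <<EOF
[global]
index-url = http://$host:$port/$index/+simple/
trusted-host = $host
EOF
}

# Usage on a k8s-node; afterwards a plain `pip install jmespath` goes through devpi:
# write_pip_conf /etc/pip.conf 192.168.10.10
```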

# Try installing a package that has not been uploaded to devpi-server: succeeds!

root@k8s-node1:~# pip install cryptography

[admin] Clean up for the next exercise

root@admin:~# pkill -f "devpi-server --serverdir /data/devpi_data"

[k8s-node] Clean up for the next exercise

root@k8s-node1:~# rm -rf /etc/pip.conf

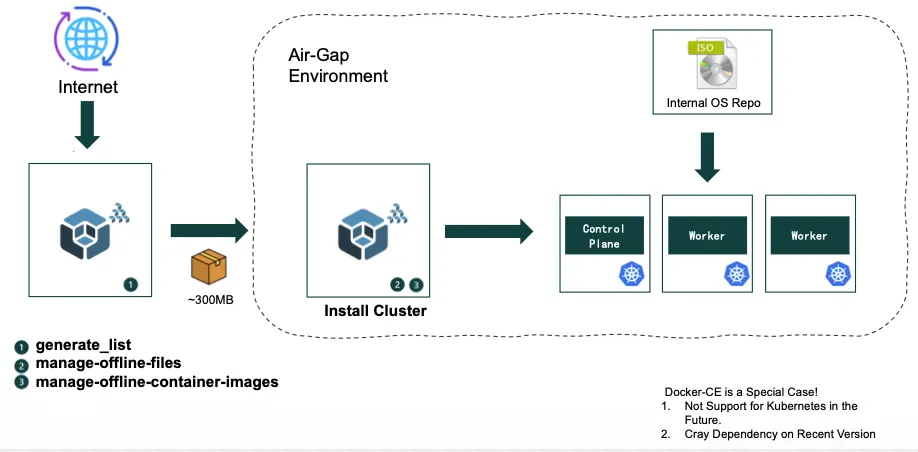

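Introduction to kubespray offline installation

kubespray provides conveniences for deploying k8s in an offline environment:

- Generates the list of files to download and the list of container images, each in its own file

- Downloads the container images needed for offline deployment and registers (pushes) them to an image registry

- Downloads the listed files and runs an Nginx container that serves them for download

kubespray offline installation lab

Download everything with download-all.sh in an online environment

→ Move outputs to the offline node

→ Set up local infrastructure (nginx, registry) with the target scripts

→ Deploy the cluster with kubespray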

# download-all.sh

#!/bin/bash

run() {

echo "=> Running: $*"

$* || {

echo "Failed in : $*"

exit 1

}

}

source ./config.sh

#run ./install-docker.sh

#run ./install-nerdctl.sh

run ./precheck.sh

run ./prepare-pkgs.sh || exit 1

run ./prepare-py.sh

run ./get-kubespray.sh

if $ansible_in_container; then

run ./build-ansible-container.sh

else

run ./pypi-mirror.sh

fi

run ./download-kubespray-files.sh

run ./download-additional-containers.sh

run ./create-repo.sh

run ./copy-target-scripts.sh

echo "Done."

The sub-scripts called by download-all.sh are as follows.

1. prepare-pkgs.sh

Prepares system packages. Installs the base system packages needed for the offline download, such as Python and podman (or containerd/nerdctl).

2. prepare-py.sh

Sets up the Python environment. Creates a Python venv (virtual environment) and installs the Python packages kubespray requires.

3. get-kubespray.sh

Downloads the Kubespray source. If KUBESPRAY_DIR does not exist, it downloads and extracts the kubespray source, and applies any required patches.

4. Conditional: build-ansible-container.sh or pypi-mirror.sh

- If ansible_in_container is true → build-ansible-container.sh: builds a container image for running Ansible.

- If ansible_in_container is false → pypi-mirror.sh: builds a local PyPI mirror of the Python packages kubespray needs, so that pip install works in the offline environment.

5. download-kubespray-files.sh

Downloads the core binaries and container images. Internally it runs kubespray's generate_list.sh to produce files.list (binary URL list) and images.list (container image list), then downloads those binaries (kubeadm, kubelet, kubectl, CNI plugins, etcd, crictl, etc.). Container images are pulled and saved by calling download-images.sh internally.

6. download-additional-containers.sh

Downloads additional container images listed in the imagelists/*.txt files. To add custom images (e.g. for monitoring or logging), put their repoTag in these text files.

7. create-repo.sh

Creates the OS package repository. Depending on the target OS, it downloads RPM (RHEL/Rocky/Alma) or DEB (Ubuntu) packages and builds a local repository so that offline nodes can install packages with yum/apt.

8. copy-target-scripts.sh

Copies the target-node scripts. Copies the scripts to be run on the target nodes in the offline environment into the outputs/ directory.

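All artifacts are stored in the ./outputs/ directory. Move this directory to the offline target node, then run the scripts below in order to set up the cluster.

The download-all.sh sub-scripts run on an online machine with Internet access. Their purpose is to download everything the offline installation needs (binaries, container images, OS packages, PyPI packages, etc.) in advance and collect it in the ./outputs/ directory.

The target-node scripts run on the offline node without Internet access, after the ./outputs/ directory has been moved there via USB, SCP, etc. Their purpose is to build local infrastructure (nginx file server, private registry, etc.) from the downloaded files.

Change into the outputs directory and run setup-container.sh, which in turn runs install-containerd.sh. The script and the target-node scripts are as follows.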

# setup-container.sh

#!/bin/bash

# install containerd

./install-containerd.sh

# Load images

echo "==> Load registry, nginx images"

NERDCTL=/usr/local/bin/nerdctl

cd ./images

for f in docker.io_library_registry-*.tar.gz docker.io_library_nginx-*.tar.gz; do

sudo $NERDCTL load -i $f || exit 1

done

if [ -f kubespray-offline-container.tar.gz ]; then

sudo $NERDCTL load -i kubespray-offline-container.tar.gz || exit 1

fi

1. setup-container.sh

Installs containerd from local files and loads the nginx/registry container images into containerd.

2. start-nginx.sh

Starts the nginx container, a local file server that serves the binaries and OS packages.

3. setup-offline.sh

Points the yum/deb repo paths and the PyPI mirror settings at the local nginx server.

4. setup-py.sh

Installs python3 and venv from the local repo.

5. start-registry.sh

Starts the Docker private registry container.

6. load-push-all-images.sh

Loads the saved container images into containerd, then tags and pushes them to the private registry.
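The six target scripts must run in the listed order, and a later step is pointless if an earlier one fails. A minimal sketch of a driver in the style of the `run()` helper in download-all.sh, assuming the scripts sit in the outputs directory and are executable; the driver is hypothetical and not taken from the kubespray-offline project.

```shell
#!/usr/bin/env bash
# Hypothetical driver: run target scripts in order from <dir>, stopping at the first failure.
run_target_scripts() {
  local dir="$1"
  shift
  local s
  for s in "$@"; do
    echo "=> Running: $s"
    # Run in a subshell so each script starts in <dir> and the caller's cwd is untouched
    ( cd "$dir" && "./$s" ) || { echo "Failed in : $s" >&2; return 1; }
  done
}

# Order from the list above; the last name matches the script executed later in this post.
TARGET_SCRIPTS="setup-container.sh start-nginx.sh setup-offline.sh setup-py.sh start-registry.sh load-push-all-images.sh"

# Usage (word splitting of $TARGET_SCRIPTS is intentional):
# run_target_scripts ~/kubespray-offline/outputs $TARGET_SCRIPTS
```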

# Run setup-container.sh

root@admin:~/kubespray-offline/outputs# ./setup-container.sh

# Run start-nginx.sh

root@admin:~/kubespray-offline/outputs# ./start-nginx.sh

===> Stop nginx

===> Start nginx

db995aecc4a109d1eb492b334507615ebf79d4f4106fa16289e472dfa51a5d3d

root@admin:~/kubespray-offline/outputs# nerdctl ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

db995aecc4a1 docker.io/library/nginx:1.29.4 "/docker-entrypoint.…" 14 seconds ago Up nginx

root@admin:~/kubespray-offline/outputs# ss -tnlp | grep nginx

LISTEN 0 511 0.0.0.0:80 0.0.0.0:* users:(("nginx",pid=21689,fd=6),("nginx",pid=21688,fd=6),("nginx",pid=21687,fd=6),("nginx",pid=21686,fd=6),("nginx",pid=21652,fd=6))

LISTEN 0 511 [::]:80 [::]:* users:(("nginx",pid=21689,fd=7),("nginx",pid=21688,fd=7),("nginx",pid=21687,fd=7),("nginx",pid=21686,fd=7),("nginx",pid=21652,fd=7))

# Run setup-offline.sh

root@admin:~/kubespray-offline/outputs# dnf repolist

repo id repo name

appstream Rocky Linux 10 - AppStream

baseos Rocky Linux 10 - BaseOS

extras Rocky Linux 10 - Extras

root@admin:~/kubespray-offline/outputs# cat /etc/redhat-release

Rocky Linux release 10.0 (Red Quartz)

# Run the script: Setup yum/deb repo config and PyPI mirror config to use local nginx server.

root@admin:~/kubespray-offline/outputs# ./setup-offline.sh

/bin/rm: cannot remove '/etc/yum.repos.d/offline.repo': No such file or directory

===> Disable all yumrepositories

===> Setup local yum repository

[offline-repo]

name=Offline repo

baseurl=http://localhost/rpms/local/

enabled=1

gpgcheck=0

===> Setup PyPI mirror

# Confirm that the existing repo files were renamed to *.original and offline.repo was added

root@admin:~/kubespray-offline/outputs# tree /etc/yum.repos.d/

/etc/yum.repos.d/

├── offline.repo

├── rocky-addons.repo.original

├── rocky-devel.repo.original

├── rocky-extras.repo.original

└── rocky.repo.original

1 directory, 5 files

# Check the offline.repo file

root@admin:~/kubespray-offline/outputs# cat /etc/yum.repos.d/offline.repo

[offline-repo]

name=Offline repo

baseurl=http://localhost/rpms/local/

enabled=1

gpgcheck=0

# Check the offline repo

root@admin:~/kubespray-offline/outputs# dnf clean all

18 files removed

root@admin:~/kubespray-offline/outputs# dnf repolist

repo id repo name

offline-repo Offline repo
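setup-offline.sh disables the online repos by renaming them to `*.original` rather than deleting them, so the change can be reverted. A minimal sketch of the reverse step (for example, to reconnect the admin server to the online mirrors), assuming only the file layout shown above; `restore_original_repos` is hypothetical and not part of kubespray-offline.

```shell
#!/usr/bin/env bash
# Hypothetical helper: undo the repo switch by removing offline.repo and
# renaming every *.repo.original back to *.repo.
restore_original_repos() {
  local dir="${1:-/etc/yum.repos.d}" f
  rm -f "$dir/offline.repo"
  for f in "$dir"/*.repo.original; do
    [ -e "$f" ] || continue   # no match: the glob stays literal
    mv "$f" "${f%.original}"
  done
}

# Usage on admin, then refresh the metadata:
# restore_original_repos && dnf clean all && dnf repolist
```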

# pip config for the current user (~/.config/pip/pip.conf): check the PyPI mirror settings

root@admin:~/kubespray-offline/outputs# cat ~/.config/pip/pip.conf

[global]

index = http://localhost/pypi/

index-url = http://localhost/pypi/

trusted-host = localhost

# Run setup-py.sh

root@admin:~/kubespray-offline/outputs# ./setup-py.sh

===> Install python, venv, etc

Offline repo 11 MB/s | 85 kB 00:00

Package python3-3.12.12-3.el10_1.aarch64 is already installed.

Dependencies resolved.

Nothing to do.

Complete!

# Check the variable

root@admin:~/kubespray-offline/outputs# source pyver.sh

root@admin:~/kubespray-offline/outputs# echo "python_version ${PY}"

python_version 3.12

# Check the package files in offline-repo

root@admin:~/kubespray-offline/outputs# dnf info python3

Last metadata expiration check: 0:00:39 ago on Sun 15 Feb 2026 03:56:17 AM KST.

Installed Packages

Name : python3

Version : 3.12.12

Release : 3.el10_1

Architecture : aarch64

Size : 83 k

Source : python3.12-3.12.12-3.el10_1.src.rpm

Repository : @System

From repo : baseos

Summary : Python 3.12 interpreter

URL : https://www.python.org/

License : Python-2.0.1

Description : Python 3.12 is an accessible, high-level, dynamically typed, interpreted

: programming language, designed with an emphasis on code readability.

: It includes an extensive standard library, and has a vast ecosystem of

: third-party libraries.

:

: The python3 package provides the "python3" executable: the reference

: interpreter for the Python language, version 3.

: The majority of its standard library is provided in the python3-libs package,

: which should be installed automatically along with python3.

: The remaining parts of the Python standard library are broken out into the

: python3-tkinter and python3-test packages, which may need to be installed

: separately.

:

: Documentation for Python is provided in the python3-docs package.

:

: Packages containing additional libraries for Python are generally named with

: the "python3-" prefix.

root@admin:~/kubespray-offline/outputs# tree rpms/local/ | grep -i python

├── libcap-ng-python3-0.8.4-6.el10.aarch64.rpm

├── python3-3.12.12-3.el10_1.aarch64.rpm

├── python3-dateutil-2.9.0.post0-1.el10_0.noarch.rpm

├── python3-dbus-1.3.2-8.el10.aarch64.rpm

├── python3-devel-3.12.12-3.el10_1.aarch64.rpm

├── python3-dnf-4.20.0-18.el10.rocky.0.1.noarch.rpm

├── python3-dnf-plugins-core-4.7.0-9.el10.noarch.rpm

├── python3-dnf-plugin-versionlock-4.7.0-9.el10.noarch.rpm

├── python3-firewall-2.3.1-1.el10_0.noarch.rpm

├── python3-gobject-base-3.46.0-7.el10.aarch64.rpm

├── python3-gobject-base-noarch-3.46.0-7.el10.noarch.rpm

├── python3-hawkey-0.73.1-12.el10.rocky.0.1.aarch64.rpm

├── python3-libcomps-0.1.21-3.el10.aarch64.rpm

├── python3-libdnf-0.73.1-12.el10.rocky.0.1.aarch64.rpm

├── python3-libs-3.12.12-3.el10_1.aarch64.rpm

├── python3-libselinux-3.9-1.el10.aarch64.rpm

├── python3-nftables-1.1.1-6.el10.aarch64.rpm

├── python3-pip-23.3.2-7.el10.noarch.rpm

├── python3-pip-wheel-23.3.2-7.el10.noarch.rpm

├── python3-rpm-4.19.1.1-20.el10.aarch64.rpm

├── python3-six-1.16.0-16.el10.noarch.rpm

├── python3-systemd-235-11.el10.aarch64.rpm

├── python-unversioned-command-3.12.12-3.el10_1.noarch.rpm

# Run start-registry.sh

root@admin:~/kubespray-offline/outputs# ./start-registry.sh

===> Start registry

0af8f147ba4fd2efc9b09f8a1adbbfe9a59c2dd29e6b7ea6b4d07aea6613c671

# Check the related variables

root@admin:~/kubespray-offline/outputs# source config.sh

root@admin:~/kubespray-offline/outputs# echo -e "registry_port: $REGISTRY_PORT"

registry_port: 35000

# Verify

root@admin:~/kubespray-offline/outputs# nerdctl ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

0af8f147ba4f docker.io/library/registry:3.0.0 "/entrypoint.sh /etc…" 34 seconds ago Up registry

db995aecc4a1 docker.io/library/nginx:1.29.4 "/docker-entrypoint.…" 5 minutes ago Up nginx

root@admin:~/kubespray-offline/outputs# ss -tnlp | grep registry

LISTEN 0 4096 *:35000 *:* users:(("registry",pid=21814,fd=7))

LISTEN 0 4096 *:5001 *:* users:(("registry",pid=21814,fd=3))

# No (container) images are stored in the registry yet: (Note) REGISTRY_DIR=${REGISTRY_DIR:-/var/lib/registry}

root@admin:~/kubespray-offline/outputs# tree /var/lib/registry/

/var/lib/registry/

0 directories, 0 files

# Run load-push-all-images.sh

# Check the related variables before running the script

root@admin:~/kubespray-offline/outputs# echo -e "cpu arch: $IMAGE_ARCH"

cpu arch: arm64

# (Optional) Set any additional registries needed besides 'registry.k8s.io k8s.gcr.io gcr.io ghcr.io docker.io quay.io'

root@admin:~/kubespray-offline/outputs# echo -e "Additional container registry hosts: $ADDITIONAL_CONTAINER_REGISTRY_LIST"

Additional container registry hosts: myregistry.io

# .tar.gz files to be loaded with nerdctl load

root@admin:~/kubespray-offline/outputs# ls -l images/*.tar.gz

-rw-r--r--. 1 root root 10068768 Feb 15 03:03 images/docker.io_amazon_aws-alb-ingress-controller-v1.1.9.tar.gz

-rw-r--r--. 1 root root 175407403 Feb 15 03:04 images/docker.io_amazon_aws-ebs-csi-driver-v0.5.0.tar.gz

-rw-r--r--. 1 root root 95707259 Feb 15 03:00 images/docker.io_cloudnativelabs_kube-router-v2.1.1.tar.gz

-rw-r--r--. 1 root root 4978225 Feb 15 02:58 images/docker.io_flannel_flannel-cni-plugin-v1.7.1-flannel1.tar.gz

-rw-r--r--. 1 root root 32159259 Feb 15 02:57 images/docker.io_flannel_flannel-v0.27.3.tar.gz

-rw-r--r--. 1 root root 160658472 Feb 15 03:00 images/docker.io_kubeovn_kube-ovn-v1.12.21.tar.gz

-rw-r--r--. 1 root root 73788930 Feb 15 03:05 images/docker.io_kubernetesui_dashboard-v2.7.0.tar.gz

-rw-r--r--. 1 root root 18013963 Feb 15 03:05 images/docker.io_kubernetesui_metrics-scraper-v1.0.8.tar.gz

-rw-r--r--. 1 root root 14991749 Feb 15 03:01 images/docker.io_library_haproxy-3.2.4-alpine.tar.gz

-rw-r--r--. 1 root root 20891493 Feb 15 03:01 images/docker.io_library_nginx-1.28.0-alpine.tar.gz

-rw-r--r--. 1 root root 60177566 Feb 15 03:06 images/docker.io_library_nginx-1.29.4.tar.gz

-rw-r--r--. 1 root root 8986338 Feb 15 03:02 images/docker.io_library_registry-2.8.1.tar.gz

-rw-r--r--. 1 root root 17299815 Feb 15 03:06 images/docker.io_library_registry-3.0.0.tar.gz
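The archive names above appear to follow a simple pattern: the image reference with `/` turned into `_` and the `:` before the tag turned into `-` (e.g. `docker.io/library/nginx:1.29.4` → `docker.io_library_nginx-1.29.4.tar.gz`). This is inferred from the listing, not from the script source. Under that assumption, a small sketch can check which entries of an image list have no archive yet; both function names are hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helper: map an image reference (registry/path:tag) to the archive name
# pattern observed in images/ above. Assumes the reference always has an explicit tag.
image_to_archive_name() {
  local ref="$1" name tag
  name="${ref%:*}"   # everything before the last ':' (the tag separator)
  tag="${ref##*:}"   # the tag itself
  printf '%s-%s.tar.gz\n' "$(printf '%s' "$name" | tr '/' '_')" "$tag"
}

# Hypothetical check: print image refs from <list file> whose archive is missing in <dir>.
missing_archives() {
  local list="$1" dir="$2" ref
  while IFS= read -r ref; do
    case "$ref" in ''|'#'*) continue ;; esac   # skip blank lines and comments
    [ -f "$dir/$(image_to_archive_name "$ref")" ] || echo "$ref"
  done < "$list"
}

# Usage (my-images.txt is a hypothetical custom list under imagelists/):
# missing_archives imagelists/my-images.txt images
```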

# (Troubleshooting) Mac users: add the following, then rerun the script

vi load-push-all-images.sh

# Add the following -----------------------

...

load_images() {

for image in $BASEDIR/images/*.tar.gz; do

echo "===> Loading $image"

sudo $NERDCTL load --all-platforms -i $image || exit 1

done

...

root@admin:~/kubespray-offline/outputs# ./load-push-all-images.sh

root@admin:~/kubespray-offline/outputs# nerdctl images

REPOSITORY TAG IMAGE ID CREATED PLATFORM SIZE BLOB SIZE

localhost:35000/kube-proxy v1.34.3 fa5ed2c96dd3 30 seconds ago linux/arm64 78.05MB 75.94MB

localhost:35000/kube-scheduler v1.34.3 985575f183de 30 seconds ago linux/arm64 53.34MB 51.59MB

localhost:35000/kube-controller-manager v1.34.3 354700b61969 31 seconds ago linux/arm64 74.38MB 72.62MB

localhost:35000/kube-apiserver v1.34.3 dece5cf2dd3b 31 seconds ago linux/arm64 86.56MB 84.81MB

localhost:35000/metallb/controller v0.13.9 b724b69a4c9b 32 seconds ago linux/arm64 63.12MB 63.11MB

localhost:35000/metallb/speaker v0.13.9 51f18d4f5d4d 32 seconds ago linux/arm64 111.1MB 111.1MB

localhost:35000/kubernetesui/metrics-scraper v1.0.8 9115322001e6 33 seconds ago linux/amd64 42.26MB 42.26MB

localhost:35000/kubernetesui/dashboard v2.7.0 c353af0aa3a0 34 seconds ago linux/arm64 256.1MB 247.6MB

localhost:35000/amazon/aws-ebs-csi-driver v0.5.0 ff65db28333e 36 seconds ago linux/amd64 456.9MB 444.1MB

localhost:35000/provider-os/cinder-csi-plugin v1.30.0 f4caa8cc697d 36 seconds ago linux/arm64 63.05MB 61.21MB

localhost:35000/sig-storage/csi-node-driver-registrar v2.4.0 9124c121892e 36 seconds ago linux/arm64 22.41MB 20.25MB

localhost:35000/sig-storage/livenessprobe v2.11.0 1b1ba6eb5c8d 37 seconds ago linux/arm64 22.25MB 20.14MB

localhost:35000/sig-storage/csi-resizer v1.9.2 8f191a7ec9cc 37 seconds ago linux/arm64 64.17MB 62.06MB

localhost:35000/sig-storage/snapshot-controller v7.0.2 f23cbdb6dd3f 38 seconds ago linux/arm64 62.28MB 59.56MB

localhost:35000/sig-storage/csi-snapshotter v6.3.2 2ba4692b39fc 38 seconds ago linux/arm64 63.98MB 61.87MB

localhost:35000/sig-storage/csi-provisioner v3.6.2 b34169e8b528 39 seconds ago linux/arm64 67.74MB 65.63MB

localhost:35000/sig-storage/csi-attacher v4.4.2 2aa9f6446ccd 39 seconds ago linux/arm64 63.48MB 61.37MB

localhost:35000/jetstack/cert-manager-webhook v1.15.3 2d91656807bb 40 seconds ago linux/arm64 58.15MB 56.39MB

localhost:35000/jetstack/cert-manager-cainjector v1.15.3 a13418dc926e 40 seconds ago linux/arm64 44.65MB 42.89MB

localhost:35000/jetstack/cert-manager-controller v1.15.3 5114bfbeac23 40 seconds ago linux/arm64 67.13MB 65.37MB

localhost:35000/amazon/aws-alb-ingress-controller v1.1.9 e88f69a74c30 41 seconds ago linux/arm64 37.9MB 37.91MB

localhost:35000/ingress-nginx/controller v1.13.3 68a587e5104f 42 seconds ago linux/arm64 336.3MB 334.2MB

localhost:35000/rancher/local-path-provisioner v0.0.32 4a3d51575c84 43 seconds ago linux/arm64 61.37MB 61.35MB

localhost:35000/sig-storage/local-volume-provisioner v2.5.0 d158fd9f3579 43 seconds ago linux/arm64 134.7MB 130.4MB

localhost:35000/metrics-server/metrics-server v0.8.0 87ccea7af925 44 seconds ago linux/arm64 82.58MB 80.84MB

localhost:35000/library/registry 2.8.1 b1524398e0af 44 seconds ago linux/arm64 25.85MB 25.68MB

localhost:35000/cpa/cluster-proportional-autoscaler v1.8.8 4146047e636f 44 seconds ago linux/arm64 39.98MB 37.86MB

localhost:35000/dns/k8s-dns-node-cache 1.25.0 7071feee8b70 45 seconds ago linux/arm64 90.54MB 88.43MB

localhost:35000/coredns/coredns v1.12.1 e674cf21adf3 46 seconds ago linux/arm64 74.94MB 73.19MB

localhost:35000/library/haproxy 3.2.4-alpine 71268591d942 46 seconds ago linux/arm64 33.57MB 33.37MB

localhost:35000/library/nginx 1.28.0-alpine bcb5257f77e1 46 seconds ago linux/arm64 52.73MB 51.23MB

localhost:35000/kube-vip/kube-vip v1.0.3 133301efa7cb 47 seconds ago linux/arm64 58.69MB 58.69MB

localhost:35000/pause 3.10.1 3f85f9d8a6bc 47 seconds ago linux/arm64 516.1kB 516.9kB

localhost:35000/cloudnativelabs/kube-router v2.1.1 9b0b03a20f4b 48 seconds ago linux/arm64 216.7MB 216.1MB

localhost:35000/kubeovn/kube-ovn v1.12.21 c93b1a2ecea3 50 seconds ago linux/arm64 513.6MB 501.2MB

localhost:35000/calico/apiserver v3.30.6 b15f366465da 51 seconds ago linux/arm64 113.8MB 113.8MB

localhost:35000/calico/typha v3.30.6 3f421c499d47 51 seconds ago linux/arm64 82.42MB 82.4MB

localhost:35000/calico/kube-controllers v3.30.6 7639e6dca06e 52 seconds ago linux/arm64 117.4MB 117.4MB

localhost:35000/calico/cni v3.30.6 0aa5f9cab8de 53 seconds ago linux/arm64 157.3MB 157.3MB

localhost:35000/calico/node v3.30.6 742471336dda 54 seconds ago linux/arm64 401.5MB 400MB

localhost:35000/flannel/flannel-cni-plugin v1.7.1-flannel1 332db17b4c4a 54 seconds ago linux/arm64 11.39MB 11.37MB

localhost:35000/flannel/flannel v0.27.3 3b36a8d4db19 55 seconds ago linux/arm64 102.6MB 101.5MB

localhost:35000/k8snetworkplumbingwg/multus-cni v4.2.2 db44f5c1fd8e 57 seconds ago linux/arm64 497.5MB 495.3MB

localhost:35000/cilium/cilium-envoy v1.34.10-1762597008-ff7ae7d623be00078865cff1b0672cc5d9bfc6d5 f8eeaa2e6cbe 58 seconds ago linux/arm64 206.8MB 201.9MB

localhost:35000/cilium/hubble-ui-backend v0.13.3 1b0a4d764eaf 59 seconds ago linux/arm64 68.89MB 67.14MB

localhost:35000/cilium/hubble-ui v0.13.3 637e4d5a05ac 59 seconds ago linux/arm64 35.56MB 31.92MB

localhost:35000/cilium/certgen v0.2.4 e3403ce43031 59 seconds ago linux/arm64 58.68MB 58.68MB

localhost:35000/cilium/hubble-relay v1.18.6 5c66f4ecc18e About a minute ago linux/arm64 92.61MB 90.86MB

localhost:35000/cilium/operator v1.18.6 7bba55333f30 About a minute ago linux/arm64 258MB 258MB

localhost:35000/cilium/cilium v1.18.6 88b892a5f89b About a minute ago linux/arm64 732.4MB 725.2MB

localhost:35000/coreos/etcd v3.5.26 4b003fe9069c About a minute ago linux/arm64 66.06MB 63.34MB

localhost:35000/mirantis/k8s-netchecker-agent v1.2.2 e07c83f8f083 About a minute ago linux/amd64 5.681MB 5.856MB

localhost:35000/mirantis/k8s-netchecker-server v1.2.2 8e0ef348cf54 About a minute ago linux/amd64 125.8MB 123.7MB

localhost:35000/library/registry 3.0.0 496d3637ba81 About a minute ago linux/arm64 57.52MB 57.34MB

localhost:35000/library/nginx 1.29.4 4c333d291372 About a minute ago linux/arm64 190.7MB 184MB

registry.k8s.io/sig-storage/snapshot-controller v7.0.2 f23cbdb6dd3f About a minute ago linux/arm64 62.28MB 59.56MB

registry.k8s.io/sig-storage/local-volume-provisioner v2.5.0 d158fd9f3579 About a minute ago linux/arm64 134.7MB 130.4MB

registry.k8s.io/sig-storage/livenessprobe v2.11.0 1b1ba6eb5c8d About a minute ago linux/arm64 22.25MB 20.14MB

registry.k8s.io/sig-storage/csi-snapshotter v6.3.2 2ba4692b39fc About a minute ago linux/arm64 63.98MB 61.87MB

registry.k8s.io/sig-storage/csi-resizer v1.9.2 8f191a7ec9cc About a minute ago linux/arm64 64.17MB 62.06MB

registry.k8s.io/sig-storage/csi-provisioner v3.6.2 b34169e8b528 About a minute ago linux/arm64 67.74MB 65.63MB

registry.k8s.io/sig-storage/csi-node-driver-registrar v2.4.0 9124c121892e About a minute ago linux/arm64 22.41MB 20.25MB

registry.k8s.io/sig-storage/csi-attacher v4.4.2 2aa9f6446ccd About a minute ago linux/arm64 63.48MB 61.37MB

registry.k8s.io/provider-os/cinder-csi-plugin v1.30.0 f4caa8cc697d About a minute ago linux/arm64 63.05MB 61.21MB

registry.k8s.io/pause 3.10.1 3f85f9d8a6bc About a minute ago linux/arm64 516.1kB 516.9kB

registry.k8s.io/metrics-server/metrics-server v0.8.0 87ccea7af925 About a minute ago linux/arm64 82.58MB 80.84MB

registry.k8s.io/kube-scheduler v1.34.3 985575f183de About a minute ago linux/arm64 53.34MB 51.59MB

registry.k8s.io/kube-proxy v1.34.3 fa5ed2c96dd3 About a minute ago linux/arm64 78.05MB 75.94MB

registry.k8s.io/kube-controller-manager v1.34.3 354700b61969 About a minute ago linux/arm64 74.38MB 72.62MB

registry.k8s.io/kube-apiserver v1.34.3 dece5cf2dd3b About a minute ago linux/arm64 86.56MB 84.81MB

registry.k8s.io/ingress-nginx/controller v1.13.3 68a587e5104f About a minute ago linux/arm64 336.3MB 334.2MB

registry.k8s.io/dns/k8s-dns-node-cache 1.25.0 7071feee8b70 About a minute ago linux/arm64 90.54MB 88.43MB

registry.k8s.io/cpa/cluster-proportional-autoscaler v1.8.8 4146047e636f About a minute ago linux/arm64 39.98MB 37.86MB

registry.k8s.io/coredns/coredns v1.12.1 e674cf21adf3 About a minute ago linux/arm64 74.94MB 73.19MB

quay.io/metallb/speaker v0.13.9 51f18d4f5d4d About a minute ago linux/arm64 111.1MB 111.1MB

quay.io/metallb/controller v0.13.9 b724b69a4c9b About a minute ago linux/arm64 63.12MB 63.11MB

quay.io/jetstack/cert-manager-webhook v1.15.3 2d91656807bb About a minute ago linux/arm64 58.15MB 56.39MB

quay.io/jetstack/cert-manager-controller v1.15.3 5114bfbeac23 About a minute ago linux/arm64 67.13MB 65.37MB

quay.io/jetstack/cert-manager-cainjector v1.15.3 a13418dc926e About a minute ago linux/arm64 44.65MB 42.89MB

quay.io/coreos/etcd v3.5.26 4b003fe9069c About a minute ago linux/arm64 66.06MB 63.34MB

quay.io/cilium/operator v1.18.6 7bba55333f30 About a minute ago linux/arm64 258MB 258MB

quay.io/cilium/hubble-ui v0.13.3 637e4d5a05ac About a minute ago linux/arm64 35.56MB 31.92MB

quay.io/cilium/hubble-ui-backend v0.13.3 1b0a4d764eaf About a minute ago linux/arm64 68.89MB 67.14MB

quay.io/cilium/hubble-relay v1.18.6 5c66f4ecc18e About a minute ago linux/arm64 92.61MB 90.86MB

quay.io/cilium/cilium v1.18.6 88b892a5f89b About a minute ago linux/arm64 732.4MB 725.2MB

quay.io/cilium/cilium-envoy v1.34.10-1762597008-ff7ae7d623be00078865cff1b0672cc5d9bfc6d5 f8eeaa2e6cbe About a minute ago linux/arm64 206.8MB 201.9MB

quay.io/cilium/certgen v0.2.4 e3403ce43031 About a minute ago linux/arm64 58.68MB 58.68MB

quay.io/calico/typha v3.30.6 3f421c499d47 About a minute ago linux/arm64 82.42MB 82.4MB